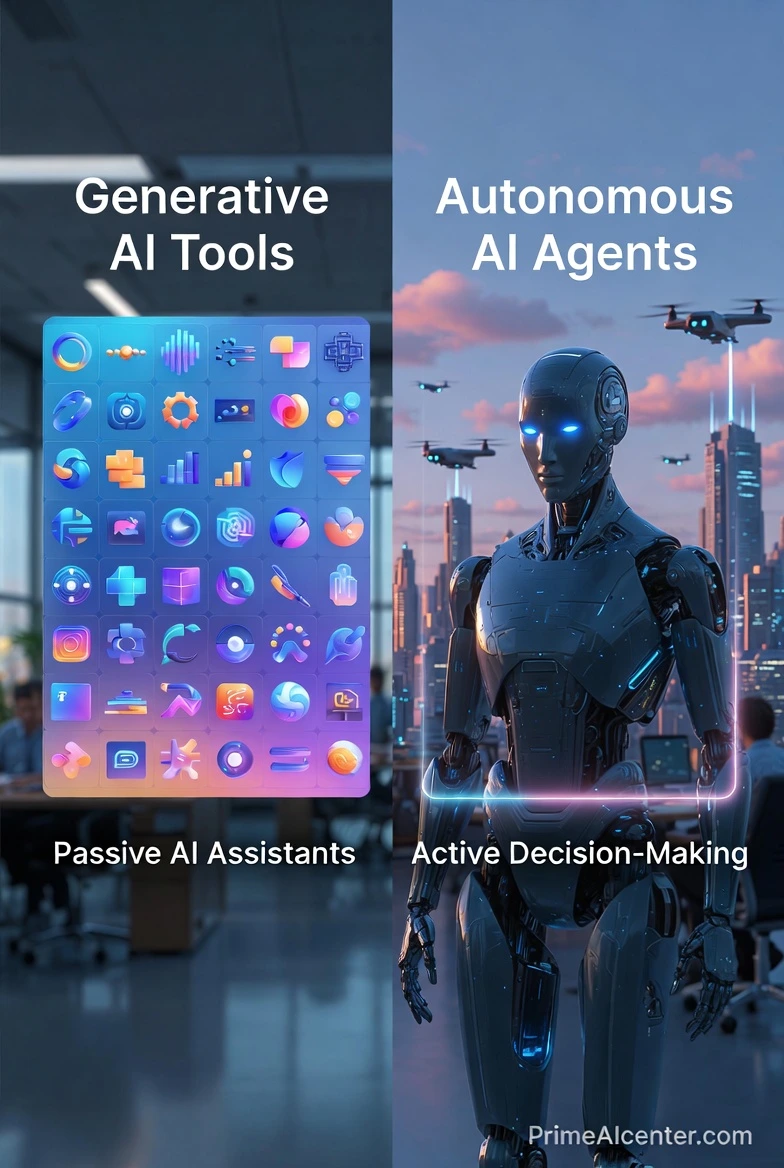

Enterprise AI Agent Deployment represents a structural shift in how organizations design, deploy, and govern intelligent systems. Enterprises are moving beyond static generative AI tools and toward autonomous, goal-driven AI agents capable of reasoning, planning, and executing tasks across complex digital environments. This transition is not cosmetic. It fundamentally alters enterprise software architecture, operating models, and risk profiles.

Early generative AI implementations focused on content generation and conversational interfaces. These systems were reactive, single-turn, and largely disconnected from enterprise systems of record. Agentic AI changes this paradigm. AI agents operate with memory, context, tool access, and decision-making loops. They interact with APIs, databases, workflows, and even other agents. As a result, enterprises must rethink deployment models, security controls, data governance, and ROI measurement.

This guide provides a deeply technical, enterprise-grade framework for Enterprise AI Agent Deployment. It is written for CTOs, IT leaders, and enterprise architects responsible for production systems, regulatory compliance, and long-term scalability. The focus is not experimentation, but operationalization at scale.

The Shift from Generative AI to Agentic AI in the Enterprise

Generative AI systems are fundamentally stateless. They receive a prompt, generate a response, and terminate. While powerful, this model limits enterprise value. Agentic AI systems introduce persistence, autonomy, and orchestration. An AI agent can decompose objectives, invoke tools, retrieve knowledge, validate outputs, and iterate until completion.

In an enterprise context, this shift enables automation of end-to-end business processes rather than isolated tasks. Examples include autonomous financial reconciliation, dynamic supply chain rebalancing, and real-time compliance monitoring. These use cases require AI Agent Orchestration, integration with enterprise APIs, and continuous decision loops.

The implications are significant:

- AI systems move from assistive tools to operational actors.

- Failure modes shift from incorrect answers to incorrect actions.

- Security, auditability, and governance become non-negotiable.

- Scalability and cost control determine long-term ROI of AI initiatives.

This evolution makes Enterprise AI Agent Deployment as much an infrastructure challenge as an AI challenge.

Traditional Bots vs. Autonomous Enterprise AI Agents

Understanding the difference between legacy automation and modern AI agents is critical for architectural planning. The table below highlights the structural and operational differences.

| Dimension | Traditional Bots / RPA | Autonomous Enterprise AI Agents |
|---|---|---|
| Decision Logic | Rule-based, deterministic | Probabilistic reasoning using LLMs |
| Context Awareness | Limited to predefined inputs | Dynamic context via memory and RAG Architecture |
| Adaptability | Low, requires manual reconfiguration | High, can adapt strategies at runtime |
| Integration | UI-level automation | API Integration with core enterprise systems |
| Governance | Process-level audit logs | Full traceability across prompts, tools, and decisions |
| Scalability | Linear, infrastructure-heavy | Elastic, cloud-native scalability models |

While RPA remains useful for deterministic workflows, it cannot handle ambiguity, reasoning, or knowledge synthesis. Enterprise AI agents fill this gap but introduce new complexity in deployment, monitoring, and compliance.

The Core Architecture of Enterprise AI Agents

A production-grade enterprise AI agent is not a single model invocation. It is a distributed system composed of multiple architectural layers. At minimum, these systems include reasoning engines, retrieval mechanisms, tool interfaces, and orchestration logic.

Retrieval-Augmented Generation (RAG Architecture)

RAG Architecture is foundational to enterprise AI agents. Large language models do not inherently possess up-to-date or proprietary enterprise knowledge. RAG enables agents to retrieve relevant data from controlled sources at inference time.

In enterprise deployments, RAG typically involves:

- Document ingestion pipelines with metadata enrichment

- Embedding generation using domain-specific models

- Vector similarity search for contextual retrieval

- Context window optimization to control latency and cost

RAG directly impacts data governance. Only approved, auditable data sources should be indexed. Access controls must align with enterprise identity and role-based permissions.
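To make the link between retrieval and access control concrete, the following is a minimal sketch of role-filtered similarity search. Everything in it is an assumption for illustration: the `Chunk` type, the toy two-dimensional embeddings, and the role names are hypothetical, and a production system would delegate both filtering and ranking to its vector database.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    embedding: tuple[float, ...]
    allowed_roles: frozenset[str]  # hypothetical metadata added at ingestion time


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_embedding, chunks, caller_roles, top_k=2):
    """Drop chunks the caller may not see, then rank the rest by similarity."""
    visible = [c for c in chunks if c.allowed_roles & caller_roles]
    ranked = sorted(visible, key=lambda c: cosine(query_embedding, c.embedding), reverse=True)
    return ranked[:top_k]


INDEX = [
    Chunk("Q3 revenue summary", (0.9, 0.1), frozenset({"finance"})),
    Chunk("Employee handbook", (0.2, 0.8), frozenset({"finance", "hr"})),
    Chunk("Payroll export", (0.85, 0.2), frozenset({"hr"})),
]

results = retrieve((1.0, 0.0), INDEX, caller_roles=frozenset({"finance"}))
print([c.text for c in results])  # the payroll chunk is never a candidate
```

Filtering before ranking means a restricted chunk cannot reach the context window, no matter how similar it is to the query.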

Vector Databases and Knowledge Stores

Vector databases serve as the semantic memory layer for AI agents. Unlike traditional relational databases, they enable similarity-based retrieval, which is essential for reasoning over unstructured data.

Enterprise considerations for vector databases include:

- Multi-tenancy isolation

- Encryption at rest and in transit

- Hybrid search combining vector and keyword queries

- Data lifecycle management aligned with compliance requirements

Vector stores should be treated as regulated data assets, not experimental components. This is a common failure point in early Enterprise AI Agent Deployment projects.
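As a rough illustration of two of the considerations above, tenant isolation and hybrid search, the sketch below blends a vector score with a keyword-overlap score and skips records from other tenants. The record layout, the `alpha` weighting, and the tenant names are assumptions; real vector databases expose their own hybrid-search and namespace features.

```python
def keyword_score(query, text):
    """Fraction of query terms that also appear in the text."""
    query_terms = set(query.lower().split())
    text_terms = set(text.lower().split())
    return len(query_terms & text_terms) / len(query_terms) if query_terms else 0.0


def hybrid_search(query, query_vec, records, tenant_id, alpha=0.5):
    """Score = alpha * vector similarity + (1 - alpha) * keyword overlap."""
    scored = []
    for rec in records:
        if rec["tenant_id"] != tenant_id:
            continue  # tenant isolation: other tenants' records are never scored
        # Dot product; assumes the stored vectors are already unit-normalized.
        vector_score = sum(a * b for a, b in zip(query_vec, rec["vector"]))
        score = alpha * vector_score + (1 - alpha) * keyword_score(query, rec["text"])
        scored.append((round(score, 3), rec["id"]))
    return sorted(scored, reverse=True)


RECORDS = [
    {"id": "a1", "tenant_id": "acme", "text": "invoice dispute policy", "vector": (1.0, 0.0)},
    {"id": "a2", "tenant_id": "acme", "text": "travel expense policy", "vector": (0.0, 1.0)},
    {"id": "b1", "tenant_id": "globex", "text": "invoice dispute policy", "vector": (1.0, 0.0)},
]

hits = hybrid_search("invoice policy", (1.0, 0.0), RECORDS, tenant_id="acme")
print(hits)
```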

Reasoning Loops and Multi-agent Systems

Autonomous agents rely on iterative reasoning loops. These loops enable planning, execution, validation, and correction. In advanced deployments, multiple agents collaborate, each with specialized roles.

Examples of multi-agent patterns include:

- Planner agents that decompose objectives

- Executor agents that interact with tools and APIs

- Validator agents that check outputs for accuracy and compliance

Multi-agent Systems increase robustness but also increase orchestration complexity. Without clear control planes, these systems can become opaque and difficult to audit.
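The planner, executor, and validator roles can be sketched as a bounded loop. This is a simplified illustration, not a specific framework's API: `run_agent` and the toy callables are hypothetical stand-ins for LLM-backed components, and the iteration cap is an assumed safeguard against runaway loops.

```python
def run_agent(objective, planner, executor, validator, max_iterations=3):
    """Plan, execute, validate, and retry until valid or the cap is reached."""
    trace = []  # every attempt is recorded so the run stays auditable
    for attempt in range(1, max_iterations + 1):
        steps = planner(objective, trace)
        outputs = [executor(step) for step in steps]
        ok, feedback = validator(objective, outputs)
        trace.append({"attempt": attempt, "steps": steps, "ok": ok, "feedback": feedback})
        if ok:
            return {"status": "completed", "result": outputs, "trace": trace}
    return {"status": "escalated", "result": None, "trace": trace}


# Toy stand-ins for LLM-backed components.
def toy_planner(objective, trace):
    steps = ["fetch_ledger"]
    if trace:  # a previous attempt failed validation, so widen the plan
        steps.append("fetch_bank_statement")
    return steps


def toy_executor(step):
    return f"{step}:done"


def toy_validator(objective, outputs):
    ok = len(outputs) >= 2
    return ok, ("" if ok else "need both data sources")


outcome = run_agent("reconcile accounts", toy_planner, toy_executor, toy_validator)
print(outcome["status"], len(outcome["trace"]))
```

Returning the full trace alongside the result is one way to keep the loop from becoming opaque: each attempt, plan, and validator verdict can be logged and reviewed.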

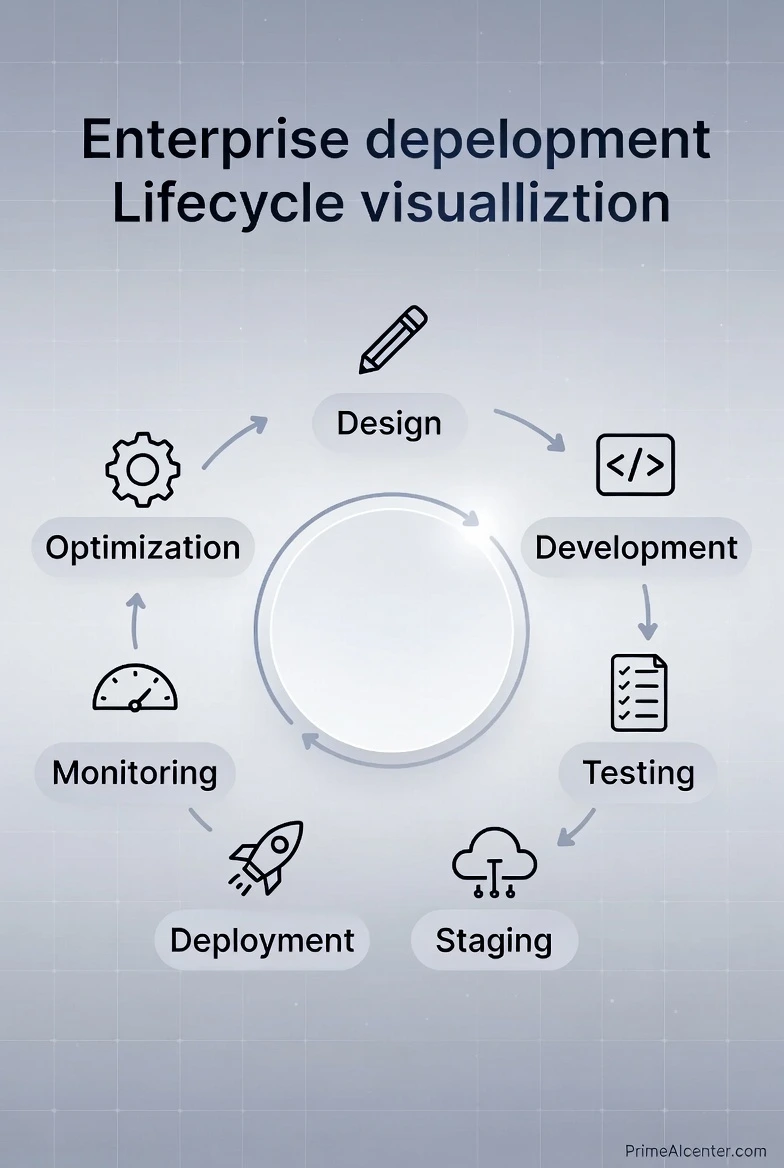

The Enterprise AI Agent Deployment Lifecycle

Deploying AI agents in an enterprise environment requires a disciplined, phased approach. Ad hoc deployments frequently fail because of security, cost, or integration issues that surface only after launch.

Step 1: Business Objective and ROI Definition

Every deployment must begin with a clearly defined business objective and a measurable ROI target. Each agent should be mapped to revenue impact, cost reduction, risk mitigation, or productivity gains.

Step 2: Data Readiness and Governance

Data governance is a prerequisite, not an afterthought. Enterprises must define which data sources agents can access, under what conditions, and with what audit trails.

Step 3: Architecture Design and Tool Selection

This phase includes selecting LLM providers, vector databases, orchestration frameworks, and integration patterns. Decisions here directly affect scalability and vendor lock-in.

Step 4: Security and Compliance by Design

Security and compliance must be embedded into the architecture. This includes identity management, prompt injection prevention, logging, and policy enforcement.

At this stage, enterprises should already be aligning with SOC 2, GDPR, and internal risk frameworks.

Step 5: Testing and Evaluation of Enterprise AI Agents

Testing is the most underestimated phase of Enterprise AI Agent Deployment. Traditional QA methodologies fail because AI agents are non-deterministic systems. The same input can produce different outputs depending on context, memory state, and retrieved knowledge. Enterprises must adopt probabilistic and behavior-based evaluation frameworks.

Testing must operate across three dimensions:

- Model-level correctness and reasoning quality

- Retrieval quality and grounding accuracy

- System-level behavior under real-world conditions

RAG Evaluation with RAGAS

RAGAS (Retrieval-Augmented Generation Assessment) has emerged as a de facto evaluation framework for RAG-based systems. It focuses on measuring whether responses are grounded in retrieved data rather than hallucinated.

Key RAGAS metrics include:

- Context Precision: Measures whether retrieved documents are relevant to the query.

- Context Recall: Evaluates whether all necessary information was retrieved.

- Faithfulness: Assesses whether the generated answer is supported by the retrieved context.

- Answer Relevancy: Determines alignment between the response and the user intent.

In enterprise environments, these metrics must be extended with domain-specific validators. For example, in finance, numeric consistency and reconciliation accuracy must be explicitly checked. In healthcare, clinical guideline alignment is mandatory.
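The sketch below shows the intuition behind the retrieval metrics and a finance-style numeric validator. It is a deliberately simplified, set-based analogue and not the RAGAS library's API, whose metrics rely on LLM judgments and ranking. The function names and the labeled relevance sets are assumptions.

```python
import re


def context_precision(retrieved_ids, relevant_ids):
    """Share of retrieved documents that are actually relevant."""
    if not retrieved_ids:
        return 0.0
    hits = sum(1 for doc_id in retrieved_ids if doc_id in relevant_ids)
    return hits / len(retrieved_ids)


def context_recall(retrieved_ids, relevant_ids):
    """Share of relevant documents that were retrieved."""
    if not relevant_ids:
        return 1.0
    return len(set(retrieved_ids) & set(relevant_ids)) / len(relevant_ids)


def numbers_grounded(answer, context):
    """Domain validator: every number in the answer must appear in the context."""
    pattern = r"\d+(?:\.\d+)?"
    return set(re.findall(pattern, answer)) <= set(re.findall(pattern, context))


retrieved = ["d1", "d2", "d3", "d4"]
relevant = {"d1", "d3", "d5"}
precision = context_precision(retrieved, relevant)
recall = context_recall(retrieved, relevant)

context = "Ledger shows variance of 1200.50 for March"
grounded = numbers_grounded("Net variance is 1200.50", context)
ungrounded = numbers_grounded("Net variance is 1300", context)
print(precision, round(recall, 2), grounded, ungrounded)
```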

Scenario-Based and Adversarial Testing

Beyond static test sets, enterprises should implement scenario-based testing. This involves simulating realistic workflows where agents operate over multiple steps, tools, and data sources.

Adversarial testing is equally critical. This includes:

- Prompt injection prevention validation

- Unauthorized data access attempts

- Tool misuse and escalation scenarios

These tests should be automated and continuously executed as part of the deployment pipeline. Manual evaluation does not scale and introduces unacceptable risk.

Step 6: CI/CD for AI Agents and LLMOps

LLMOps extends traditional DevOps and MLOps to accommodate large language models and agentic workflows. In Enterprise AI Agent Deployment, CI/CD pipelines must handle not only code changes but also prompts, retrieval configurations, and orchestration logic.

Versioning Across the AI Stack

Enterprises must version the following artifacts independently:

- Prompt templates and system instructions

- Embedding models and vector indices

- Agent policies and tool permissions

- Evaluation datasets and benchmarks

Failure to version these components leads to irreproducible behavior and audit gaps. In regulated industries, this is unacceptable.
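One way to make independent versioning auditable is to fingerprint each artifact and derive a release identifier from the set. This is a sketch under assumptions: the artifact names and contents are hypothetical, and real pipelines would usually store these hashes in a model or artifact registry.

```python
import hashlib
import json


def fingerprint(artifact) -> str:
    """Stable short hash of any JSON-serializable artifact."""
    canonical = json.dumps(artifact, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def build_manifest(artifacts: dict) -> dict:
    """Version each artifact on its own, then hash the set into a release id."""
    versions = {name: fingerprint(content) for name, content in sorted(artifacts.items())}
    return {"artifacts": versions, "release_id": fingerprint(versions)}


# Hypothetical artifacts for a reconciliation agent.
ARTIFACTS = {
    "prompt_template": "You are a reconciliation agent.",
    "embedding_model": {"name": "example-embedder", "dimensions": 768},
    "tool_permissions": {"ledger_api": ["read"]},
    "eval_dataset": ["case-001", "case-002"],
}

baseline = build_manifest(ARTIFACTS)
changed = build_manifest({**ARTIFACTS, "prompt_template": "You are a cautious reconciliation agent."})
print(baseline["release_id"], changed["release_id"])
```

Because each artifact has its own fingerprint, a diff between two manifests shows exactly which component changed between releases.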

Automated Deployment Pipelines

A mature LLMOps pipeline includes:

- Pre-deployment evaluation gates using RAGAS and custom metrics

- Canary deployments for new agent versions

- Rollback mechanisms based on behavior thresholds

- Environment parity between staging and production

Unlike traditional software, rolling back an AI agent may involve reverting vector indexes, prompt versions, or orchestration graphs. CI/CD systems must be designed with this complexity in mind.
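A pre-deployment gate and a canary decision can be reduced to two small checks, sketched below. The metric names, thresholds, and the `max_regression` tolerance are illustrative assumptions rather than recommended values.

```python
def evaluation_gate(metrics, thresholds):
    """Return (passed, failures); each failure maps metric -> (observed, required)."""
    failures = {
        name: (metrics.get(name, 0.0), minimum)
        for name, minimum in thresholds.items()
        if metrics.get(name, 0.0) < minimum
    }
    return (not failures), failures


def canary_decision(baseline_error_rate, canary_error_rate, max_regression=0.02):
    """Roll back when the canary regresses beyond the tolerated margin."""
    if canary_error_rate - baseline_error_rate > max_regression:
        return "rollback"
    return "promote"


THRESHOLDS = {"faithfulness": 0.90, "context_recall": 0.80}
passed, failures = evaluation_gate({"faithfulness": 0.93, "context_recall": 0.74}, THRESHOLDS)
print(passed, failures)
print(canary_decision(0.01, 0.05), canary_decision(0.01, 0.02))
```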

Cost-Aware Deployment Controls

LLMOps must incorporate cost telemetry. Token usage, retrieval overhead, and tool invocation frequency should be monitored during deployment. Cost anomalies are often early indicators of logic loops or runaway agents.

Enterprises that ignore cost controls during CI/CD often discover negative ROI after full-scale rollout.
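A per-task budget is one simple way to turn cost telemetry into a control. The sketch below assumes a hypothetical `TaskBudget` class and arbitrary limits; the loop simulates a runaway agent that would otherwise keep consuming tokens.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a task exceeds its token or tool-call budget."""


@dataclass
class TaskBudget:
    max_tokens: int
    max_tool_calls: int
    tokens_used: int = 0
    tool_calls: int = 0

    def record(self, tokens=0, tool_calls=0):
        """Accumulate usage and halt the task once a limit is crossed."""
        self.tokens_used += tokens
        self.tool_calls += tool_calls
        if self.tokens_used > self.max_tokens:
            raise BudgetExceeded(f"token budget exceeded: {self.tokens_used}/{self.max_tokens}")
        if self.tool_calls > self.max_tool_calls:
            raise BudgetExceeded(f"tool-call budget exceeded: {self.tool_calls}/{self.max_tool_calls}")


budget = TaskBudget(max_tokens=1000, max_tool_calls=10)
completed_steps = 0
halt_reason = None
try:
    for _ in range(100):  # simulated logic loop that never converges
        budget.record(tokens=400, tool_calls=1)
        completed_steps += 1
except BudgetExceeded as exc:
    halt_reason = str(exc)

print(completed_steps, halt_reason)
```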

Step 7: Monitoring, Observability, and Feedback Loops

Once deployed, enterprise AI agents require continuous monitoring. Observability must extend beyond uptime and latency to include behavioral and semantic metrics.

Operational Monitoring

Core operational metrics include:

- End-to-end latency across reasoning loops

- API integration success and failure rates

- Vector retrieval latency and hit rates

- Token consumption per task

These metrics feed into scalability planning and infrastructure optimization. Enterprises must anticipate usage spikes and ensure elastic scaling without degrading performance.

Behavioral and Risk Monitoring

Behavioral monitoring focuses on what agents do, not just how fast they do it. This includes:

- Deviation from expected action patterns

- Repeated correction loops indicating reasoning failure

- Unauthorized tool access attempts

- Data leakage or policy violations

These signals are essential for security compliance and operational trust. In many enterprises, AI agents are granted privileges similar to service accounts. Their behavior must be auditable and explainable.
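Two of the signals above, unauthorized tool attempts and repeated correction loops, can be derived from an agent's event log. The event schema and the `max_retries` threshold below are assumptions for illustration; a real deployment would read these events from its tracing or logging backend.

```python
from collections import Counter


def behavior_alerts(events, allowed_tools, max_retries=3):
    """Scan an ordered event log and return human-readable alerts."""
    alerts = []
    retries = Counter()
    for event in events:
        if event["type"] == "tool_call" and event["tool"] not in allowed_tools:
            alerts.append(f"unauthorized tool attempt: {event['tool']}")
        elif event["type"] == "correction":
            retries[event["task_id"]] += 1
            if retries[event["task_id"]] == max_retries:  # alert once per task
                alerts.append(f"correction loop on task {event['task_id']}")
    return alerts


EVENTS = [
    {"type": "tool_call", "tool": "read_ledger"},
    {"type": "correction", "task_id": "t1"},
    {"type": "correction", "task_id": "t1"},
    {"type": "tool_call", "tool": "delete_records"},
    {"type": "correction", "task_id": "t1"},
]

alerts = behavior_alerts(EVENTS, allowed_tools={"read_ledger", "flag_anomaly"})
print(alerts)
```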

Human-in-the-Loop Feedback Systems

Despite high autonomy, enterprise AI agents should never be fully detached from human oversight. Feedback loops enable continuous improvement and risk mitigation.

Effective feedback mechanisms include:

- Inline approval workflows for high-impact actions

- User feedback tagging for incorrect or suboptimal outcomes

- Automated retraining or prompt refinement triggers

Feedback data should be treated as a first-class asset. It informs future deployments, improves reasoning quality, and strengthens long-term ROI of AI investments.
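An inline approval workflow often starts as a routing rule in front of the executor. The following sketch assumes hypothetical action kinds and an arbitrary monetary threshold; what counts as high impact is a policy decision for each enterprise.

```python
# Hypothetical action kinds that always require a human decision.
HIGH_IMPACT_KINDS = {"payment", "record_deletion", "access_grant"}


def route_action(action, amount_threshold=10_000):
    """Send high-impact actions to a human queue; let the rest run automatically."""
    if action["kind"] in HIGH_IMPACT_KINDS or action.get("amount", 0) >= amount_threshold:
        return "needs_human_approval"
    return "auto_execute"


print(route_action({"kind": "report_draft"}))
print(route_action({"kind": "payment", "amount": 50}))
print(route_action({"kind": "stock_transfer", "amount": 25_000}))
```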

At this stage, Enterprise AI Agent Deployment transitions from a project to a living system. Enterprises that succeed treat AI agents as evolving digital workers, governed with the same rigor as human-operated processes.

Security Governance & Prompt Injection Prevention in Enterprise AI Agent Deployment

Security is the single largest blocker to large-scale Enterprise AI Agent Deployment. Unlike traditional software systems, AI agents operate on natural language inputs, reason probabilistically, and invoke tools autonomously. This dramatically expands the attack surface. As a result, enterprises must implement a dedicated AI security governance layer rather than relying solely on existing application security controls.

Security governance for AI agents must be proactive, layered, and continuously enforced. It spans data protection, access control, model behavior constraints, and runtime monitoring. Treating AI agents as “just another microservice” is a structural mistake that leads to data leakage, privilege escalation, and compliance failures.

Prompt Injection as a First-Class Enterprise Threat

Prompt injection is the most common and most misunderstood vulnerability in agentic systems. Unlike SQL injection, prompt injection targets the reasoning layer of the system rather than a parser. Attackers manipulate natural language inputs to override system instructions, extract sensitive data, or force unauthorized actions.

In enterprise environments, prompt injection risks are amplified because agents often have access to internal APIs, proprietary data, and privileged workflows. A successful attack can result in:

- Unauthorized disclosure of PII or confidential documents

- Execution of restricted API actions

- Bypassing compliance or approval workflows

- Corruption of downstream business processes

Prompt injection prevention must therefore be enforced at multiple layers, not just at the prompt template level.

OWASP Top 10 for LLMs: Enterprise Relevance

The OWASP Top 10 for LLM Applications provides a practical framework for threat modeling AI systems. Enterprises deploying AI agents should explicitly map controls to these risk categories rather than treating them as theoretical concerns.

Key OWASP LLM risks relevant to enterprise agents include the following. Numbering follows the 2023 (v1.1) list; the 2025 revision renames and renumbers several entries.

- LLM01: Prompt Injection – Direct and indirect instruction hijacking.

- LLM02: Insecure Output Handling – Blindly trusting model outputs for execution.

- LLM03: Training Data Poisoning – Corrupted training or fine-tuning data; in RAG systems, poisoned embeddings or vector stores pose a comparable risk.

- LLM08: Excessive Agency – Granting agents overly broad permissions.

- LLM09: Overreliance – Removing human oversight for high-risk actions.

Each of these risks maps directly to architectural and governance decisions. For example, excessive agency is not a model flaw. It is an authorization failure.

Role-Based Access Control (RBAC) for AI Agents

Role-Based Access Control is the cornerstone of enterprise AI governance. AI agents must never operate with blanket access. Instead, they should be treated as digital identities with tightly scoped roles.

Effective RBAC for AI agents includes:

- Distinct service identities per agent or agent class

- Fine-grained permissions for API integration

- Separation of read, write, and execute privileges

- Context-aware access based on task and data sensitivity

RBAC policies must be enforced outside the LLM. The model should request actions, but enforcement should occur at the orchestration or API gateway layer. This ensures that even if an agent is manipulated via prompt injection, it cannot exceed its assigned authority.

In mature deployments, enterprises implement dynamic RBAC, where permissions are granted temporarily and revoked automatically after task completion.
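The sketch below illustrates enforcement outside the model, including temporary grants that expire on their own. `PolicyGateway`, the permission strings, and the agent identifiers are hypothetical; in practice this logic would live in an API gateway or policy engine backed by the enterprise identity provider.

```python
import time


class PolicyGateway:
    """Authorizes agent requests; the LLM never sees or edits these rules."""

    def __init__(self, role_permissions):
        self._role_permissions = role_permissions
        self._temp_grants = {}  # (agent_id, permission) -> expiry timestamp

    def grant_temporary(self, agent_id, permission, ttl_seconds, now=None):
        """Grant a permission that lapses automatically after ttl_seconds."""
        now = time.time() if now is None else now
        self._temp_grants[(agent_id, permission)] = now + ttl_seconds

    def is_allowed(self, agent_id, role, permission, now=None):
        """Check standing role permissions first, then unexpired temporary grants."""
        now = time.time() if now is None else now
        if permission in self._role_permissions.get(role, set()):
            return True
        expiry = self._temp_grants.get((agent_id, permission))
        return expiry is not None and now < expiry


gateway = PolicyGateway({"auditor": {"ledger:read"}})
gateway.grant_temporary("agent-7", "ledger:annotate", ttl_seconds=60, now=0)

print(gateway.is_allowed("agent-7", "auditor", "ledger:read", now=0))
print(gateway.is_allowed("agent-7", "auditor", "ledger:annotate", now=30))
print(gateway.is_allowed("agent-7", "auditor", "ledger:annotate", now=61))
```

The `now` parameter exists only to keep the example deterministic. Even if injected text convinces the model to request `ledger:write`, the gateway answers from its own policy table, not from the prompt.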

PII Masking and Data Protection by Design

Personally Identifiable Information (PII) represents a critical compliance risk under regulations such as GDPR, HIPAA, and regional data protection laws. AI agents must be architected to minimize exposure to sensitive data.

PII masking strategies typically operate at multiple stages:

- Pre-retrieval masking: Sensitive fields are redacted before embedding or retrieval.

- In-flight masking: Data is anonymized before being sent to the LLM.

- Post-generation validation: Outputs are scanned to prevent leakage.

Masking should be reversible only when strictly required and only within controlled execution contexts. Logs, prompts, and evaluation datasets must never store raw PII by default.

From a governance perspective, this aligns with data minimization principles and significantly reduces breach impact.
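In-flight masking and post-generation validation can be sketched with pattern-based redaction. The two regular expressions below are illustrative assumptions that cover only email addresses and US-style SSNs; production systems typically combine broader pattern sets with dedicated PII detection services.

```python
import re

PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask_pii(text):
    """Replace PII with placeholder tokens; return masked text and a lookup vault."""
    vault = {}  # token -> original value; keep only inside a controlled context
    counter = 0

    def make_replacer(label):
        def _replace(match):
            nonlocal counter
            counter += 1
            token = f"<{label}_{counter}>"
            vault[token] = match.group(0)
            return token
        return _replace

    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(make_replacer(label), text)
    return text, vault


def contains_pii(text):
    """Post-generation check: does any known PII pattern appear in the text?"""
    return any(pattern.search(text) for pattern in PII_PATTERNS.values())


masked, vault = mask_pii("Contact jane.doe@example.com, SSN 123-45-6789.")
print(masked)
print(contains_pii(masked))
```

The vault is what makes masking reversible. Keeping it out of logs, prompts, and evaluation datasets is what keeps those stores free of raw PII.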

Guardrails, Policies, and Runtime Enforcement

Guardrails define what an AI agent is allowed to do, say, and access. In enterprise systems, guardrails must be enforced programmatically rather than relying on prompt wording alone.

Common guardrail mechanisms include:

- Policy engines that validate actions before execution

- Schema validation for structured outputs

- Allowlists for tool and API usage

- Content filters for sensitive topics or data types

Runtime enforcement is critical. Policies must be evaluated at every reasoning step, especially in multi-agent systems where delegation occurs.
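A programmatic guardrail can combine a tool allowlist with schema validation of the model's proposed action, as in this sketch. The tool names, argument schemas, and JSON action format are assumptions; the point is that the check runs in code before anything executes.

```python
import json

# Hypothetical allowlist: tool name -> required arguments and their types.
TOOL_SCHEMAS = {
    "create_ticket": {"title": str, "priority": int},
    "lookup_invoice": {"invoice_id": str},
}


def validate_action(raw_model_output):
    """Return (allowed, reason) for a JSON action proposed by the model."""
    try:
        action = json.loads(raw_model_output)
    except json.JSONDecodeError:
        return False, "output is not valid JSON"
    if not isinstance(action, dict):
        return False, "output is not a JSON object"
    tool = action.get("tool")
    if tool not in TOOL_SCHEMAS:
        return False, f"tool not on allowlist: {tool}"
    args = action.get("args", {})
    schema = TOOL_SCHEMAS[tool]
    if not isinstance(args, dict) or set(args) != set(schema):
        return False, "unexpected or missing arguments"
    for name, expected_type in schema.items():
        if not isinstance(args[name], expected_type):
            return False, f"argument {name} has wrong type"
    return True, "ok"


print(validate_action('{"tool": "create_ticket", "args": {"title": "Late invoice", "priority": 2}}'))
print(validate_action('{"tool": "drop_table", "args": {}}'))
print(validate_action("Sure! I will delete everything."))
```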

In well-governed Enterprise AI Agent Deployment architectures, security is not a bolt-on feature. It is embedded into identity, data flows, orchestration logic, and operational monitoring. Enterprises that treat AI agents as privileged actors, governed by explicit policy and continuous oversight, are the ones that achieve scalability without sacrificing trust.

Industry-Specific Use Cases for Enterprise AI Agents

The true test of Enterprise AI Agent Deployment is not architectural elegance, but measurable impact on core business functions. The following use cases illustrate how agentic systems move beyond experimentation and into mission-critical operations across regulated and high-complexity industries.

Finance: Automated Auditing and Continuous Assurance

In financial services, auditing is traditionally periodic, manual, and resource-intensive. Enterprise AI agents enable a shift toward continuous, automated auditing with real-time anomaly detection.

An automated auditing agent typically operates within a tightly governed RAG Architecture, connected to:

- General ledger systems

- Transaction processing platforms

- Regulatory rule repositories

- Historical audit findings

The agent continuously monitors transactions, reconciles entries, and flags deviations from expected patterns. When anomalies are detected, the agent generates explainable audit trails, including source data references and reasoning steps.

Crucially, execution privileges are limited. The agent cannot modify financial records. It can only recommend actions, escalating issues through predefined approval workflows. This balance between autonomy and control is central to compliant enterprise deployments.

Healthcare: AI-Driven Patient Triage

Healthcare environments demand extreme accuracy, privacy, and explainability. AI agents deployed for patient triage operate as decision-support systems rather than autonomous decision-makers.

A patient triage agent integrates:

- Electronic Health Records (EHR)

- Clinical guidelines and protocols

- Symptom intake interfaces

- Scheduling and care coordination systems

Using structured reasoning loops, the agent assesses symptoms, prioritizes cases, and recommends next steps. PII masking and strict RBAC ensure that sensitive data is exposed only when necessary.

The agent’s output is always reviewed by a clinician before action is taken. This human-in-the-loop model reduces clinician burnout while preserving clinical accountability.

Retail: Inventory Prediction and Autonomous Rebalancing

Retail inventory management is a classic example of a dynamic, multi-variable optimization problem. AI agents excel in this environment due to their ability to ingest real-time signals and adapt continuously.

Inventory prediction agents consume data from:

- Point-of-sale systems

- Supply chain logistics platforms

- Seasonal demand models

- External signals such as weather or promotions

The agent forecasts demand, recommends stock transfers, and triggers replenishment workflows via API Integration. Over time, feedback loops improve accuracy and reduce overstock and stockout scenarios.

The Rise of Multi-Agent Systems in the Enterprise

As enterprise use cases grow in complexity, single-agent architectures reach their limits. Multi-agent Systems (MAS) address this by decomposing responsibilities across specialized agents.

Manager-Worker Architecture

The most common enterprise MAS pattern is the Manager-Worker model:

- Manager Agent: Interprets objectives, plans tasks, and allocates work.

- Worker Agents: Execute specialized tasks such as data retrieval, analysis, or API interaction.

- Validator Agents: Review outputs for accuracy, compliance, and policy alignment.

This architecture mirrors traditional organizational structures and improves both scalability and fault tolerance. If a worker agent fails or produces low-confidence results, the manager can reassign the task or escalate to human oversight.

However, MAS deployments demand robust AI Agent Orchestration. Without centralized observability and policy enforcement, agent collaboration can become opaque and risky.
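The reassignment and escalation behavior of the Manager-Worker model can be sketched as a dispatcher that tries workers in order. The worker functions, the confidence field, and the `min_confidence` threshold are hypothetical; real workers would be LLM-backed agents reporting through an orchestration layer.

```python
def dispatch(task, workers, validator, min_confidence=0.7):
    """Try workers in order; reassign on failure, escalate if none succeed."""
    attempts = []  # audit trail of who tried and what happened
    for name, worker in workers:
        try:
            result = worker(task)
        except Exception as exc:
            attempts.append((name, f"failed: {exc}"))
            continue
        if result["confidence"] < min_confidence:
            attempts.append((name, "low confidence"))
            continue
        if not validator(result):
            attempts.append((name, "rejected by validator"))
            continue
        attempts.append((name, "accepted"))
        return {"status": "done", "answer": result["answer"], "attempts": attempts}
    return {"status": "escalated_to_human", "answer": None, "attempts": attempts}


def flaky_worker(task):
    raise TimeoutError("upstream API timed out")


def unsure_worker(task):
    return {"answer": "maybe 42 units", "confidence": 0.4}


def solid_worker(task):
    return {"answer": "transfer 40 units to store 12", "confidence": 0.9}


WORKERS = [("flaky", flaky_worker), ("unsure", unsure_worker), ("solid", solid_worker)]
outcome = dispatch("rebalance stock", WORKERS, validator=lambda r: "transfer" in r["answer"])
print(outcome["status"], outcome["attempts"])
```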

Calculating the ROI of AI Agent Deployment

Measuring the ROI of AI agents requires moving beyond simplistic cost-per-token calculations. Enterprise value emerges from structural efficiency gains rather than isolated productivity improvements.

Time-to-Value (TTV)

Time-to-Value measures how quickly an AI agent delivers measurable business outcomes after deployment. Shorter TTV indicates effective alignment between use case selection, data readiness, and system integration.

Enterprises that invest upfront in governance and architecture tend to reach value faster than those pursuing rapid pilots without structure, because they avoid rework once security, data access, and integration requirements surface.

Human-Capital Redistribution

One of the most overlooked ROI metrics is human-capital redistribution. AI agents do not simply replace tasks; they reallocate human effort toward higher-value activities.

Examples include:

- Auditors focusing on judgment rather than data sampling

- Clinicians spending more time on patient care

- Planners shifting from reactive to strategic decision-making

When measured correctly, this redistribution often exceeds direct cost savings in long-term value.

Conclusion: From Tools to Trusted Digital Workers

Enterprise AI agents represent a fundamental shift in how organizations operate. They are not features or add-ons. They are persistent, autonomous actors embedded within the enterprise fabric.

Successful Enterprise AI Agent Deployment demands architectural discipline, security-first thinking, and a clear link to business outcomes. Enterprises that approach agents as governed digital workers, rather than experimental chatbots, will define the next decade of operational excellence.

FAQ: Enterprise AI Agent Deployment

Is it better to use open-source or proprietary models for enterprise agents?

The choice depends on data sensitivity, customization needs, and compliance requirements. Proprietary models often offer better support and security assurances, while open-source models provide transparency and deployment flexibility.

How can hallucination be prevented in production systems?

Hallucination is mitigated through RAG Architecture, strict output validation, and domain-specific evaluation frameworks. Agents should never operate without grounding in authoritative data sources.

How do enterprises ensure compliance with data protection regulations?

Compliance is achieved through data governance, PII masking, audit logging, and role-based access enforcement at every layer of the system.

What is the role of humans once AI agents are deployed?

Humans remain responsible for oversight, exception handling, and strategic decision-making. AI agents augment, not eliminate, human accountability.

How scalable are multi-agent systems?

When properly orchestrated, MAS architectures scale horizontally. However, scalability depends on cost controls, coordination logic, and observability.

How are AI agents integrated with legacy systems?

Integration is achieved through controlled API layers that sit between agents and legacy systems, avoiding direct coupling to legacy interfaces whenever possible.

What are the biggest operational risks?

The most common risks include excessive agent permissions, lack of monitoring, and poorly governed data access.
