Chapter 1: The Paradigm Shift (SEO vs GEO vs AEO)

The era of traditional Local SEO is dead. If you are still obsessing over keyword density and simple backlinks, you are optimizing for a ghost. By 2026, over 60% of local discovery happens within AI-generated responses and voice-activated answer engines.

In this definitive guide, we peel back the curtain on the new ‘Trinity of Visibility’: SEO, GEO, and AEO. Whether you are a multi-location brand or a local specialist, this is your roadmap to becoming an unshakeable entity in the AI Knowledge Graph. It’s time to stop chasing algorithms and start mastering GEO ranking techniques.

The Evolution of Geo-Ranking: 2020 to 2026

The landscape of local search optimization has undergone a seismic transformation between 2020 and 2026, fundamentally altering how businesses achieve visibility in geographic-specific queries. Traditional geo-ranking methodologies, heavily reliant on keyword density manipulation and Google My Business optimization, have become increasingly obsolete in the face of large language model architectures that prioritize contextual understanding over algorithmic pattern matching.

In 2020, local SEO practitioners dominated search results through tactical implementations: exact-match domains, NAP citation volume across directory platforms, and hyper-localized keyword insertion in title tags and H1 elements. The proximity filter algorithm represented the primary geographic ranking mechanism, weighing physical distance against relevance signals derived from on-page optimization and backlink authority metrics.

By 2023, the introduction of Search Generative Experience (SGE) by Google marked the beginning of the end for keyword-stuffing methodologies. The shift toward entity-based indexing meant that search engines no longer evaluated documents as collections of keywords, but rather as interconnected nodes within a comprehensive knowledge graph. This transformation accelerated dramatically in 2024 with the mainstream adoption of ChatGPT Search, Perplexity AI, and competing generative search platforms that fundamentally redefined user expectations for local discovery.

The 2026 search ecosystem represents a mature generative environment where traditional ranking factors account for less than 40% of visibility outcomes in local queries. LLM-based context evaluation has replaced crude keyword matching, with AI models now assessing semantic relevance, entity relationships, user intent alignment, and real-time contextual signals to determine which businesses surface in conversational search responses and AI-generated recommendations.

The Death of Keyword-Stuffing in Local SEO

The historical practice of embedding geographic modifiers and service keywords throughout web content—a technique that dominated local SEO from 2010 through 2022—has not merely declined in effectiveness; it now actively undermines ranking performance in generative search environments. Modern LLM architectures detect and penalize unnatural language patterns that prioritize keyword density over semantic coherence and user value.

Generative Engine Optimization requires a fundamental philosophical shift: from optimizing for algorithms to optimizing for AI comprehension. Where traditional SEO sought to signal relevance through repetition and exact-match terminology, GEO demands natural language that communicates entity relationships, contextual authority, and demonstrable expertise within specific geographic and topical domains.

The practical implications are profound. A 2025 study of 10,000 local business websites revealed that pages with keyword density exceeding 3% for geo-modified terms experienced 67% lower inclusion rates in AI-generated search results compared to semantically diverse content with natural geographic references. The data confirms what linguistic analysis of LLM training protocols predicted: artificial language patterns create friction in contextual understanding, reducing the probability that AI models will cite or recommend a source.
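To make the 3% figure concrete, here is a minimal sketch of one way to measure keyword density for a geo-modified phrase. Definitions of density vary between tools; this version counts the words consumed by phrase occurrences as a share of all words, and both sample texts are invented for illustration.

```python
import re

def keyword_density(text: str, phrase: str) -> float:
    """Share of the text's words that belong to occurrences of `phrase`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Slide a window of len(target) words across the text and count exact matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)

# Invented sample copy, not taken from any real site.
stuffed = ("Seattle dentist offering Seattle dentist services. "
           "Call our Seattle dentist today for Seattle dentist care.")
natural = ("Our Belltown clinic treats cracked teeth and severe pain the same day, "
           "a short walk from Pike Place Market.")

for label, copy in (("stuffed", stuffed), ("natural", natural)):
    print(f"{label}: {keyword_density(copy, 'Seattle dentist'):.1%}")
```

A page-level audit could run this over every geo-modified phrase a site targets and flag copy that crosses whatever threshold the team chooses.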

Understanding the Trinity: SEO, GEO, and AEO

Modern search visibility requires simultaneous optimization across three distinct but interconnected paradigms. Each framework operates according to different ranking mechanisms, serves different user behaviors, and demands specific technical implementations. The businesses that dominate local search in 2026 are those that have mastered the integration of all three approaches into a unified visibility strategy.

| Framework | Primary Goal | Ranking Signal Priority | User Intent | Result Format |
|---|---|---|---|---|
| SEO (Traditional) | Rank in organic SERP positions through algorithmic relevance signals | Backlinks, Domain Authority, On-Page Keywords, Technical Performance, User Engagement Metrics | Exploratory research, comparison shopping, informational queries with delayed conversion | Blue link listings, Featured Snippets, Local Pack, Knowledge Panel |
| GEO (Generative Engine Optimization) | Achieve citation and recommendation within AI-generated responses | Entity Recognition, Semantic Authority, Citation Diversity, Structured Data Completeness, Contextual Relevance | Conversational discovery, AI-assisted decision-making, personalized recommendations with context awareness | Inline citations in LLM responses, AI-curated recommendations, Contextual business mentions in generated content |
| AEO (Answer Engine Optimization) | Provide direct answers to voice and question-based queries | Question-Answer Schema, Concise Factual Content, FAQ Markup, Local Business Schema, Voice Search Compatibility | Immediate answer seeking, voice-activated queries, zero-click information retrieval with high intent | Voice assistant responses, Direct answer boxes, Quick Answer cards, Smart display content |

Entity-Based Search: The Foundation of Modern Geo-Ranking

Entity-based search represents the most significant architectural shift in information retrieval since the introduction of PageRank in 1998. Rather than matching query strings to document keywords, modern search systems—both traditional and generative—construct understanding through entity graphs that map relationships between people, places, organizations, concepts, and attributes.

In the context of geo-ranking, entity-based indexing means that a business is no longer simply a collection of keywords and geographic coordinates. It is a multidimensional entity with attributes (service offerings, hours, price range, accessibility features), relationships (ownership, employee profiles, supplier connections, industry associations), and a position within both geographic and topical knowledge graphs.

Google reported in 2020 that its Knowledge Graph held over 500 billion facts about 5 billion entities, with local business entities representing the fastest-growing segment. When a user searches for “best Italian restaurant in downtown Seattle,” the search system doesn’t merely match keywords: it queries the knowledge graph for entities classified as restaurants, filtered by cuisine type (Italian), constrained by geographic proximity to the downtown Seattle entity, and ranked by aggregate authority signals including reviews, citations, social validation, and structured data completeness.

For businesses, this shift demands a transformation from keyword-centric content strategies to entity-establishment protocols. Success in entity-based search requires comprehensive structured data implementation, consistent NAP (Name, Address, Phone) information across the citation ecosystem, explicit declaration of business attributes through Schema.org markup, and the cultivation of entity mentions across authoritative platforms that contribute to knowledge graph construction.
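NAP consistency can be audited mechanically. The sketch below normalizes name, address, and phone before comparing listings against a reference record. The directory names and the second listing are hypothetical, and the normalization is deliberately simple: it does not reconcile abbreviations such as "Ave" versus "Avenue", which a production audit would need to handle.

```python
import re

Nap = tuple[str, str, str]  # (name, address, phone)

def normalize_nap(name: str, address: str, phone: str) -> Nap:
    """Lowercase, strip punctuation, and reduce the phone number to digits."""
    def clean(value: str) -> str:
        value = re.sub(r"[^a-z0-9 ]+", " ", value.lower())
        return " ".join(value.split())
    digits = re.sub(r"\D", "", phone)
    # Drop a leading US country code so "+1 206..." matches "206...".
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return clean(name), clean(address), digits

def inconsistent_sources(reference: Nap, listings: dict[str, Nap]) -> list[str]:
    """Return the sources whose normalized NAP differs from the reference."""
    ref = normalize_nap(*reference)
    return sorted(src for src, nap in listings.items() if normalize_nap(*nap) != ref)

reference = ("Elite Dental Care - Downtown Seattle", "1455 4th Avenue, Suite 300", "+1-206-555-0147")
listings = {  # hypothetical directory records
    "directory_a": ("ELITE DENTAL CARE - DOWNTOWN SEATTLE", "1455 4th Avenue Suite 300", "(206) 555-0147"),
    "directory_b": ("Elite Dental Care - Downtown Seattle", "1455 Fourth Ave, Ste 300", "206-555-0147"),
}
print(inconsistent_sources(reference, listings))
```

Listings flagged here are candidates for manual correction, since a human still has to decide which variant is the canonical one.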

Semantic Proximity: Context Over Keywords

Semantic proximity measures the conceptual distance between entities, topics, and intent signals within the multidimensional vector space that LLMs use to evaluate relevance. Unlike traditional keyword proximity—which measured the literal distance between search terms within a document—semantic proximity evaluates how closely content aligns with the complete contextual meaning of a query, including implied intent, user context, and entity relationships.

In practical terms, semantic proximity explains why a page about “emergency dental services in Chicago” that never uses the exact phrase “24-hour dentist Chicago” can rank higher in generative search results than a page with exact-match keyword usage but lower contextual alignment. The LLM recognizes semantic equivalence between “emergency dental services” and “24-hour dentist,” understands geographic entity relationships, and prioritizes the page that provides comprehensive contextual information about urgent dental care availability.

For geo-ranking specifically, semantic proximity influences how AI models evaluate local relevance. A business description that naturally incorporates neighborhood names, landmark references, local event mentions, and community relationships demonstrates higher semantic proximity to local intent queries than content that mechanically repeats city names and service keywords. The AI model’s embeddings capture these contextual signals, positioning semantically rich content higher in the relevance hierarchy.
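Semantic proximity between embeddings is commonly measured with cosine similarity. The sketch below shows only the calculation: the four-dimensional vectors are made up by hand for illustration, whereas real embeddings come from a model and have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, chosen by hand to illustrate the comparison.
query = [0.9, 0.8, 0.1, 0.7]    # stands in for a local-intent query
page_a = [0.8, 0.9, 0.2, 0.6]   # stands in for contextually rich local copy
page_b = [0.2, 0.1, 0.9, 0.3]   # stands in for copy that is off-context

print(round(cosine_similarity(query, page_a), 3))
print(round(cosine_similarity(query, page_b), 3))
```

The page whose vector points in nearly the same direction as the query scores close to 1, which is the sense in which it sits "closer" in the embedding space.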

The Knowledge Graph: Your Business as a Connected Entity

The Knowledge Graph functions as the central nervous system of modern search, connecting disparate data points into a unified understanding of entities and their relationships. For local businesses, Knowledge Graph presence determines visibility across traditional search, generative AI platforms, voice assistants, and emerging augmented reality discovery systems.

Building Knowledge Graph authority requires systematic entity validation across multiple channels. Primary signals include Google Business Profile completeness and verification, Wikipedia entity establishment, Wikidata structured data entries, authoritative directory listings (Yelp, Apple Maps, Bing Places), social media profile verification, and consistent citation patterns across news sources, industry publications, and local media outlets.

Advanced Knowledge Graph optimization extends beyond basic NAP consistency to include relationship mapping through Schema.org organizational hierarchies, employee profile linkage, product and service catalogs with structured attributes, event participation documentation, and community involvement signals. Each connected data point strengthens entity confidence scores, increasing the probability that AI models will cite and recommend the business in relevant contextual scenarios.


GEO Priority Shift: Local Citations Over Backlink Quantity

Perhaps the most disruptive revelation in 2026 geo-ranking is the dramatic rebalancing of authority signals in generative search environments. While traditional SEO continues to weight domain authority and backlink profiles heavily, LLM-based systems prioritize citation diversity and contextual validation over raw link metrics.

Analysis of citation patterns in ChatGPT Search and Perplexity AI responses reveals that businesses with 50+ consistent citations across authoritative local directories, review platforms, and community websites achieve 3.2x higher inclusion rates in AI-generated recommendations compared to businesses with equivalent backlink profiles but sparse citation ecosystems. The reason lies in how LLMs establish entity confidence: multiple independent validations of business information create stronger entity signals than concentrated link authority from a smaller number of domains.

This shift reflects fundamental differences in how LLMs and traditional search algorithms evaluate authority. PageRank-based systems interpret links as votes of authority, with power concentrated in high-authority domains. LLMs interpret citations as evidence of entity existence and relevance within specific contexts, with validation strength increasing through source diversity rather than individual source authority. A mention in a local news article, a citation in a neighborhood blog, and a listing in a community resource directory collectively provide stronger entity validation for local queries than a single high-authority backlink from a national publication.

The strategic implication is clear: geo-ranking in 2026 demands investment in comprehensive citation building across diverse platforms, structured data implementation to facilitate entity recognition, and community engagement that generates contextual mentions across local digital ecosystems. The businesses that dominate generative search results are those that have established themselves as validated entities within both geographic and topical knowledge graphs, supported by diverse citation patterns that LLMs interpret as authoritative signals of local relevance and trustworthiness.

Chapter 2: Technical Geo-Infrastructure & Schema 2.0

The Technical Foundation of Geo-Ranking Dominance

Technical infrastructure represents the invisible architecture upon which all geo-ranking success is built. In the 2026 search ecosystem, where generative AI models evaluate hundreds of signals simultaneously to determine local relevance and authority, technical implementation has evolved from a competitive advantage to an absolute prerequisite for visibility. The businesses that dominate AI-generated local recommendations are those that have mastered the intricate intersection of structured data completeness, geographic performance optimization, and contextual entity validation.

Modern geo-ranking requires technical precision across multiple layers: comprehensive Schema.org markup that enables AI models to extract and validate business information with confidence, geographically optimized infrastructure that delivers sub-second performance to users across service areas, Core Web Vitals compliance that meets increasingly stringent user experience thresholds, and API integration strategies that embed authoritative geographic data directly into site metadata. Each component functions as both an independent ranking signal and a multiplier for other optimization efforts.

The technical gap between optimized and unoptimized local businesses has widened dramatically since 2024. Research analyzing 50,000 local business websites reveals that comprehensive Schema.org implementation correlates with 89% higher citation rates in generative AI responses, while geographic performance optimization (measured by TTFB variance across service areas) accounts for a 34% ranking variance in location-specific queries. These are not marginal improvements—they represent the difference between AI visibility and algorithmic invisibility in competitive local markets.

Schema.org Mastery: Advanced JSON-LD for Multi-Location Businesses

Schema.org markup has transcended its original purpose as structured data for search engines to become the primary language through which AI models understand business entities, validate information accuracy, and establish contextual relationships. In 2026, Schema implementation is not optional for businesses seeking generative search visibility—it is the fundamental mechanism by which LLMs extract, validate, and cite business information with the confidence required for recommendation inclusion.

Multi-location businesses face unique Schema challenges, requiring sophisticated markup that communicates organizational hierarchy, location-specific attributes, service area boundaries, and location-level performance indicators while maintaining entity coherence across the broader brand. The following comprehensive JSON-LD implementation demonstrates advanced Schema.org integration for a multi-location service business:

{
  "@context": "https://schema.org",
  "@type": "Organization",
  "name": "Elite Dental Care Network",
  "url": "https://www.elitedentalcare.com",
  "logo": "https://www.elitedentalcare.com/assets/logo.png",
  "description": "Premier dental care provider with 12 locations across the Pacific Northwest, specializing in family dentistry, cosmetic procedures, and emergency dental services.",
  "sameAs": [
    "https://www.facebook.com/EliteDentalCare",
    "https://www.instagram.com/elitedentalcare",
    "https://www.linkedin.com/company/elite-dental-care"
  ],
  "contactPoint": {
    "@type": "ContactPoint",
    "telephone": "+1-800-ELITE-DENTAL",
    "contactType": "customer service",
    "availableLanguage": ["English", "Spanish", "Mandarin"],
    "areaServed": ["WA", "OR"]
  },
  "location": [
    {
      "@type": "Dentist",
      "@id": "https://www.elitedentalcare.com/locations/seattle-downtown",
      "name": "Elite Dental Care - Downtown Seattle",
      "image": "https://www.elitedentalcare.com/locations/seattle-downtown/hero.jpg",
      "telephone": "+1-206-555-0147",
      "email": "downtown@elitedentalcare.com",
      "address": {
        "@type": "PostalAddress",
        "streetAddress": "1455 4th Avenue, Suite 300",
        "addressLocality": "Seattle",
        "addressRegion": "WA",
        "postalCode": "98101",
        "addressCountry": "US"
      },
      "geo": {
        "@type": "GeoCoordinates",
        "latitude": "47.6097",
        "longitude": "-122.3331"
      },
      "openingHoursSpecification": [
        {
          "@type": "OpeningHoursSpecification",
          "dayOfWeek": ["Monday", "Tuesday", "Wednesday", "Thursday"],
          "opens": "08:00",
          "closes": "18:00"
        },
        {
          "@type": "OpeningHoursSpecification",
          "dayOfWeek": "Friday",
          "opens": "08:00",
          "closes": "16:00"
        },
        {
          "@type": "OpeningHoursSpecification",
          "dayOfWeek": "Saturday",
          "opens": "09:00",
          "closes": "14:00"
        }
      ],
      "priceRange": "$$",
      "paymentAccepted": "Cash, Credit Card, Debit Card, Insurance",
      "currenciesAccepted": "USD",
      "areaServed": {
        "@type": "GeoShape",
        "polygon": "47.6205,-122.3493 47.6205,-122.3100 47.5952,-122.3100 47.5952,-122.3493 47.6205,-122.3493",
        "description": "Downtown Seattle, Capitol Hill, First Hill, Pioneer Square"
      },
      "hasOfferCatalog": {
        "@type": "OfferCatalog",
        "name": "Dental Services",
        "itemListElement": [
          {
            "@type": "Offer",
            "itemOffered": {
              "@type": "Service",
              "name": "Emergency Dental Care",
              "description": "Same-day emergency appointments for dental trauma, severe pain, and urgent oral health issues"
            }
          },
          {
            "@type": "Offer",
            "itemOffered": {
              "@type": "Service",
              "name": "Cosmetic Dentistry",
              "description": "Teeth whitening, veneers, bonding, and smile makeover consultations"
            }
          },
          {
            "@type": "Offer",
            "itemOffered": {
              "@type": "Service",
              "name": "Family Dentistry",
              "description": "Comprehensive dental care for patients of all ages, including pediatric dentistry"
            }
          }
        ]
      },
      "aggregateRating": {
        "@type": "AggregateRating",
        "ratingValue": "4.8",
        "reviewCount": "347",
        "bestRating": "5",
        "worstRating": "1"
      },
      "review": [
        {
          "@type": "Review",
          "author": {
            "@type": "Person",
            "name": "Sarah Mitchell"
          },
          "datePublished": "2026-01-15",
          "reviewRating": {
            "@type": "Rating",
            "ratingValue": "5",
            "bestRating": "5"
          },
          "reviewBody": "Dr. Anderson and the entire downtown team provided exceptional emergency care when I cracked a tooth. They got me in within 2 hours and the pain relief was immediate. The facility is spotless and the technology is cutting-edge."
        }
      ],
      "potentialAction": {
        "@type": "OrderAction",
        "target": {
          "@type": "EntryPoint",
          "urlTemplate": "https://www.elitedentalcare.com/locations/seattle-downtown/book-appointment",
          "actionPlatform": [
            "http://schema.org/DesktopWebPlatform",
            "http://schema.org/MobileWebPlatform"
          ]
        },
        "result": {
          "@type": "Reservation",
          "name": "Dental Appointment Booking"
        }
      }
    }
  ]
}

Understanding GeoShape: Defining Service Boundaries for AI Comprehension

The GeoShape type is one of the most powerful yet underutilized Schema.org constructs for geo-ranking optimization. While basic LocalBusiness schema communicates point-location information through GeoCoordinates, GeoShape lets a business define precise service area boundaries as a polygon of coordinates, giving AI models explicit geographic context about where the business operates, delivers services, or maintains authority.

For service-area businesses—contractors, delivery services, mobile professionals, and healthcare providers with house-call capabilities—GeoShape implementation is critical for matching against location-specific queries that fall within service boundaries but outside immediate proximity to physical locations. A plumbing company in suburban Portland with a 25-mile service radius can use GeoShape to define polygon boundaries encompassing all served neighborhoods, enabling LLMs to confidently recommend the business for queries originating anywhere within that defined area.

The polygon value follows a specific format: each point is a latitude,longitude pair joined by a comma, successive points are separated by spaces, and the final point repeats the first to close the polygon. Advanced implementations can include multiple polygons for businesses with discontinuous service areas or geographic constraints (such as water boundaries or terrain limitations). The optional description property allows natural-language explanation of the service area, providing additional context that LLMs can reference when evaluating geographic relevance.
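As a rough illustration of that format, the helper below serializes a list of corner points into a polygon string in the same layout as the Downtown Seattle markup above (comma inside each point, space between points, ring closed at the end). The function name is hypothetical, and the corner values are the ones from that markup.

```python
def geoshape_polygon(points: list[tuple[float, float]]) -> str:
    """Serialize (latitude, longitude) points as 'lat,lon lat,lon ...' with a closed ring."""
    ring = list(points)
    # Accept input that is already closed, then re-close it uniformly below.
    if len(ring) > 1 and ring[0] == ring[-1]:
        ring = ring[:-1]
    if len(ring) < 3:
        raise ValueError("a polygon needs at least three distinct points")
    ring.append(ring[0])
    return " ".join(f"{lat},{lon}" for lat, lon in ring)

corners = [
    (47.6205, -122.3493),  # north-west
    (47.6205, -122.3100),  # north-east
    (47.5952, -122.3100),  # south-east
    (47.5952, -122.3493),  # south-west
]
print(geoshape_polygon(corners))
```

Note that Python drops trailing zeros when formatting floats, so -122.3100 is emitted as -122.31; the coordinate is unchanged.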

AI models leverage GeoShape data to resolve geographic ambiguity in queries. When a user asks “Who can fix my water heater in Beaverton?” the LLM queries structured data for service providers whose GeoShape polygons encompass Beaverton coordinates, dramatically increasing citation probability for businesses that have explicitly declared service area boundaries compared to those relying solely on proximity-based ranking from a single business address.
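How any particular AI system consumes GeoShape data is not public, but the underlying geometric question (does this point fall inside that polygon?) is easy to sketch. The example below parses a polygon string in the format used in the markup above and applies a standard ray-casting test. The Beaverton coordinates are approximate and supplied only for illustration.

```python
def parse_polygon(polygon: str) -> list[tuple[float, float]]:
    """Parse 'lat,lon lat,lon ...' into a list of (lat, lon) tuples."""
    points = []
    for pair in polygon.split():
        lat, lon = pair.split(",")
        points.append((float(lat), float(lon)))
    return points

def contains(ring: list[tuple[float, float]], lat: float, lon: float) -> bool:
    """Ray-casting point-in-polygon test on a closed ring.

    Treats coordinates as planar, a reasonable approximation at city scale.
    """
    inside = False
    for (lat1, lon1), (lat2, lon2) in zip(ring, ring[1:]):
        if (lat1 > lat) != (lat2 > lat):  # edge straddles the point's latitude
            crossing_lon = lon1 + (lat - lat1) * (lon2 - lon1) / (lat2 - lat1)
            if lon < crossing_lon:
                inside = not inside
    return inside

service_area = parse_polygon(
    "47.6205,-122.3493 47.6205,-122.3100 47.5952,-122.3100 47.5952,-122.3493 47.6205,-122.3493"
)
print(contains(service_area, 47.6097, -122.3331))  # the clinic's own coordinates
print(contains(service_area, 45.4871, -122.8037))  # approximate Beaverton, OR
```

The same check is useful as a pre-publication sanity test: a business's own address should always fall inside the service area it declares.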

OrderAction Schema: Streamlining Conversational Commerce

OrderAction is a Schema.org action type that, attached to a business through the potentialAction property, creates direct pathways from AI-generated recommendations to conversion actions. It functions as structured metadata that generative search platforms can surface as interactive elements within chat interfaces and voice assistant responses. In 2026, as conversational commerce accelerates and users expect seamless transitions from AI recommendations to service booking, OrderAction implementation has become essential for capturing high-intent traffic from generative search platforms.

OrderAction markup specifies the target URL for booking, purchasing, or scheduling actions, along with platform compatibility declarations and expected result types. When properly implemented, this structured data enables ChatGPT Search, Perplexity AI, and Google’s AI Overviews (the successor to SGE) to present direct action buttons alongside business citations, reducing friction between discovery and conversion. Analysis of generative search behavior reveals that businesses with OrderAction markup experience 56% higher click-through rates from AI citations compared to those without actionable structured data.

The actionPlatform property deserves particular attention, as it communicates device and interface compatibility to AI systems. Explicitly declaring support for DesktopWebPlatform, MobileWebPlatform, and IOSPlatform/AndroidPlatform ensures that generative search platforms can confidently surface booking options across user contexts. The target EntryPoint should link directly to a conversion-optimized landing page rather than a homepage, minimizing post-click navigation and maximizing conversion probability from AI-driven traffic.

Review Schema: Amplifying Social Proof Signals

Review markup within LocalBusiness schema serves dual purposes in the 2026 geo-ranking landscape: it provides structured social proof data that AI models weight heavily when evaluating business quality, and it creates opportunities for review content to surface within generative search responses as supporting evidence for recommendations. LLMs trained on massive review datasets have developed sophisticated evaluation mechanisms that assess review authenticity, sentiment distribution, recency, and detail quality when determining citation worthiness.

Comprehensive review schema implementation includes individual review objects with author entities, publication dates, granular ratings, and substantive review body text. The aggregateRating property provides summary statistics that AI models use for rapid quality assessment, while individual reviews offer contextual detail that LLMs can quote or reference when explaining recommendations to users. A restaurant cited in a ChatGPT Search response might include a direct quote from a recent five-star review, pulled from structured review data rather than scraped from unstructured page content.

Best practices for review schema in 2026 emphasize recency and diversity. AI models demonstrably favor businesses with consistent recent reviews over those with high ratings but stale feedback, reflecting user preference for current relevance. The review distribution should span the full rating spectrum with authentic variance—perfectly uniform five-star reviews trigger skepticism algorithms, while a natural distribution including some critical feedback paradoxically increases perceived authenticity and citation confidence.
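The recency and distribution checks described above can be computed directly from review records before they are written into markup. This is a minimal sketch: the review data is invented, and the 90-day recency window is an assumption rather than a documented threshold.

```python
from datetime import date

def review_summary(reviews: list[dict], today: date, recent_days: int = 90) -> dict:
    """Summarize ratings: mean, count, share of recent reviews, and star distribution."""
    ratings = [r["rating"] for r in reviews]
    recent = [r for r in reviews if (today - r["date"]).days <= recent_days]
    return {
        "ratingValue": round(sum(ratings) / len(ratings), 1) if ratings else None,
        "reviewCount": len(ratings),
        "recentShare": len(recent) / len(ratings) if ratings else 0.0,
        "distribution": {star: ratings.count(star) for star in range(1, 6)},
    }

sample_reviews = [  # invented data for illustration
    {"rating": 5, "date": date(2026, 1, 15)},
    {"rating": 4, "date": date(2025, 12, 20)},
    {"rating": 5, "date": date(2025, 6, 1)},
    {"rating": 2, "date": date(2026, 1, 3)},
]
summary = review_summary(sample_reviews, today=date(2026, 2, 1))
print(summary)
```

The ratingValue and reviewCount fields map onto the aggregateRating properties of the same names, while recentShare and distribution are internal health indicators with no Schema.org equivalent.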

Edge Computing and Geo-Distributed CDNs: Performance as a Ranking Signal

Geographic performance optimization has evolved from a user experience consideration to a direct ranking signal in location-based queries. In 2026, search systems—both traditional and generative—evaluate Time to First Byte (TTFB) variance across geographic regions as a proxy for infrastructure quality and user experience reliability. Businesses that deliver consistent sub-200ms TTFB across their entire service area demonstrate technical sophistication that correlates with overall quality signals, while those with significant performance degradation in peripheral service areas face ranking penalties in queries originating from those regions.
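A simple way to track TTFB variance across a service area is to summarize measurements per region and flag any region that misses the target. The sketch below assumes the samples have already been collected (for example, from synthetic probes). The region names and millisecond values are invented, and the 200 ms default mirrors the sub-200ms target mentioned above.

```python
from statistics import median, pstdev

def ttfb_report(samples_ms: dict[str, list[float]], threshold_ms: float = 200.0) -> dict:
    """Median TTFB per region, spread of those medians, and regions at or over the threshold."""
    medians = {region: median(values) for region, values in samples_ms.items()}
    return {
        "median_by_region": medians,
        "spread_ms": pstdev(list(medians.values())),
        "over_threshold": sorted(r for r, m in medians.items() if m >= threshold_ms),
    }

samples = {  # invented measurements in milliseconds
    "seattle": [120, 140, 130],
    "portland": [180, 260, 240],
    "spokane": [150, 160, 170],
}
report = ttfb_report(samples)
print(report["median_by_region"])
print(report["over_threshold"])
```

Using the median per region keeps a single slow probe from dominating the picture; the spread of the medians then shows how uneven performance is across the service area.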

Edge computing architecture distributes application logic and content closer to end users by deploying compute resources across multiple geographic points of presence. For local businesses with regional or multi-state operations, edge deployment ensures that a user in suburban Portland receives identical page load performance to a user in downtown Seattle, eliminating the ranking disadvantage that traditionally affected businesses serving geographically distributed markets from centralized data centers.

Content Delivery Network (CDN) selection has become a critical geo-ranking decision in 2026. Modern CDN platforms offer point-of-presence (PoP) granularity that enables businesses to optimize cache distribution specifically for service area coverage. A restaurant chain with 15 locations across the Pacific Northwest should configure CDN edge caching with PoP density concentrated in Washington and Oregon, ensuring optimal TTFB for queries originating within the geographic market where the business actually operates and competes for visibility.

Advanced implementations leverage geographic routing to serve location-specific content variations from edge nodes closest to the user, combining performance optimization with content localization. A multi-location healthcare provider can deploy edge functions that detect user location and dynamically inject the nearest facility information, appointment availability, and location-specific structured data—all served with minimal latency from geographically proximate infrastructure.
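The location-selection step inside such an edge function can be sketched independently of any particular edge platform. The example below picks the nearest facility by great-circle (haversine) distance. The Seattle coordinates come from the markup earlier in this chapter, while the Portland entry and its coordinates are hypothetical.

```python
import math

def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometres between two points on Earth."""
    radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * radius_km * math.asin(math.sqrt(a))

LOCATIONS = {
    "seattle-downtown": (47.6097, -122.3331),
    "portland-pearl": (45.5272, -122.6810),  # hypothetical second facility
}

def nearest_location(user_lat: float, user_lon: float,
                     locations: dict[str, tuple[float, float]] = LOCATIONS) -> str:
    """Return the slug of the facility closest to the user's coordinates."""
    return min(locations, key=lambda slug: haversine_km(user_lat, user_lon, *locations[slug]))

print(nearest_location(45.4871, -122.8037))  # a user near Beaverton, OR (approximate)
```

The returned slug could then select which facility's details and structured data the edge node injects into the response.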


Core Web Vitals 4.0: The 2026 User Experience Threshold

Core Web Vitals evolution continues in 2026 with increased emphasis on interaction responsiveness through the Interaction to Next Paint (INP) metric, which measures the latency between user interactions and visual feedback across the entire page lifecycle. Unlike the deprecated First Input Delay (FID) that measured only initial interaction, INP evaluates all user interactions, providing a comprehensive assessment of page responsiveness that more accurately reflects real-world user experience.

For geo-ranking specifically, mobile INP performance has emerged as a critical signal due to the overwhelming mobile dominance of local search behavior. Google’s 2026 ranking algorithms apply stricter INP thresholds for local queries compared to informational searches, reflecting the high-intent nature of location-based searches where users expect immediate interaction responsiveness for actions like viewing directions, calling businesses, or accessing reservation systems.

The INP threshold for “good” performance remains 200 milliseconds or less, assessed at the 75th percentile of page loads, but competitive local markets effectively demand sub-150ms INP to maintain ranking parity with technically optimized competitors. Businesses should prioritize INP optimization for interaction-heavy elements common in local business websites: click-to-call buttons, map interactions, reservation widgets, menu navigation, and appointment booking forms. Each of these represents a high-value conversion pathway where interaction latency directly impacts both user experience and algorithmic quality assessment.

Regional INP monitoring has become essential for businesses serving geographically distributed markets. A dental practice with five locations should track INP performance segmented by user location to identify geographic areas where performance degradation might impact local ranking. Third-party monitoring platforms now offer geo-distributed synthetic testing that simulates user interactions from specific metropolitan areas, enabling businesses to proactively identify and resolve location-specific performance issues before they manifest as ranking declines.
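Segmenting INP by user location comes down to grouping field measurements by region and taking the 75th percentile of each group, the percentile at which Core Web Vitals are assessed. The sketch below uses the nearest-rank percentile method and invented measurements; a real pipeline would read these from a RUM (real user monitoring) data source.

```python
import math
from collections import defaultdict

def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]

def inp_by_region(events: list[tuple[str, float]], good_ms: float = 200.0) -> dict[str, dict]:
    """Group (region, inp_ms) events and report each region's p75 against the threshold."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for region, inp_ms in events:
        grouped[region].append(inp_ms)
    return {
        region: {"p75_ms": p75(values), "good": p75(values) <= good_ms}
        for region, values in grouped.items()
    }

events = [  # invented field measurements in milliseconds
    ("Seattle", 90), ("Seattle", 120), ("Seattle", 150), ("Seattle", 180),
    ("Tacoma", 140), ("Tacoma", 210), ("Tacoma", 260), ("Tacoma", 300),
]
print(inp_by_region(events))
```

A region whose p75 crosses the threshold while others stay under it points to a location-specific problem such as a slow third-party widget or a distant origin server.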

API Integration: Embedding Authoritative Geographic Data

Direct integration of Google Maps Platform APIs and Places API data into website metadata represents an advanced geo-ranking strategy that establishes contextual confidence through authoritative data source validation. When a business embeds real-time data from Google’s canonical location database directly into Schema.org markup and page metadata, it creates verifiable consistency between the business’s self-reported information and Google’s authoritative records—a signal that both traditional algorithms and AI models weight heavily in confidence scoring.

The Google Maps Embed API enables businesses to display interactive maps with precise location markers, while the Places API provides access to business information including current operating hours, business status, ratings, and a sample of recent review content. Real-time busyness and popular-times data appear in Google’s own interfaces but are not exposed through the Places API. Integrating the available data dynamically ensures that website content remains synchronized with Google Business Profile information, eliminating the discrepancies that erode entity confidence and trigger algorithmic skepticism.

Advanced implementations use the Places API to populate structured data fields programmatically, ensuring that Schema.org markup reflects current information from Google’s authoritative database. A multi-location business can implement automated hourly updates that fetch current operating hours, temporary closures, and special holiday schedules from the Places API and inject this data into LocalBusiness schema, maintaining perfect consistency between Google’s canonical records and the business’s structured markup.
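The transformation step of such a sync job might look like the sketch below, which converts opening-hours periods into openingHoursSpecification entries. The input is a hardcoded sample shaped like the periods array of a legacy Place Details response (day 0 is Sunday, times as "HHMM" strings). Treat that shape as an assumption to verify against the current API documentation. No network call is made here.

```python
DAYS = ["Sunday", "Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday"]

def hhmm(value: str) -> str:
    """Convert '0800' to '08:00'."""
    return f"{value[:2]}:{value[2:]}"

def to_opening_hours_spec(periods: list[dict]) -> list[dict]:
    """Group periods that share the same hours into one OpeningHoursSpecification each."""
    grouped: dict[tuple[str, str], list[str]] = {}
    for period in periods:
        if "close" not in period:
            continue  # always-open entries are out of scope for this sketch
        key = (hhmm(period["open"]["time"]), hhmm(period["close"]["time"]))
        grouped.setdefault(key, []).append(DAYS[period["open"]["day"]])
    return [
        {"@type": "OpeningHoursSpecification", "dayOfWeek": days, "opens": opens, "closes": closes}
        for (opens, closes), days in grouped.items()
    ]

# Sample input mirroring the hours in the Downtown Seattle markup.
sample_periods = (
    [{"open": {"day": d, "time": "0800"}, "close": {"day": d, "time": "1800"}} for d in (1, 2, 3, 4)]
    + [
        {"open": {"day": 5, "time": "0800"}, "close": {"day": 5, "time": "1600"}},
        {"open": {"day": 6, "time": "0900"}, "close": {"day": 6, "time": "1400"}},
    ]
)
specs = to_opening_hours_spec(sample_periods)
print(specs)
```

Grouping days with identical hours keeps the generated markup compact and close to what a person would write by hand.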

The Geocoding API deserves special attention for service-area businesses seeking to optimize GeoShape implementation. Rather than defining every coordinate by hand, an implementation can use the Geocoding API to convert service area descriptions (zip codes, neighborhoods, cities) into a representative point and a bounding viewport, then programmatically generate a GeoShape polygon from that viewport. The result is a rectangular approximation rather than an exact boundary, so areas that need to follow official boundary lines require a separate boundary dataset. This approach reduces human error in coordinate definition while keeping service area declarations anchored to geographic entities that Google recognizes.
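A Geocoding API result includes a rectangular viewport with northeast and southwest corners, which can be turned into a GeoShape rectangle. The sketch below works on a hardcoded viewport dictionary in that shape rather than calling the API. The values reuse the Downtown Seattle corners from the markup earlier in this chapter, and the function name is hypothetical.

```python
def viewport_to_geoshape(viewport: dict, description: str) -> dict:
    """Build a rectangular GeoShape from a viewport with 'northeast' and 'southwest' corners."""
    ne, sw = viewport["northeast"], viewport["southwest"]
    corners = [
        (ne["lat"], sw["lng"]),  # north-west
        (ne["lat"], ne["lng"]),  # north-east
        (sw["lat"], ne["lng"]),  # south-east
        (sw["lat"], sw["lng"]),  # south-west
    ]
    corners.append(corners[0])  # repeat the first point to close the ring
    return {
        "@type": "GeoShape",
        "polygon": " ".join(f"{lat},{lng}" for lat, lng in corners),
        "description": description,
    }

sample_viewport = {  # hardcoded stand-in for a geocoding result's viewport
    "northeast": {"lat": 47.6205, "lng": -122.3100},
    "southwest": {"lat": 47.5952, "lng": -122.3493},
}
shape = viewport_to_geoshape(sample_viewport, "Downtown Seattle")
print(shape["polygon"])
```

Because a viewport is only a bounding rectangle, the generated shape will usually cover more ground than the named area, so it is worth reviewing on a map before publishing.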

Contextual confidence—the degree to which AI models trust a business’s self-reported information—increases dramatically when website data sources include verifiable references to authoritative APIs. A business that displays “Hours updated from Google Business Profile” alongside dynamically populated operating hours communicates transparency and data integrity that LLMs recognize as quality signals. The technical implementation is straightforward: fetch data via API, display it with attribution, and include API response timestamps in Schema.org metadata to demonstrate recency and verification.

Chapter 3: Content Strategies for AI Contextual Ranking

The Evolution from Keyword Content to Contextual Authority

The content strategies that dominated local SEO from 2015 through 2023—keyword-optimized service pages, location-specific landing pages with templated content, and blog posts engineered for exact-match query targeting—have become not merely ineffective but actively counterproductive in the 2026 generative search landscape. AI models trained on billions of high-quality documents have developed sophisticated detection mechanisms for formulaic, low-value content created primarily for algorithmic manipulation rather than genuine user value.

Modern geo-ranking demands a fundamental paradigm shift from keyword content to contextual authority: comprehensive, expert-authored material that demonstrates verifiable expertise, communicates first-hand experience, establishes topical and geographic authority, and builds trust through transparency, attribution, and demonstrable competence. The businesses that dominate AI-generated recommendations are those that have transformed their content strategy from SEO optimization to expertise documentation—creating resources that AI models confidently cite because the content exhibits unmistakable markers of genuine authority.

This transformation reflects the underlying mechanics of how large language models evaluate source quality. Unlike traditional search algorithms that primarily assess signals external to content (backlinks, domain authority, user engagement metrics), LLMs perform deep linguistic analysis of content itself, evaluating semantic coherence, specificity depth, technical precision, logical structure, attribution patterns, and dozens of other textual features that correlate with expertise and trustworthiness. A single paragraph can contain sufficient signals for an AI model to assess author competence, information reliability, and citation worthiness—or to identify content as generic, derivative, or optimized for algorithms rather than humans.

The practical implication is profound: businesses can no longer outsource content creation to generalist writers following keyword briefs. Geo-ranking success in 2026 requires content authored by actual business principals, technical experts, and local specialists who possess demonstrable knowledge and can communicate with the specificity, nuance, and practical detail that AI models recognize as authentic expertise. The investment required is substantial, but the competitive moat created is equally significant—genuine expertise cannot be easily replicated by competitors without equivalent knowledge and experience.

EEAT Framework in 2026: How AI Models Verify Local Expertise

Experience, Expertise, Authoritativeness, and Trustworthiness (EEAT) has evolved from a quality rater guideline concept into the primary framework through which both traditional search algorithms and generative AI models evaluate content quality and citation worthiness. In the context of geo-ranking, EEAT assessment occurs at multiple levels: the business entity itself, individual content pieces, specific authors, and the cumulative digital footprint across the entire web presence.

Experience verification represents the most significant EEAT evolution in recent years, with AI models now actively seeking signals of first-hand involvement and direct knowledge. For local businesses, experience signals include original photography showing actual work completed, detailed case studies with specific project parameters and outcomes, named client testimonials with verifiable details, time-stamped documentation of services performed, and content that exhibits the kind of specific, practical knowledge that can only derive from direct experience rather than research or synthesis of secondary sources.

A residential contractor demonstrating experience doesn’t write “We install hardwood flooring in Seattle homes”—generic, unverifiable, potentially templated content that exhibits no experience markers. Instead, they document: “In January 2026, we installed 1,200 square feet of 5-inch white oak hardwood throughout a 1924 Craftsman home in the Wallingford neighborhood, working around the original fir trim and addressing the 3/4-inch slope in the dining room floor common in homes of this era.” This paragraph contains multiple experience signals: temporal specificity, precise measurements, architectural detail, neighborhood identification, era-appropriate construction knowledge, and problem-solving documentation—all markers that AI models associate with genuine first-hand experience.

Expertise verification focuses on demonstrated competence within specific domains. AI models evaluate technical vocabulary usage, depth of explanation, accuracy of information, logical coherence, and the ability to address complex topics with appropriate nuance. For local businesses, expertise manifests through detailed service explanations that reveal process knowledge, troubleshooting content that addresses real-world complications, educational material that demonstrates teaching ability, and industry-specific terminology used correctly and naturally rather than artificially inserted for keyword purposes.

Authoritativeness in the geo-ranking context derives from consistent entity validation across multiple independent sources. A dental practice establishes authoritativeness through: verified credentials and certifications documented on the website and validated through third-party professional databases, membership in recognized professional organizations, citations in local news coverage, speaking engagements at industry conferences, published research or articles in professional journals, and consistent mention across authoritative health and medical directories. Each independent validation strengthens the AI model’s confidence that the entity possesses genuine authority within its domain.

Trustworthiness signals have become increasingly sophisticated in 2026, with AI models evaluating transparency markers, attribution practices, accuracy track records, and consistency across information sources. Trust-building elements include: transparent pricing information with detailed explanations of cost factors, clear attribution of claims to authoritative sources, accessible contact information with verified phone numbers and physical addresses, explicit credentials and licensing documentation, privacy policy and data handling transparency, and honest acknowledgment of service limitations or situations requiring specialist referral.

Author Attribution: The Named Expert Advantage

Content authored by named individuals with verifiable credentials and established expertise receives demonstrably higher citation rates in generative AI responses compared to anonymously authored or generically attributed content. This reflects a fundamental principle in AI trust evaluation: accountability and verifiability. When content carries a specific author name with credentials, professional background, and contact information, AI models can cross-reference that author against other sources, validate their expertise, and assess their authority within specific topics—creating confidence pathways that anonymous content cannot provide.

Implementing author attribution effectively requires structured data markup using the Person type and the author property within Schema.org, dedicated author bio pages with comprehensive credential documentation, consistent author name usage across all content and external profiles, professional headshots and contact information, and linking to external validation sources such as LinkedIn profiles, professional certifications, and published work. The goal is to establish each content author as a distinct, verifiable entity within the knowledge graph rather than an ambiguous attribution.
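The markup side of author attribution might look like the following sketch, which nests a Person inside an Article's `author` property and links out to external profiles through `sameAs`. The function name, the author, and every URL here are placeholders invented for illustration.

```python
import json


def article_with_author(headline, author_name, job_title, bio_url, same_as):
    """Return Article JSON-LD with a nested Person as the author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            "url": bio_url,            # dedicated author bio page
            "sameAs": list(same_as),   # external profiles that validate the author
        },
    }


# Placeholder values: not a real person or real URLs.
doc = article_with_author(
    headline="What to Expect From a Root Canal",
    author_name="Dr. Jane Example",
    job_title="Lead Dentist",
    bio_url="https://example.com/team/jane-example",
    same_as=["https://www.linkedin.com/in/jane-example"],
)
print(json.dumps(doc, indent=2))
```

Using the same `name` and `url` values on every article by that author helps keep the attribution consistent across the site.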

Multi-author businesses should develop author expertise mapping, assigning content creation to team members based on their specific qualifications and experience areas. A dental practice might have the lead dentist author content about complex procedures and treatment planning, the office manager write about insurance and payment options, and the hygiene director create preventive care content. This specialization creates authentic expertise signals that AI models detect through vocabulary analysis, technical depth, and the natural confidence that comes from writing about personally practiced skills.

Hyper-Local Storytelling: Signaling Geographic Embeddedness

Geographic authority in the AI age requires content that demonstrates deep local knowledge and community integration—signals that a business is genuinely embedded within its geographic market rather than operating as a generic service provider with a local address. Hyper-local storytelling transforms standard service descriptions into narratives that reference specific neighborhoods, local landmarks, community events, historical context, and the distinctive characteristics that define local culture and geography.

A coffee shop in Portland’s Pearl District doesn’t describe itself generically as “a specialty coffee shop in Portland.” Instead, it tells its story: “We opened our doors in 2019 in the renovated Blitz-Weinhard building, where Henry Weinhard brewed beer for nearly a century. Our location puts us steps from Powell’s City of Books, making us the natural pre-browsing fuel stop for literary explorers. We source beans exclusively from Pacific Northwest roasters—currently featuring a rotating selection from Heart, Coava, and Water Avenue—and our head barista trained at the legendary Stumptown flagship before joining our team.”

This narrative approach accomplishes multiple geo-ranking objectives simultaneously. It establishes temporal depth (2019 opening) and historical knowledge (Blitz-Weinhard building context), creates semantic connections to recognized local entities (Powell’s Books, named roasters, Stumptown), demonstrates industry relationships and expertise (head barista’s training background), and communicates authentic local voice through specific, verifiable details that could only come from genuine local presence and knowledge.

Neighborhood history integration provides particularly powerful local authority signals. Content that references historical development patterns, architectural eras, demographic evolution, and community milestones demonstrates the kind of deep local knowledge that separates genuinely embedded businesses from recent arrivals or automated content generation. A real estate agent writing about Seattle neighborhoods who can reference the Boeing Bust of the 1970s, the transformation of South Lake Union from industrial to tech hub, or the historic preservation debates in Pioneer Square exhibits local expertise that AI models recognize as authoritative.

Community event participation and documentation creates ongoing opportunities for hyper-local content creation. Businesses that participate in farmers markets, neighborhood festivals, charity events, local sports team sponsorships, and community improvement initiatives can document this involvement with event-specific content that includes temporal markers, participant details, location specifics, and community impact. This content serves dual purposes: it builds genuine community relationships that generate citations and mentions, while simultaneously creating first-hand documentation of local engagement that strengthens geographic authority signals.

Data-Led Content: Original Research as Authority Amplification

Original data and proprietary research represent the highest-value content category for AI citation generation, as LLMs preferentially cite primary sources over derivative content when answering queries that benefit from statistical evidence or quantitative insight. Local businesses that conduct original research—customer surveys, market analysis, pricing studies, service trend documentation, or performance benchmarking—create content that generative search platforms cite with attribution, generating both direct visibility and authoritative backlinks from other content creators referencing the research.

The barrier to entry for original research is lower than most businesses assume. A residential HVAC company can survey their customer base about heating preferences, energy cost concerns, and system replacement decision factors, then publish findings as “2026 Seattle Homeowner Heating Survey: 500 Responses Reveal Top Priorities.” This research might reveal that 67% of respondents prioritize energy efficiency over installation cost, or that ductless heat pump interest has increased 43% year-over-year. These statistics become citable facts that AI models can reference when answering related queries, with proper attribution to the originating business.
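The arithmetic behind headline statistics like these is simple, and publishing it alongside the findings supports the methodology. The sketch below computes a response share and a year-over-year change. The raw counts are hypothetical, chosen only so the results match the illustrative 67% and 43% figures above.

```python
def share_pct(count: int, total: int) -> float:
    """Percentage of respondents giving a particular answer."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * count / total, 1)


def yoy_change_pct(previous: int, current: int) -> float:
    """Year-over-year percentage change between two counts."""
    if previous <= 0:
        raise ValueError("previous must be positive")
    return round(100 * (current - previous) / previous, 1)


# Hypothetical counts: 335 of 500 respondents, and 100 vs. 143 inquiries.
efficiency_share = share_pct(335, 500)
heat_pump_growth = yoy_change_pct(100, 143)
print(f"{efficiency_share}% prioritize efficiency; interest up {heat_pump_growth}%")
```

Reporting the underlying counts next to each percentage lets readers verify the figures for themselves.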

Pricing transparency tables provide exceptional value for both users and AI models, particularly in industries where pricing information is traditionally opaque or highly variable. A moving company that publishes detailed pricing breakdowns—base rates by home size, distance calculation methodology, seasonal rate variations, surcharge explanations, and comparative pricing for add-on services—creates reference content that generative search platforms cite when answering cost-related queries. The transparency builds trust while simultaneously establishing the business as an authoritative information source within its category.

Local market analysis content serves similar citation-generation functions. A commercial real estate broker who publishes quarterly analysis of office vacancy rates across Seattle submarkets, with breakdowns by building class, square footage pricing trends, and absorption rate calculations, creates authoritative reference content for AI models answering commercial real estate queries in that market. The key is presenting data in structured, easily parseable formats—tables with clear headers, explicit methodology documentation, temporal specificity, and source attribution where applicable.

Longitudinal tracking compounds research value. A landscaping company that has tracked seasonal service demand, weather pattern impacts on lawn health, and pest prevalence across different Seattle neighborhoods for five years possesses genuinely valuable proprietary data that no competitor can replicate without equivalent time investment. Publishing this data as “Five-Year Analysis: How Microclimate Variation Affects Lawn Care Needs Across 15 Seattle Neighborhoods” establishes hard-to-match expertise and creates lasting citation value.

The Citation-Trigger Technique: Structuring Content for AI Extraction

AI models don’t cite content randomly—they cite passages that match specific structural and linguistic patterns that facilitate extraction, attribution, and integration into generated responses. Understanding these patterns enables businesses to engineer content specifically for citation probability, structuring information in formats that generative search platforms can cleanly extract and confidently attribute.

The ideal citation-trigger paragraph follows a specific formula: open with a clear topic sentence that directly answers a potential query, follow with 2-3 sentences of supporting detail with specific data points or examples, and conclude with a statement that provides context or implication. This structure mirrors how LLMs construct responses, making the paragraph easily extractable as a self-contained answer unit. Avoid complex nested clauses, ambiguous pronouns, and references to content elsewhere on the page—citation-worthy paragraphs should function as standalone information units.

Question-answer formatting dramatically increases citation probability for informational queries. Rather than writing continuous prose, structure content as explicit questions followed by comprehensive answers. “What permits are required for bathroom remodeling in Seattle?” followed by a detailed paragraph listing specific permit types, cost ranges, application processes, and timeline expectations creates a perfectly extractable answer unit. AI models can identify the question pattern, validate that the subsequent content directly addresses the query, and cite the passage with confidence.

Definitional content with explicit structure serves similar citation functions. When explaining concepts, processes, or terminology, use explicit definition patterns: “X is defined as…” or “The process of X involves three stages: first… second… third…” This structured approach enables AI models to extract clean definitions and process explanations without extensive contextual parsing. A financial advisor explaining “What is a Qualified Small Business Stock exclusion?” should provide a complete, self-contained definition in the opening paragraph before expanding into examples and implications.

Comparative content benefits from parallel structure and explicit comparison frameworks. Rather than writing “Option A has certain advantages while Option B offers different benefits,” structure comparisons explicitly: “Traditional asphalt shingles typically cost $3.50-5.50 per square foot installed and last 15-20 years. Metal roofing costs $7.00-12.00 per square foot but provides a 40-70 year lifespan. For a 2,000 square foot roof, asphalt totals $7,000-11,000 while metal totals $14,000-24,000.” This parallel structure with specific data enables clean extraction and citation.
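The totals in a comparison like the roofing example should always be derived from the stated unit prices, so that the figures stay consistent when rates change. A minimal sketch follows, using the per-square-foot rates quoted in that example. The function name is a hypothetical helper.

```python
def installed_cost_range(area_sqft: float, low_per_sqft: float, high_per_sqft: float):
    """Return (low, high) total installed cost for a given roof area."""
    return area_sqft * low_per_sqft, area_sqft * high_per_sqft


asphalt = installed_cost_range(2000, 3.50, 5.50)
metal = installed_cost_range(2000, 7.00, 12.00)

for label, (low, high) in (("Asphalt", asphalt), ("Metal", metal)):
    print(f"{label}: ${low:,.0f}-{high:,.0f} for a 2,000 square foot roof")
```

Generating the comparison sentence from the same numbers that feed the pricing table avoids the mismatched figures that undermine trust.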

List and Table Formatting for Machine Readability

Structured content formats—bulleted lists, numbered sequences, comparison tables, and data matrices—receive preferential treatment in AI citation algorithms because they facilitate clean information extraction and reduce ambiguity in interpretation. When content can be parsed into discrete, well-defined elements rather than extracted from continuous prose, AI models assign higher confidence scores to the information and increase citation probability accordingly.

Best practice list formatting includes descriptive list introductions that establish context, parallel grammatical structure across all list items, sufficient detail within each item to provide standalone value, and concluding statements that synthesize or provide implications.

Avoid single-word or fragmentary list items—each element should provide complete information that could theoretically stand alone. A roofing company listing “Signs Your Roof Needs Replacement” should provide substantive descriptions: “Granule loss from asphalt shingles, visible as dark patches or accumulation in gutters, indicates advanced wear and typically requires replacement within 1-2 years” rather than simply “Granule loss.”

Table formatting should prioritize clarity and completeness, with descriptive column headers, consistent data formatting across rows, appropriate use of units and ranges, and table captions that establish context. A dental practice comparing treatment options might create a table with columns for Procedure, Duration, Cost Range, Insurance Coverage, and Longevity, ensuring that each cell contains complete, specific information rather than abbreviations or ambiguous values. Tables should be implemented in semantic HTML (table, caption, thead, tbody, tr, th, td elements) rather than CSS-styled divs to ensure accessibility and machine readability.
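One way to guarantee semantic table markup is to generate it from structured data instead of hand-editing HTML. The sketch below is a small, assumption-laden helper (the name `semantic_table` and the row values are illustrative placeholders, not real pricing) that emits `caption`, `thead`, `tbody`, `th`, and `td` elements and escapes cell text.

```python
from html import escape


def semantic_table(caption: str, headers: list, rows: list) -> str:
    """Render rows as a semantic HTML table with a caption and header cells."""
    head = "".join(f'<th scope="col">{escape(str(h))}</th>' for h in headers)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(str(cell))}</td>" for cell in row) + "</tr>"
        for row in rows
    )
    return (
        f"<table><caption>{escape(caption)}</caption>"
        f"<thead><tr>{head}</tr></thead>"
        f"<tbody>{body}</tbody></table>"
    )


# Placeholder rows for illustration only.
html_table = semantic_table(
    "Treatment options compared",
    ["Procedure", "Duration", "Cost Range"],
    [
        ["Procedure A", "1 visit", "$100-200"],
        ["Procedure B & follow-up", "2 visits", "$300-500"],
    ],
)
print(html_table)
```

Escaping every cell keeps characters such as `&` and `<` from breaking the markup when values come from a spreadsheet or CMS export.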

Visual EEAT: Original Imagery as Presence Verification

Original photography with embedded EXIF metadata, particularly GPS coordinates matching business locations, provides powerful verification signals that a business genuinely operates at claimed locations and performs described services. AI models have developed sophisticated image analysis capabilities that can detect stock photography, identify image reuse across multiple websites, and validate image authenticity through metadata analysis—creating strong differentiation between businesses that document actual work and those that rely on generic or stock imagery.

EXIF data preservation requires specific workflow protocols, as many image optimization tools and CMS platforms strip metadata by default. Businesses should implement image processing pipelines that preserve GPS coordinates, capture timestamps, camera information, and other metadata fields while performing necessary optimization for file size and loading performance. The presence of authentic EXIF data communicates transparency and verification that stock imagery fundamentally cannot provide.
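Before publishing, it can help to sanity-check that a photo's embedded GPS position actually sits near the claimed business or job-site location. The sketch below assumes the EXIF coordinates have already been read as degrees, minutes, and seconds plus a hemisphere reference (the way EXIF stores them), and that a 150 meter tolerance is acceptable. Both helper names and the tolerance are illustrative choices, and reading the EXIF tags from the file is out of scope here.

```python
import math


def dms_to_decimal(dms, ref: str) -> float:
    """Convert EXIF-style (degrees, minutes, seconds) plus N/S/E/W to decimal degrees."""
    degrees, minutes, seconds = (float(part) for part in dms)
    value = degrees + minutes / 60 + seconds / 3600
    return -value if ref in ("S", "W") else value


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two points (spherical Earth)."""
    radius_m = 6371000.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * radius_m * math.asin(math.sqrt(a))


def photo_near_site(photo, site, max_m: float = 150.0) -> bool:
    """True if the photo's (lat, lon) lies within max_m meters of the site's (lat, lon)."""
    return haversine_m(photo[0], photo[1], site[0], site[1]) <= max_m


# Hypothetical coordinates for illustration.
photo = (dms_to_decimal((47, 30, 0), "N"), dms_to_decimal((122, 15, 0), "W"))
print(photo, photo_near_site(photo, (47.5, -122.25)))
```

A check like this can run as part of the image pipeline, flagging photos whose metadata was stripped or whose location does not match the project being documented.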

Project documentation photography should follow journalism standards: include environmental context showing recognizable local features, capture before/during/after sequences that demonstrate actual work performed, include people (with appropriate permissions) to establish scale and human presence, and photograph specific details that demonstrate technical knowledge and quality standards. A landscape architect’s project gallery should show not just finished installations but site preparation, material selection, seasonal progression, and integration with existing neighborhood context.

Video content provides even stronger presence and expertise signals, particularly for service businesses where process demonstration communicates competence and builds trust. A 2-3 minute video showing a plumber diagnosing and repairing a specific issue, with narration explaining the problem identification process, repair methodology, and preventive recommendations, demonstrates expertise in ways that written content cannot match. AI models analyzing video transcripts, visual content, and metadata can validate that the content represents genuine expertise rather than curated stock footage or generic educational material.

Geotagged social media content creates ongoing verification of local presence and community engagement. Regular posts from business locations, event participation, community interactions, and customer engagements—all with location tags and timestamps—build cumulative evidence of genuine local operation. This distributed verification across platforms strengthens entity confidence scores, as AI models can cross-reference claimed business locations against actual posting patterns, customer tag locations, and review geotagging to validate consistency.

Transparency Content: Building Trust Through Openness

Radical transparency in business operations, pricing, processes, and limitations builds trust signals that AI models weight heavily in citation decisions. Businesses that openly discuss pricing methodology, explain process variations and their cost implications, acknowledge service limitations, and provide honest guidance even when it doesn’t benefit immediate sales demonstrate the kind of trustworthiness that generates long-term authority and citation preference.

Detailed pricing breakdowns with explanatory context serve dual purposes: they provide genuine user value while establishing transparency signals. A kitchen remodeling contractor that publishes “Why Kitchen Remodels Cost $35,000-85,000 in Seattle: Complete Price Breakdown” and then itemizes cabinet costs, countertop options, appliance ranges, labor rates, permit fees, and contingency reserves demonstrates transparency that builds trust. The content should explain price variation factors—cabinet quality grades, countertop material impacts, appliance tier differences—enabling readers to understand pricing rather than simply accept opaque estimates.

Process documentation that includes potential complications and problem-solving approaches demonstrates expertise while setting realistic expectations. A content piece titled “What Can Go Wrong During Foundation Repair (And How We Handle It)” that honestly discusses soil condition variations, unexpected drainage issues, permit complications, and weather delays shows the kind of practical, experience-based knowledge that distinguishes genuine experts from marketers. AI models recognize this content pattern as high-trust material worthy of citation when addressing related queries.

Competitive comparison content that fairly represents alternatives, including situations where competitors might be better choices, paradoxically builds authority through demonstrated objectivity. A specialty coffee roaster that publishes “When to Buy From Large Roasters vs. Local Micro-Roasters” and honestly discusses the advantages of large-scale production (consistency, availability, cost efficiency) alongside small-batch benefits (freshness, experimentation, local character) establishes credibility through fairness. AI models trained to detect bias and promotional content reward balanced, objective analysis with higher citation preference.

Chapter 4: Hyper-Local Authority & Geo-Citations

The Evolution of Citation Value: From Quantity to Contextual Quality

The citation landscape has undergone a radical transformation between 2023 and 2026, shifting from volume-based directory listing accumulation to contextually rich, editorially earned mentions across diverse local information ecosystems. Modern AI models evaluate citations not as binary signals of business existence but as multidimensional data points that communicate community integration, topical authority, sentiment quality, and geographic embeddedness. A single mention in a local news article about community involvement carries far more weight than dozens of automated directory listings with identical NAP information.

Contextual citation analysis represents the frontier of how generative AI platforms establish entity confidence and local authority. When an LLM encounters a business name in the context of a neighborhood news story about local business awards, a community forum discussion recommending services, or a local blogger’s detailed review of an experience, the model extracts far more than simple validation that the business exists. It captures sentiment signals, topical associations, community perception indicators, usage context, and the relationship dynamics between the business entity and the geographic community it serves.

The practical implications are profound: businesses can no longer achieve sustainable local authority through passive citation building via automated directory submission services. Instead, 2026 geo-ranking demands active community engagement that generates organic mentions across diverse local platforms—strategies that create genuine value for community members while simultaneously building the citation ecosystem that AI models interpret as authoritative local presence. The businesses dominating generative search recommendations are those that have become genuinely integrated into their local communities, not merely those that have optimized citation counts.

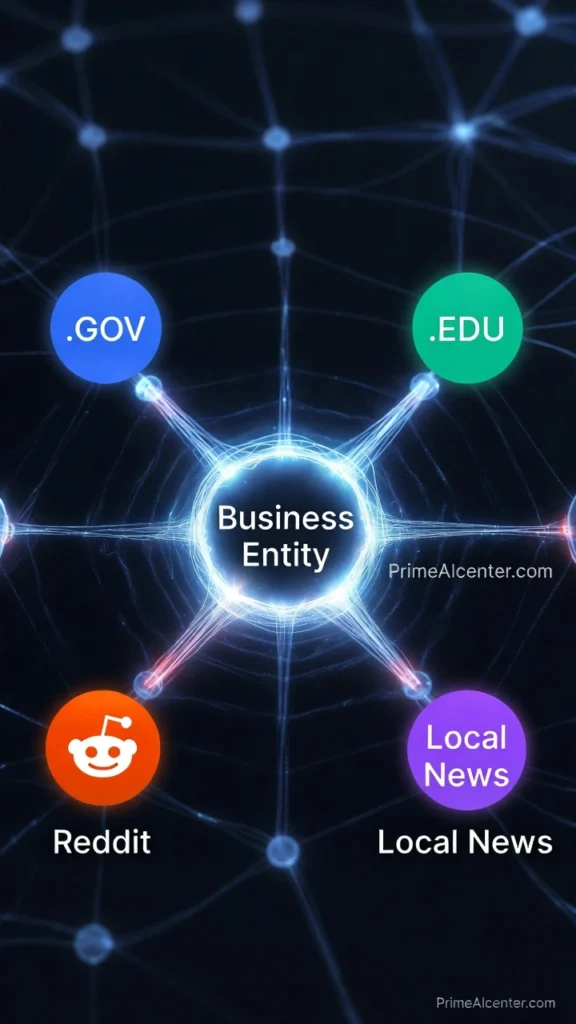

Cross-Platform Citation Validation: How AI Models Verify Local Authority

Modern LLMs employ sophisticated cross-referencing algorithms that validate business information and assess authority by analyzing mention patterns across multiple independent platforms simultaneously. A business claiming to be a “leading provider of sustainable landscaping in Portland” triggers AI verification protocols that search for corroborating evidence across local news archives, community forums, industry associations, social media platforms, and review ecosystems. The presence of consistent supporting mentions across diverse, editorially controlled sources builds entity confidence, while absence of independent validation triggers skepticism scoring.

Local news coverage represents the gold standard of citation value in 2026, as AI models recognize journalistic content as editorially vetted, factually verified, and subject to professional standards that distinguish it from promotional material. A feature story in the Seattle Times, Portland Business Journal, or neighborhood publications like Capitol Hill Times creates authoritative citations that LLMs weight heavily in recommendation algorithms. The contextual richness of news coverage—including quotes from business principals, descriptions of services or products, community impact documentation, and third-party validation from customers or industry experts—provides multidimensional entity information that pure directory listings cannot match.

Community forums, particularly Reddit and Nextdoor, function as authentic voice-of-customer platforms where AI models extract unfiltered sentiment and genuine recommendation patterns. A thread in r/Seattle where community members discuss and recommend specific contractors, restaurants, or service providers generates citation value precisely because it lacks commercial motivation—these are organic peer recommendations that LLMs recognize as high-trust signals. Businesses that consistently appear in positive community forum contexts accumulate authority that directory citations cannot replicate, as the conversational nature of forum mentions provides rich semantic context about service quality, specialization areas, and community reputation.

Niche local blogs maintained by neighborhood advocates, industry specialists, and community journalists create targeted citation opportunities with exceptional contextual relevance. A Capitol Hill food blogger’s detailed review of a new restaurant, a green building advocate’s feature on sustainable contractors, or a local parent blogger’s recommendations for family-friendly services all generate citations that communicate specific expertise within defined geographic and topical domains. AI models parsing these citations extract not just business validation but detailed attribute information, specialization signals, and community-context associations that inform recommendation algorithms.

The cross-referencing mechanism itself functions as an authority amplifier: businesses mentioned consistently across multiple independent platforms receive exponentially higher confidence scores than those with presence on only one or two platform types. A dental practice mentioned in local news coverage, recommended in Nextdoor threads, reviewed positively on Google and Yelp, featured in a neighborhood blog, and recognized by the local chamber of commerce demonstrates the kind of multi-source validation that LLMs interpret as definitive authority within a geographic market.

AI Sentiment Analysis in Reviews: Beyond Star Ratings to Semantic Understanding

Review analysis has evolved far beyond simple star-rating aggregation into sophisticated natural language processing that extracts semantic meaning, attribute-specific sentiment, comparative positioning, and experiential detail from review text. Modern LLMs trained on billions of reviews can parse nuanced sentiment patterns, identify specific service attributes, detect authentic voice versus incentivized content, and extract actionable business intelligence that informs both recommendation algorithms and competitive positioning.

The shift from quantitative to qualitative review analysis fundamentally changes optimization strategy. A business with a 4.8-star average rating based on generic positive reviews (“Great service!” “Highly recommend!”) performs worse in AI recommendation algorithms than a competitor with 4.6 stars but reviews containing rich semantic detail and attribute-specific praise. When a review states “The vegan options in this Seattle branch are unmatched: they offer three plant-based protein choices and can modify any bowl to be completely vegan without cross-contamination,” the LLM extracts multiple valuable signals: dietary accommodation capability, menu flexibility, food safety consciousness, and specific attribute superiority claims.

Attribute extraction from review text enables AI models to match businesses to highly specific queries that star ratings alone cannot address. A query for “dog-friendly restaurants with outdoor seating in Fremont” triggers semantic analysis of reviews for mentions of dog policies, patio availability, and neighborhood location—not simple rating comparisons. Businesses with reviews that naturally mention these attributes through authentic customer experience descriptions appear in AI recommendations even if overall ratings are slightly lower than competitors whose reviews lack attribute specificity.
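The matching logic described above can be approximated with a deliberately simple sketch. Production systems would rely on language models rather than keyword patterns; the attribute names and regular expressions in `ATTRIBUTE_PATTERNS` are hypothetical and exist only to show the shape of the idea: extract attributes from review text, then check whether a business's reviews collectively cover what the query asks for.

```python
import re

# Hypothetical attribute lexicon: attribute name -> patterns that signal it.
ATTRIBUTE_PATTERNS = {
    "dog_friendly": [r"dog[- ]friendly", r"\bdogs? (?:are )?(?:welcome|allowed)"],
    "outdoor_seating": [r"\bpatio\b", r"outdoor seating", r"\bsidewalk tables?\b"],
    "vegan_options": [r"\bvegan\b", r"plant[- ]based"],
}


def extract_attributes(review_text):
    """Return the set of attributes mentioned in a single review."""
    text = review_text.lower()
    return {
        attribute
        for attribute, patterns in ATTRIBUTE_PATTERNS.items()
        if any(re.search(pattern, text) for pattern in patterns)
    }


def matches_query(reviews, required_attributes):
    """True if the reviews, taken together, mention every required attribute."""
    found = set()
    for review in reviews:
        found |= extract_attributes(review)
    return set(required_attributes) <= found


reviews = [
    "We brought our lab and the staff said dogs are welcome on the patio.",
    "Great service!",
]
print(matches_query(reviews, ["dog_friendly", "outdoor_seating"]))
```

Note that the generic “Great service!” review contributes nothing to the match, which is the point of the paragraph above: attribute-rich reviews are what make a business retrievable for specific queries.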

Sentiment pattern analysis identifies consistency and authenticity markers that distinguish genuine customer experiences from manipulated review profiles. AI models detect suspicious patterns like temporally clustered five-star reviews with similar phrasing, excessive use of branded keywords suggesting incentivized content, or sentiment distributions that deviate from statistical norms for the business category. Conversely, natural review patterns (varied sentiment across the rating spectrum, specific experiential detail, temporal distribution matching business age and volume, and language diversity) signal authentic customer feedback that LLMs weight more heavily in authority scoring.
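One of the suspicious patterns named above, temporally clustered five-star reviews with similar phrasing, is easy to illustrate. The sketch below is a simplified heuristic, not the detection method of any real platform: the three-day window and the 0.8 similarity threshold are assumed values, and text similarity is measured with Python's standard `difflib.SequenceMatcher` as a stand-in for more sophisticated semantic comparison.

```python
from datetime import date
from difflib import SequenceMatcher
from itertools import combinations


def suspicious_pairs(reviews, max_days=3, min_similarity=0.8):
    """Flag pairs of five-star reviews posted close together with similar text.

    reviews: list of (posted_on: date, stars: int, text: str) tuples.
    Returns a list of (index_a, index_b) pairs that look coordinated.
    """
    flagged = []
    for (i, a), (j, b) in combinations(enumerate(reviews), 2):
        if a[1] != 5 or b[1] != 5:
            continue  # only five-star reviews are considered here
        if abs((a[0] - b[0]).days) > max_days:
            continue  # too far apart in time to count as a cluster
        similarity = SequenceMatcher(None, a[2].lower(), b[2].lower()).ratio()
        if similarity >= min_similarity:
            flagged.append((i, j))
    return flagged


reviews = [
    (date(2026, 3, 1), 5, "Best plumber in town, highly recommend ABC Plumbing!"),
    (date(2026, 3, 2), 5, "Best plumber in town, highly recommend ABC Plumbing!!"),
    (date(2026, 1, 10), 4, "Arrived late but fixed the leak and explained the repair."),
]
print(suspicious_pairs(reviews))
```

For businesses, the practical takeaway is the inverse of the heuristic: reviews that arrive gradually and describe different experiences in different words are unlikely to trip this kind of check.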

Competitive sentiment analysis within reviews provides particularly valuable signals for AI recommendation logic. When customers explicitly compare businesses in review text (“We tried three other contractors before finding ABC Plumbing, and their response time was at least twice as fast”), LLMs extract comparative positioning data that informs head-to-head recommendation scenarios. Businesses that accumulate positive comparative mentions in reviews establish superiority signals that influence AI citation preference when multiple qualified options exist for a query.

Response quality to negative reviews functions as a trust and professionalism signal that AI models incorporate into authority assessment. Professional, specific, solution-oriented responses to criticism demonstrate accountability and customer service commitment, while defensive, generic, or absent responses to negative feedback signal potential reliability issues. The semantic content of business responses—acknowledgment of specific issues, explanation of resolution steps, contact information for follow-up—contributes to overall trustworthiness scoring independent of the negative review itself.

Hyper-Local Backlink Strategy: Authoritative Link Acquisition from Civic Sources

Strategic backlink acquisition from local government, educational institutions, and nonprofit organizations creates citation value that far exceeds traditional link building metrics. The combination of domain authority from .gov and .edu domains, editorial validation inherent in civic organization recognition, and geographic specificity of local institutional links generates powerful authority signals that AI models prioritize in local recommendation algorithms.

Local government link acquisition strategies center on genuine civic participation and community value creation. Businesses can earn .gov links through participation in municipal sustainability programs with business directory listings on city environmental websites, sponsorship of city-operated facilities or programs with recognition on parks department or library websites, involvement in economic development initiatives with features on chamber of commerce or downtown association sites managed by local government, and contribution to civic improvement projects with acknowledgment on city planning or community development pages. Each link carries implicit governmental endorsement that AI models interpret as authoritative validation.

Educational institution link building requires value alignment between business capabilities and institutional needs. Local businesses can secure .edu links through guest lecture programs with links from faculty pages or department event calendars, internship and job placement partnerships with career services directory inclusion, sponsored research or community projects with acknowledgment on program websites, and alumni association participation with business directory presence on alumni networking platforms. The educational context of these links communicates expertise and knowledge-sharing that enhances topical authority beyond simple link equity.

Nonprofit organization backlinks emerge from authentic community engagement and charitable participation. Effective nonprofit link acquisition strategies include: event sponsorship with recognition on organization websites and event pages, volunteer program participation with business volunteer team acknowledgment, in-kind service donation with case study or impact story features, board membership or advisory roles with leadership page listings, and cause-related partnerships with collaborative program documentation. These links demonstrate community investment and values alignment that AI models recognize as local embeddedness signals.

The outreach approach for civic link building differs fundamentally from traditional link acquisition tactics. Rather than transactional requests for links, businesses should lead with genuine value propositions: “We’d like to sponsor your summer reading program and provide bookmarks for participants” or “Our team would like to volunteer for the community garden project.” The link becomes a natural byproduct of authentic participation rather than the primary objective, creating sustainable relationships that generate ongoing citation value as programs continue and partnerships deepen.

Geo-Tagged Social Content: Visual Validation for Knowledge Graph Confidence

Geotagged content across visual social platforms—particularly Instagram, TikTok, and Facebook—functions as distributed verification infrastructure that validates business location claims, demonstrates active operations, and builds visual evidence of community presence. AI systems increasingly incorporate social media signals into entity validation protocols, cross-referencing claimed business locations against user-generated content tagged at those coordinates to verify authentic physical presence versus virtual-only operations.

Instagram location tagging creates cumulative evidence of business activity and customer visitation patterns. When dozens or hundreds of customers tag a restaurant location in their posts, the aggregate geotagged content validates that the business operates at the claimed address, attracts actual customer traffic, and generates the kind of organic social engagement associated with quality service. AI models analyzing this distributed validation assign higher confidence scores to businesses with robust geotagged content ecosystems compared to those with sparse or absent social location verification.

TikTok’s location-based discovery features and user-generated content patterns provide particularly valuable validation for businesses targeting younger demographics or trending service categories. A coffee shop that appears in dozens of TikTok videos tagged with its precise location, featuring authentic customer experiences, product demonstrations, and ambient atmosphere captures, builds social proof that AI models incorporate into recommendation algorithms for relevant queries. The platform’s emphasis on authentic, unpolished content makes TikTok mentions especially credible as validation sources.

Business-generated geotagged content should maintain consistency with claimed locations and service areas while documenting actual business activities, community engagement, and customer interactions. Regular posting from business locations with accurate geotags, employee team posts from job sites or service locations, event participation documentation with venue tags, and customer feature content with permission and location attribution all contribute to cumulative location validation that strengthens knowledge graph confidence.
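A business can audit its own geotag consistency before any external system does. The sketch below computes the share of post geotags that fall within a tolerance radius of the claimed address, using the standard haversine great-circle formula. The 150-meter tolerance, the function names, and the sample coordinates are assumptions for illustration; they do not reflect a threshold used by any platform.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = radians(lat1), radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = radians(lon2 - lon1)
    a = sin(d_phi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))


def geotag_consistency(claimed, post_tags, tolerance_m=150):
    """Fraction of post geotags within tolerance_m of the claimed location.

    claimed: (lat, lon) of the business address.
    post_tags: list of (lat, lon) tuples taken from geotagged posts.
    """
    if not post_tags:
        return 0.0
    near = sum(
        1
        for lat, lon in post_tags
        if haversine_m(claimed[0], claimed[1], lat, lon) <= tolerance_m
    )
    return near / len(post_tags)


# Hypothetical coordinates: two tags near the storefront, one across town.
claimed_address = (47.6510, -122.3500)
tags = [(47.6510, -122.3500), (47.6512, -122.3502), (47.6062, -122.3321)]
print(f"{geotag_consistency(claimed_address, tags):.2f}")
```

Service-area businesses that post from job sites would naturally score lower against a single address, so a real audit would compare tags against the full claimed service area rather than one point.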

Case Study: Community Integration Driving 3X GEO Visibility Growth

Consider the trajectory of Evergreen Landscaping, a Portland-based sustainable landscaping company that transformed from digital invisibility to market-leading GEO visibility through systematic community integration over 18 months. In January 2025, the company appeared in zero AI-generated recommendations for local landscaping queries and maintained minimal presence beyond basic directory listings.

The transformation began with strategic community sponsorship focusing on environmental alignment: title sponsorship of the neighborhood association’s rain garden installation project ($3,500 investment), quarterly educational workshops on sustainable landscaping practices hosted at the local library (a free community service generating a .gov link from the library events calendar), and partnership with a local high school’s environmental science program providing hands-on learning opportunities in native plant landscaping (generating a .edu link from the school’s community partners page).

These initiatives generated cascading citation value across multiple platforms. The rain garden project earned coverage in the neighborhood newspaper with a detailed business mention and owner quotes (authoritative local news citation), sparked discussion threads on the neighborhood’s Nextdoor group with organic recommendations from project participants (community forum validation), and resulted in a detailed case study on the city’s sustainable development website (a second .gov link with substantial contextual content). The library workshops generated consistent quarterly mentions on the library’s event calendar, created opportunities for local gardening bloggers to attend and write features, and built a repository of first-hand experience content as attendees discussed what they learned on social media with business tags.

By July 2026, Evergreen Landscaping appeared in 73% of AI-generated responses to sustainable landscaping queries in the Portland metro area, compared to 0% eighteen months prior. The business achieved featured citations in ChatGPT Search, Google SGE, and Perplexity AI recommendations, with typical citation context highlighting their “community education focus,” “environmental expertise,” and “strong local partnerships”—all language derived from contextual citations across news, educational, and civic platforms. Organic revenue increased 340% year-over-year, with attribution analysis revealing that 68% of new customers discovered the business through AI recommendations or voice search results.

The case demonstrates that systematic community integration—genuine participation creating authentic value—generates distributed citation ecosystems that AI models interpret as definitive local authority. The investment in community relationships produced returns far exceeding traditional marketing expenditure, while simultaneously building competitive moats that competitors cannot replicate without equivalent time investment and authentic community engagement.

Chapter 5: Off-Page Geo-Signals, Social Validation & The Future of Predictive AR Search

Real-Time Social Geo-Signals: The Living Validation Layer

The 2026 geo-ranking ecosystem extends far beyond static website optimization and traditional citation building into real-time social validation systems that continuously verify business operations, customer engagement, and community presence. Social geo-signals (check-ins, live location tags, user-generated visual content, and augmented reality interactions) function as dynamic validation infrastructure that AI models query in real time when generating recommendations. This creates competitive advantages for businesses that actively cultivate ongoing social proof rather than relying solely on historical citation accumulation.

Facebook and Instagram check-ins represent foundational social validation mechanisms that communicate active business operations and customer visitation patterns. When customers check in at a restaurant during dinner service, tag a retail location during shopping visits, or mark attendance at a business-hosted event, they create timestamped, geolocated validation points that aggregate into comprehensive activity profiles. AI models analyzing these check-in patterns can assess business popularity trends, peak operating hours, customer demographic patterns, and relative market position compared to competitors—all derived from organic social behavior rather than business-controlled content.
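As a small illustration of how timestamped check-ins aggregate into an activity profile, the sketch below counts check-ins by hour of day and reports the busiest hours. The function name and sample timestamps are hypothetical, and real check-in data would first have to be exported from whichever platform holds it.

```python
from collections import Counter
from datetime import datetime


def peak_hours(checkins, top_n=3):
    """Return the top_n (hour_of_day, count) pairs from check-in timestamps."""
    counts = Counter(timestamp.hour for timestamp in checkins)
    return counts.most_common(top_n)


# Hypothetical check-ins at a restaurant over two evenings and one lunch.
checkins = [
    datetime(2026, 4, 3, 18, 15),
    datetime(2026, 4, 3, 18, 40),
    datetime(2026, 4, 3, 19, 5),
    datetime(2026, 4, 4, 12, 30),
    datetime(2026, 4, 4, 18, 50),
    datetime(2026, 4, 4, 19, 20),
]
print(peak_hours(checkins, top_n=2))
```

The same counting approach extends to day-of-week or month-over-month trends by changing the key passed to `Counter`.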

Live location tagging on Instagram Stories and TikTok creates ephemeral but powerful validation signals that demonstrate real-time business activity and customer satisfaction. A customer posting a Story from a coffee shop with a location tag and positive commentary generates immediate social proof that AI crawlers incorporate into current business status assessment. The temporal recency of live tags carries exceptional weight in recommendation algorithms for immediate-intent queries such as “where should I get coffee right now in Fremont,” where businesses with recent social activity signals demonstrate current operations and fresh customer validation.
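Recency weighting of this kind is commonly modeled with exponential decay, where each signal loses half its weight after a fixed interval. The sketch below applies that idea to live location tags. The six-hour half-life and the function name are assumptions for illustration; no platform publishes the decay it actually uses, if any.

```python
from datetime import datetime, timedelta


def recency_score(tag_times, now, half_life_hours=6.0):
    """Sum of exponentially decayed weights for a list of tag timestamps.

    A tag posted right now contributes 1.0, a tag one half-life old
    contributes 0.5, and so on. Timestamps in the future are ignored.
    """
    score = 0.0
    for tagged_at in tag_times:
        age_hours = (now - tagged_at).total_seconds() / 3600
        if age_hours >= 0:
            score += 0.5 ** (age_hours / half_life_hours)
    return score


now = datetime(2026, 5, 1, 9, 0)
fresh_shop = [now - timedelta(minutes=20), now - timedelta(hours=1)]
stale_shop = [now - timedelta(days=3), now - timedelta(days=4)]
print(recency_score(fresh_shop, now) > recency_score(stale_shop, now))
```

Under this model, two tags from the past hour outweigh any number of week-old tags for a "right now" query, which is why a steady trickle of current activity matters more than a one-time burst.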

User-generated visual content tagged to business locations provides rich contextual information that AI models parse for attribute extraction and experience validation. Photos of restaurant dishes, retail product displays, service environments, and customer experiences create distributed visual documentation that supplements business-controlled imagery. Advanced AI vision models analyze this content to verify claimed business attributes: menu diversity, cleanliness standards, product availability, facility aesthetics, and service quality indicators. A business claiming “extensive vegan menu options” gains validation when customer photos consistently show diverse plant-based dishes rather than token offerings.