On March 11, 2026, an anonymous AI model called Hunter Alpha quietly appeared on OpenRouter. Within a week, it had processed more than 1 trillion tokens, topped daily usage charts, and outperformed models that cost 25 times more. Developers worldwide asked the same question: Is this DeepSeek V4?

The answer stunned the global AI industry. It was Xiaomi — and the model was MiMo V2 Pro.

This Xiaomi MiMo V2 Pro review covers everything you need to know: confirmed specs, real benchmark data, a full pricing breakdown, and an honest comparison against GPT-5.4 and Claude Opus 4.6, so you can decide whether MiMo V2 Pro belongs in your AI stack in 2026.

What Is MiMo V2 Pro?

MiMo V2 Pro is Xiaomi’s flagship foundation model, officially launched on March 18, 2026. It is a closed-weight, trillion-parameter large language model built specifically for agentic workloads: coding agents, tool use, long-horizon reasoning, and production engineering tasks at scale.

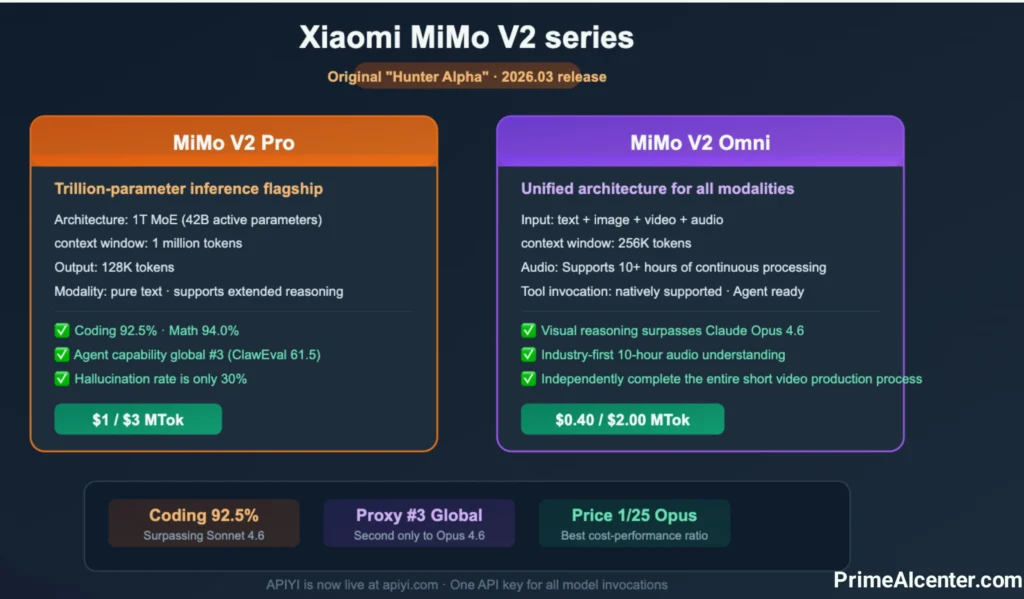

Xiaomi released the MiMo V2 family as three distinct models in a single announcement:

- MiMo V2 Pro — Flagship LLM optimized for agentic tasks and coding

- MiMo V2 Omni — Multimodal model handling text, image, video, and 10+ hours of continuous audio

- MiMo V2 TTS — High-fidelity text-to-speech with multi-dialect and emotional control

The team behind the MiMo division is led by Fuli Luo, a former core contributor to DeepSeek R1 and the V-series models. Her move to Xiaomi in late 2025 is the key to understanding why a consumer electronics company was able to ship a trillion-parameter frontier model in under a year.

MiMo V2 Pro is not positioned as a general-purpose chat assistant. Xiaomi describes it explicitly as the brain of agent systems — a model designed to act across complex, multi-step workflows rather than respond to single queries. This distinction matters when evaluating it against Claude and GPT-5.4.

If you want to understand the broader shift toward AI agents in 2026, see our guide to what AI agents are and how they work.

The Hunter Alpha Story: The Most Dramatic AI Launch of 2026

In early March 2026, an anonymous model named Hunter Alpha appeared on OpenRouter with no announcement, no branding, and no documentation beyond its spec sheet: one trillion parameters, a 1M-token context window, and fully free access.

Within one week, Hunter Alpha had processed over 1 trillion tokens in total usage. It topped OpenRouter’s daily usage charts for multiple consecutive days. Developers compared its performance to GPT-5.2 and Claude Opus 4.6 at a price of zero — and nobody knew who built it.

The community’s leading theory was DeepSeek V4. The architectural patterns, the performance characteristics, the May 2025 knowledge cutoff, and the model’s self-description as a Chinese AI all pointed toward one of China’s most respected open-source labs. Developer speculation reached peak velocity across X, Reddit, and Hacker News.

On March 18, 2026, Xiaomi ended the mystery. The company confirmed: Hunter Alpha was an early internal test build of MiMo V2 Pro. A companion model on OpenRouter, Healer Alpha, was confirmed as an early build of MiMo V2 Omni. Fuli Luo described the strategy in a post as a “quiet ambush” on the global frontier — using real developer adoption to validate performance before revealing the product identity.

The result of this approach: by the time Xiaomi’s official launch landed, MiMo V2 Pro had 1 trillion tokens of verified real-world usage data — something no benchmark can produce, and something no traditional product launch can manufacture.

This is the AI launch story of Q1 2026. Not a press release. Not a benchmark drop. A stealth deployment that let the work speak first.

MiMo V2 Pro: Confirmed Specs & Architecture

| Specification | Confirmed Value |
|---|---|
| Total Parameters | 1 Trillion (sparse MoE) |
| Active Parameters per Forward Pass | 42 Billion |
| Architecture | Sparse Mixture-of-Experts (MoE) |
| Context Window | 1,048,576 tokens (~1M) |
| Maximum Output Tokens | 131,072 tokens |
| Hybrid Attention Ratio | 7:1 (upgraded from 5:1 in Flash) |
| Knowledge Cutoff | May 2025 |
| Open Source | No (closed-weight) |
| Hallucination Rate | 30% (vs 48% in Flash) |
| Official Release Date | March 18, 2026 |
| API Platforms | platform.xiaomimimo.com, OpenRouter, MiMo Studio |

The architecture is built around two principles: efficiency and long-context reliability.

With 1 trillion total parameters but only 42 billion active per forward pass, MiMo V2 Pro achieves frontier-level reasoning without the compute costs of a dense model at equivalent scale. This is the MoE advantage in practice — and it is why Xiaomi can price the model at $1 per million input tokens while maintaining competitive benchmark performance.
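The sparsity behind that claim is simple arithmetic on the two parameter counts in the spec table. A minimal sketch follows; the constant and function names are illustrative, and the calculation says nothing about memory, since a sparse MoE model generally still has to keep all experts loaded.

```python
# Parameter counts as listed in the spec table.
TOTAL_PARAMS = 1_000_000_000_000   # 1 trillion total
ACTIVE_PARAMS = 42_000_000_000     # 42 billion active per forward pass


def active_fraction(active: int, total: int) -> float:
    """Share of the model's parameters used for a single forward pass."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"{fraction:.1%} of parameters are active per forward pass")
```

Roughly 4% of the weights do the work for any given token, which is where the per-token compute saving over a dense trillion-parameter model comes from.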

The upgraded 7:1 hybrid attention ratio (up from 5:1 in MiMo V2 Flash) is the engineering decision behind the 1M-token context window’s practical usability. Standard transformer architectures face quadratic compute increases as context grows. The hybrid attention mechanism manages this at scale — meaning the 1M-token window is not just a marketing spec, but a production-grade feature confirmed during 1 trillion tokens of real usage.

For enterprise teams evaluating long-context AI deployments, see our Enterprise AI Agent Deployment guide which covers architecture, security, and operational considerations in detail.

MiMo V2 Pro Benchmark Results — March 2026

Global Intelligence Index Rankings (Artificial Analysis, March 2026)

| Global Rank | Model | Intelligence Index Score |
|---|---|---|
| #1 | Gemini 3.1 Pro Preview | 57 |
| #2 | GPT-5.4 (xhigh) | 57 |
| #3 | GPT-5.3 Codex (xhigh) | 54 |
| #4 | Claude Opus 4.6 (Adaptive Reasoning) | 53 |
| #5 | Claude Sonnet 4.6 (Adaptive Reasoning) | 52 |
| #1 open weights | GLM-5 (Reasoning) | 50 |
| #8–10 | MiMo V2 Pro | 49 |

Agentic & Coding Benchmark Comparison

| Benchmark | MiMo V2 Pro | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|---|
| Artificial Analysis Index | 49 | 53 | 57 | 57 |
| PinchBench | 84.0 (#3 global) | ~86+ | — | — |
| ClawEval (Agentic) | 61.5 (#3 global) | 66.3 | ~55 | — |
| Terminal-Bench 2.0 | 86.7 (#1 global) | 65.4 | 75.1 | — |
| GDPval-AA Agentic Elo | 1434 (#1 Chinese models) | — | 83.0% (a percentage, not an Elo rating; not directly comparable) | — |
| SWE-Bench Verified | — | 80.8% | ~80% | 80.6% |
| SWE-Bench Pro | — | ~45.9% | 57.7% | 54.2% |
| Hallucination Rate | 30% | Lower | Lower | Lower |

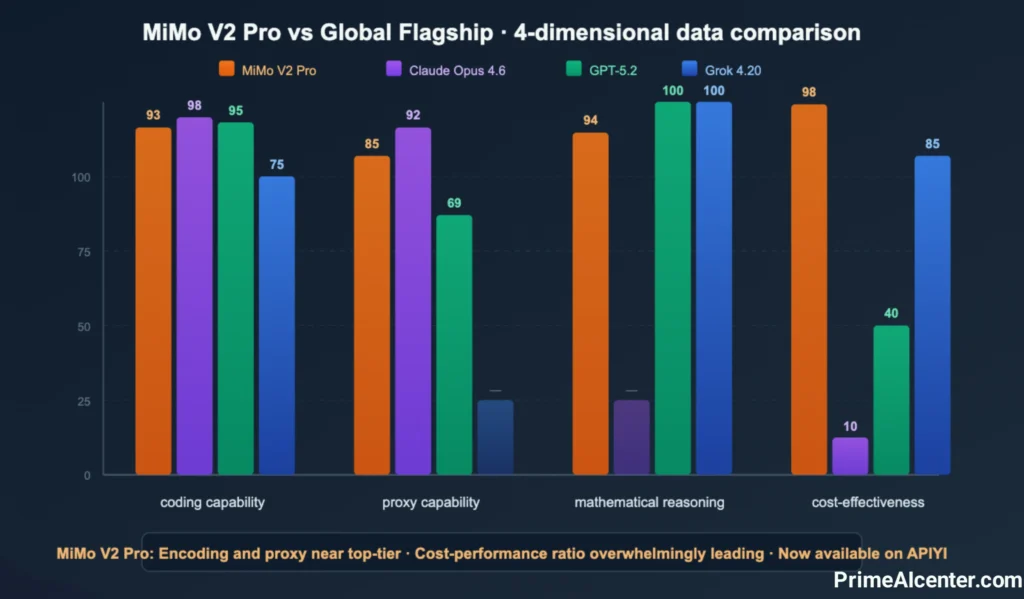

Two numbers define MiMo V2 Pro’s benchmark position: ClawEval 61.5 (#3 globally) and Terminal-Bench 2.0 86.7 (#1 globally).

The ClawEval score places MiMo V2 Pro ahead of GPT-5.4 in agentic capability, trailing only the two leading Claude Opus 4.6 variants. For a model at $1/M input tokens, this is a benchmark position that should not be possible according to 2024-era pricing logic.

Terminal-Bench 2.0 is the clearest win. An 86.7 score surpasses both GPT-5.4 (75.1) and Claude Opus 4.6 (65.4) by a margin that is not noise. For workflows involving live terminal execution, CLI operations, and command-based automation, MiMo V2 Pro outperforms both Western flagships.

One important caveat: several of Xiaomi’s own benchmark results were obtained within the OpenClaw framework, one of Xiaomi’s five launch partners, so they may not transfer unchanged to other agent scaffolds. Independent third-party verification is limited as of March 22, 2026. The Artificial Analysis Intelligence Index score of 49 is the most reliable external data point currently available, and it places MiMo V2 Pro in the top 10 models globally.

The real-world validation is the 1 trillion tokens processed during the Hunter Alpha stealth phase, with coding tools consistently ranking as the top use cases by call volume. At scale, that data is more meaningful than any controlled benchmark.

MiMo V2 Pro Pricing & API Costs

| Context Range | Input Price (per 1M tokens) | Output Price (per 1M tokens) |
|---|---|---|
| Up to 256K tokens | $1.00 | $3.00 |
| 256K to 1M tokens | $2.00 | $6.00 |
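The two tiers can be turned into a rough per-request estimate. The sketch below is not Xiaomi’s billing logic: it assumes that "256K" means 256,000 tokens, that the tier is chosen from the input token count, and that the whole request is billed at that tier’s rates. All names are illustrative.

```python
TIER_BOUNDARY_TOKENS = 256_000  # assumption: "256K" read as 256,000 tokens

# (input $/1M tokens, output $/1M tokens), taken from the pricing table
SHORT_CONTEXT_RATES = (1.00, 3.00)
LONG_CONTEXT_RATES = (2.00, 6.00)


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request in USD.

    Assumption: the tier is picked from the input token count and the
    whole request is billed at that tier's rates.
    """
    if input_tokens <= TIER_BOUNDARY_TOKENS:
        input_rate, output_rate = SHORT_CONTEXT_RATES
    else:
        input_rate, output_rate = LONG_CONTEXT_RATES
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


print(request_cost_usd(100_000, 4_000))  # falls in the short-context tier
print(request_cost_usd(600_000, 4_000))  # falls in the long-context tier
```

Check the official pricing page before relying on the boundary behavior; a provider could also bill only the tokens above the threshold at the higher rate.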

Cost Comparison: Full Frontier Model Pricing

| Model | Input (per 1M) | Output (per 1M) | AA Index Benchmark Cost | Intelligence Index |
|---|---|---|---|---|
| MiMo V2 Pro | $1.00 | $3.00 | $348 | 49 |
| Gemini 3.1 Pro | $2.00 | $12.00 | — | 57 |
| GPT-5.4 | $2.50 | $15.00 | — | 57 |
| Claude Opus 4.6 | $5.00 | $25.00 | $2,486 | 53 |
| GPT-5.2 | — | — | $2,304 | ~50 |

The Artificial Analysis benchmark cost figure is the most direct expression of MiMo V2 Pro’s value proposition. Running the full intelligence evaluation suite costs $348 with MiMo V2 Pro, compared to $2,304 with GPT-5.2 and $2,486 with Claude Opus 4.6. That is a 6.6x to 7.1x cost advantage for a model that scores 4 points below Claude Opus 4.6 and roughly 1 point below GPT-5.2 on the same index.
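The ratios follow directly from the three benchmark cost figures in the table. A minimal sketch, with illustrative variable names:

```python
# Cost in USD to run the Artificial Analysis evaluation suite (from the table).
BENCHMARK_COST_USD = {
    "MiMo V2 Pro": 348,
    "GPT-5.2": 2304,
    "Claude Opus 4.6": 2486,
}

baseline = BENCHMARK_COST_USD["MiMo V2 Pro"]

# How many times more each competitor costs than MiMo V2 Pro.
ratios = {
    model: cost / baseline
    for model, cost in BENCHMARK_COST_USD.items()
    if model != "MiMo V2 Pro"
}

for model, ratio in ratios.items():
    print(f"{model}: {ratio:.1f}x the cost of MiMo V2 Pro")
```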

For high-volume agentic pipelines, this is not a marginal difference. On input alone, a team running 10 million tokens per day (about 300 million per month) would spend approximately $1,500/month at Claude Opus 4.6 pricing ($5/M) and $300/month at MiMo V2 Pro pricing ($1/M). The 5x ratio holds at any volume: at 10 billion input tokens per month, the bill is roughly $50,000 versus $10,000, and that $40,000/month gap funds significant engineering capacity.
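The input-side arithmetic is easy to reproduce for your own volumes. This sketch uses the list input prices from the table and MiMo V2 Pro’s tier for contexts up to 256K; the two monthly volumes are hypothetical examples, and output tokens are ignored.

```python
def input_cost_usd(tokens: int, price_per_million: float) -> float:
    """Cost in USD for a number of input tokens at a per-million price."""
    return tokens * price_per_million / 1_000_000


# Input price in USD per 1M tokens, from the pricing table.
PRICES = {"Claude Opus 4.6": 5.00, "MiMo V2 Pro": 1.00}

# Hypothetical volumes: 10M tokens/day over 30 days, and 10B tokens/month.
for monthly_tokens in (10_000_000 * 30, 10_000_000_000):
    costs = {
        model: input_cost_usd(monthly_tokens, price)
        for model, price in PRICES.items()
    }
    gap = costs["Claude Opus 4.6"] - costs["MiMo V2 Pro"]
    print(f"{monthly_tokens:,} tokens/month: {costs} (gap ${gap:,.0f})")
```

Agentic workloads also generate output tokens, where the price gap is wider, so the input-only figure understates the total difference.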

Free access: Xiaomi is offering one week of free API access for developers via five agent framework partners — OpenClaw, OpenCode, KiloCode, Blackbox, and Cline. This is a zero-cost evaluation path for any team already building in those ecosystems.

MiMo V2 Pro vs GPT-5.4 vs Claude Opus 4.6: Full Head-to-Head

| Category | MiMo V2 Pro | GPT-5.4 | Claude Opus 4.6 |
|---|---|---|---|
| Intelligence Index (AA) | 49 (#8–10) | 57 (#2) | 53 (#4) |
| ClawEval (Agentic) | 61.5 (#3) | ~55 | 66.3 (#1) |
| Terminal-Bench 2.0 | 86.7 (#1) | 75.1 | 65.4 |
| SWE-Bench Verified | — | ~80% | 80.8% |
| SWE-Bench Pro | — | 57.7% | ~45.9% |
| OSWorld (Computer Use) | Not supported | 75.0% (#1) | 72.7% |
| Context Window | 1M tokens | 1M tokens | 200K tokens |
| Input Pricing | $1/M | $2.50/M | $5.00/M |
| Output Pricing | $3/M | $15/M | $25/M |
| Native Computer Use | No | Yes | Yes |
| Multimodal (text+image+video) | No | Yes | No |
| Agent Team Orchestration | 5 framework partners | OpenAI ecosystem | Agent SDK + Teams |
| Hallucination Rate | 30% | Lower | Lower |
| Open Source Option | MiMo V2 Flash | No | No |
| Best For | Agentic pipelines, CLI, cost-sensitive coding | General work, computer use, breadth | Deep reasoning, large codebases, writing quality |

Where MiMo V2 Pro Wins

Terminal execution is the decisive advantage. An 86.7 Terminal-Bench 2.0 score versus 75.1 for GPT-5.4 and 65.4 for Claude Opus 4.6 is not a marginal lead. For any workflow centered on CLI operations, command execution, DevOps automation, or live terminal agents, MiMo V2 Pro posts the highest published score as of March 2026.

Cost efficiency at scale is the structural advantage. Running agentic pipelines at $1/$3 per million tokens versus $5/$25 for Claude Opus 4.6 is a 5x difference on input and roughly 8.3x on output, and it compounds with every token processed.
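How large the overall multiple is depends on the input/output mix of a workload. The sketch below blends the list prices by the share of tokens that are input; the `input_share` values are hypothetical, and MiMo V2 Pro’s tier for contexts up to 256K is assumed.

```python
def blended_price(input_price: float, output_price: float, input_share: float) -> float:
    """Average price per 1M tokens when `input_share` of all tokens are input."""
    return input_price * input_share + output_price * (1.0 - input_share)


def opus_to_mimo_cost_ratio(input_share: float) -> float:
    """How many times more Claude Opus 4.6 costs than MiMo V2 Pro for a given mix."""
    opus = blended_price(5.00, 25.00, input_share)   # list prices from the table
    mimo = blended_price(1.00, 3.00, input_share)    # tier for contexts up to 256K
    return opus / mimo


# Hypothetical mixes, from input-only to output-only.
for share in (1.0, 0.9, 0.5, 0.0):
    print(f"input share {share:.0%}: {opus_to_mimo_cost_ratio(share):.2f}x")
```

The multiple ranges from 5x for input-only traffic to about 8.3x for output-only traffic, so a single headline figure only holds for one particular mix.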

1M-token context at low cost: Claude Opus 4.6 is capped at 200K tokens. For RAG systems processing large codebases, legal documents, or extended technical specifications, MiMo V2 Pro and GPT-5.4 both support 1M-token context, but MiMo V2 Pro’s list input price is 2.5x lower ($1.00 vs $2.50 per million tokens), and even its long-context tier above 256K ($2.00) sits below GPT-5.4’s list price.

Where GPT-5.4 Wins

Native computer use is GPT-5.4’s clearest differentiator. A 75% OSWorld-Verified score, surpassing human performance at 72.4%, makes it the strongest of the three models for reliable GUI automation, browser control, and cross-application workflows; Claude Opus 4.6 follows at 72.7%. MiMo V2 Pro does not compete here.

SWE-Bench Pro (57.7%) confirms GPT-5.4’s strength on novel, multi-language agentic coding problems that resist pattern memorization. For the hardest class of software engineering tasks, GPT-5.4 leads all available models.

Where Claude Opus 4.6 Wins

Deep reasoning and writing quality remain Claude’s domain. Human evaluators consistently rate Opus outputs higher on nuanced tasks, complex multi-file refactoring, and quality-critical long-form work. The Agent Teams feature — spawning multiple Opus instances coordinating through shared task lists — has no equivalent in either MiMo V2 Pro or GPT-5.4 ecosystems.

For a deeper comparison of the top frontier models, see our AI Reviews & Comparisons section and our article on how to evaluate AI tools for your specific use case.

Best Use Cases for MiMo V2 Pro in 2026

1. High-Volume Agentic Coding Pipelines

MiMo V2 Pro’s ClawEval score of 61.5 (#3 globally) and its official partnerships with OpenClaw, Cline, KiloCode, Blackbox, and OpenCode make it the most production-ready agentic coding model at this price point. Teams running thousands of agent calls per day will recover the evaluation cost within a day of production usage.

2. CLI and Terminal Automation

The #1 Terminal-Bench 2.0 score is a direct product of Xiaomi’s training methodology: the model was fine-tuned via SFT and RL across diverse agent scaffolds specifically targeting tool-call accuracy and multi-step CLI reasoning. For DevOps agents, build system automation, and infrastructure management workflows, no current model is more reliably tested in this specific environment.

3. Long-Context Document Processing

With a confirmed 1,048,576-token context window and a 7:1 hybrid attention ratio that makes long-context retrieval computationally viable, MiMo V2 Pro handles large technical documentation, extended codebases, and long-document RAG systems in a single pass, at $1.00 to $2.00 per million input tokens depending on the context tier, against GPT-5.4’s $2.50 list price.

4. Frontend Code Generation at Scale

During the Hunter Alpha stealth phase, coding-focused tools dominated the top use cases by API call volume. Xiaomi’s own data shows MiMo V2 Pro generating polished, functional web pages within OpenClaw in a single query with strong end-to-end completion. For agencies and teams producing high volumes of frontend code, this is the category to test first.

5. Budget-Constrained Startups and Indie Developers

Any developer or team building AI-powered applications who needs near-frontier LLM reasoning at a price point that does not require venture funding. At $1/$3 per million tokens, MiMo V2 Pro fills the gap between cheap but weak smaller models and expensive but powerful frontier options. That gap has been the biggest pricing problem in AI infrastructure since 2024.

For more on building cost-effective AI automation workflows, see our guides on WhatsApp AI Agents and Enterprise AI Agent Deployment.

How to Access MiMo V2 Pro Right Now

Option 1 — Xiaomi Official API (Recommended for Production)

Direct first-party API access at platform.xiaomimimo.com. Full pricing tiers apply: $1/$3 for contexts up to 256K and $2/$6 for 256K–1M. Best for production deployments requiring SLA guarantees and direct vendor support.

Option 2 — OpenRouter (Fastest to Integrate)

MiMo V2 Pro is available at openrouter.ai/xiaomi/mimo-v2-pro with the full 1,048,576-token context and 131,072-token output limit. Best for teams already using a unified API layer across multiple models, or for rapid evaluation without new account setup. This is the route through which developers ran more than 1 trillion tokens through Hunter Alpha.
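A minimal sketch of a call through OpenRouter’s OpenAI-compatible chat completions endpoint, using only the Python standard library. The model slug is taken from the OpenRouter path above; the helper names, the `max_tokens` default, and the `OPENROUTER_API_KEY` environment variable are illustrative assumptions, so confirm the exact slug and parameters on the model page before use.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_SLUG = "xiaomi/mimo-v2-pro"  # assumption: slug copied from the path in the text


def build_chat_request(api_key: str, prompt: str, max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) a single-turn chat completion request."""
    payload = {
        "model": MODEL_SLUG,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Usage (requires a real key, for example read from OPENROUTER_API_KEY):
#   import os
#   request = build_chat_request(os.environ["OPENROUTER_API_KEY"], "List three uses of awk.")
#   print(send(request))
```

Keeping request construction separate from sending makes the payload easy to inspect or log before any tokens are billed.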

Option 3 — Free Access via Agent Frameworks

Xiaomi is offering one week of free API access through five official partners. If you are building with any of the following, you can access MiMo V2 Pro at no cost during the launch period:

- OpenClaw

- Cline

- KiloCode

- Blackbox AI

- OpenCode

Check each framework’s documentation for the free access redemption process.

Option 4 — MiMo Studio (No-Code Interface)

For web-based access without API integration, MiMo Studio provides a direct interface to the full MiMo V2 family. Best for evaluation and non-production use cases.

For context on how to evaluate and integrate new AI models effectively into your existing stack, see our WebMCP Tutorial and our GEO Optimization guide.

Final Verdict: Is MiMo V2 Pro Worth Using in 2026?

MiMo V2 Pro is the most significant pricing disruption in frontier AI since DeepSeek R1 — and that comparison is not accidental, given who built it.

A model ranking 3rd globally in agentic benchmarks (ClawEval), #1 in terminal execution (Terminal-Bench 2.0), with a 1M-token context window, at $1 per million input tokens is a new category. It does not need to beat Claude Opus 4.6 or GPT-5.4 overall. It needs to be good enough for specific high-volume use cases at a price where those flagships are economically irrational to deploy.

The Hunter Alpha launch confirmed this with real data. The AI community did not adopt it because it was new or free — they adopted it because it worked. 1 trillion tokens in one week, before anyone even knew the name.

Use MiMo V2 Pro if you need:

- High-volume agentic coding pipelines where Claude/GPT-5.4 pricing is a constraint

- CLI and terminal automation at the highest benchmark performance currently available

- 1M-token context at a fraction of GPT-5.4 pricing

- Integration with OpenClaw, Cline, KiloCode, Blackbox, or OpenCode ecosystems

- Near-frontier LLM reasoning at startup-viable pricing

Stay with Claude Opus 4.6 or GPT-5.4 if you need:

- Native computer use and GUI automation (GPT-5.4 leads on OSWorld; Claude Opus 4.6 also supports it)

- The deepest writing quality and nuanced reasoning for content-critical tasks

- Multimodal input within a single model (GPT-5.4)

- Agent Teams orchestration for complex parallel workflows (Claude Opus 4.6)

- The lowest hallucination rates for factual precision tasks

The honest conclusion: MiMo V2 Pro will not replace Claude Opus 4.6 or GPT-5.4 as the default choice for most knowledge workers. What it will replace is the expensive use of those models for high-volume, agentic, and terminal-based workflows where they were always overkill on price. That is a large and underserved market — and as of March 18, 2026, Xiaomi owns it.

PrimeAIcenter Rating: 4.4/5 — Best-in-class for agentic pipelines and terminal automation at this price point. Meaningful gaps in computer use and hallucination rate prevent a higher score against frontier models.

FAQs: MiMo V2 Pro

What is MiMo V2 Pro?

MiMo V2 Pro is Xiaomi’s flagship AI model, released March 18, 2026. It is a trillion-parameter, closed-weight LLM built for agentic workloads, coding, and long-context tasks. Previously tested anonymously as Hunter Alpha on OpenRouter, it processed over 1 trillion developer tokens before its official reveal.

Is Hunter Alpha the same as MiMo V2 Pro?

Yes. Xiaomi confirmed on March 18, 2026 that Hunter Alpha was an early internal test build of MiMo V2 Pro. It appeared on OpenRouter in early March 2026 as a stealth deployment to validate performance under real-world conditions before the official product launch.

How much does MiMo V2 Pro cost?

MiMo V2 Pro costs $1.00 per million input tokens and $3.00 per million output tokens for contexts up to 256K. For the full 256K–1M context range, pricing is $2.00 input / $6.00 output per million tokens. Free developer access is available for one week through OpenClaw, Cline, KiloCode, Blackbox, and OpenCode.

Is MiMo V2 Pro open source?

No. MiMo V2 Pro is a closed-weight model. MiMo V2 Flash is the open-source release from the same family. Fuli Luo has indicated plans to release an open-source model variant when the Pro is stable enough.

How does MiMo V2 Pro compare to GPT-5.4?

GPT-5.4 scores higher on the Intelligence Index (57 vs 49), posts 57.7% on SWE-Bench Pro (no MiMo V2 Pro result is published), and supports native computer use (75% OSWorld), which MiMo V2 Pro does not. MiMo V2 Pro leads on Terminal-Bench 2.0 (86.7 vs 75.1) and ClawEval agentic benchmarks (61.5 vs ~55), while costing 2.5x less per input token.

What is MiMo V2 Pro’s context window?

MiMo V2 Pro supports a 1,048,576-token context window (approximately 1 million tokens), with a maximum output of 131,072 tokens. Higher pricing applies for contexts exceeding 256K tokens.

Can I try MiMo V2 Pro for free?

Yes. Free developer access for one week is available through five official agent framework partners: OpenClaw, OpenCode, KiloCode, Blackbox, and Cline. Web-based access is also available via MiMo Studio at mimo.xiaomi.com.

Is MiMo V2 Pro good for content creation?

MiMo V2 Pro was architected for agentic and coding tasks. For content creation, long-form writing, and creative work, Claude Opus 4.6 remains the stronger choice. See our AI Content Creation reviews for the best tools in that category.

Who leads the MiMo AI team at Xiaomi?

The MiMo division is led by Fuli Luo, a former core contributor to DeepSeek R1 and the V-series models. Her move to Xiaomi in late 2025 is the primary reason for the architectural quality and rapid capability development seen in the MiMo V2 family.

What is MiMo V2 Omni?

MiMo V2 Omni is the multimodal model released alongside MiMo V2 Pro on March 18, 2026. It handles text, image, video, and over 10 hours of continuous audio in a unified architecture. It was tested anonymously as Healer Alpha on OpenRouter prior to the official launch. Pricing is $0.40 input / $2.00 output per million tokens.

Where can I access MiMo V2 Pro?

MiMo V2 Pro is accessible via Xiaomi’s official API platform at platform.xiaomimimo.com, through OpenRouter, and via the MiMo Studio web interface at mimo.xiaomi.com.