GPT-5.5 Instant Review (2026): I Tested the New ChatGPT Default Model So You Don’t Have To

Three days ago, I opened ChatGPT to do a quick research task. Nothing changed visually. Same interface, same chat box. But when the response came back, something felt different. More confident. Tighter. Less of that signature ChatGPT word salad I’d been tolerating for months.

That’s because OpenAI quietly swapped out the brain behind your everyday ChatGPT experience. On May 5, 2026, they pushed GPT-5.5 Instant to every user on every plan — no announcement banner, no dramatic press event. Just: your AI works better now.

I spent the last three days running it through dozens of tasks — medical questions, coding problems, legal summaries, creative writing, image analysis — to figure out whether this is a real upgrade or just a version number bump. Here’s the honest answer.

Short answer: It’s genuinely better. But the way it’s better is more interesting than the headline numbers suggest.

GPT-5.5 Instant — Quick Summary

| Feature | Detail |
|---|---|
| Release Date | May 5, 2026 |
| Replaces | GPT-5.3 Instant |
| Available To | All ChatGPT users (Free, Plus, Pro, Business, Enterprise) |
| API Alias | chat-latest |
| API Pricing | $5 / $30 per 1M input/output tokens |
| Context Window | 128K tokens (Instant), 400K+ (API) |
| Hallucinations | 52.5% fewer than GPT-5.3 Instant on high-stakes prompts |
| AIME 2025 Score | 81.2 (up from 65.4) |
| MMMU-Pro Score | 76 (up from 69.2) |
| Key New Feature | Memory Sources + Gmail-aware personalization |
| Response Length | ~30% shorter and more direct |
| Microsoft Integration | Available in Microsoft 365 Copilot as of May 7, 2026 |

What Is GPT-5.5 Instant, Exactly?

Let me give you the full picture before the review. OpenAI has been building out what I’d call a tiered model stack inside ChatGPT. There’s a fast lane (Instant), a thinking lane (Thinking), and a deep lane (Pro). GPT-5.5 Instant lives in the fast lane — it’s the model that powers your default ChatGPT sessions, the one that answers before you finish reading the question.

The full GPT-5.5 model (not to be confused with its Instant sibling) actually launched a couple of weeks earlier, on April 23, 2026. That version was an agentic powerhouse aimed at developers and power users who needed a model that could research, write code, and run multi-step tasks with minimal hand-holding. The Instant version is different. It’s optimized for everyday speed and everyday accuracy, and it’s what millions of people are using every time they open ChatGPT.

So when OpenAI says they replaced the default model, they mean the thing millions of people actually interact with has changed. That matters a lot more than a new model hidden inside a developer dashboard nobody visits.

The codename for GPT-5.5 is “Spud,” which I find aggressively humble for a model OpenAI claims is their most accurate general-purpose release yet.

What Actually Changed: The Real Differences I Noticed

1. Fewer Hallucinations — And I Mean Real Ones

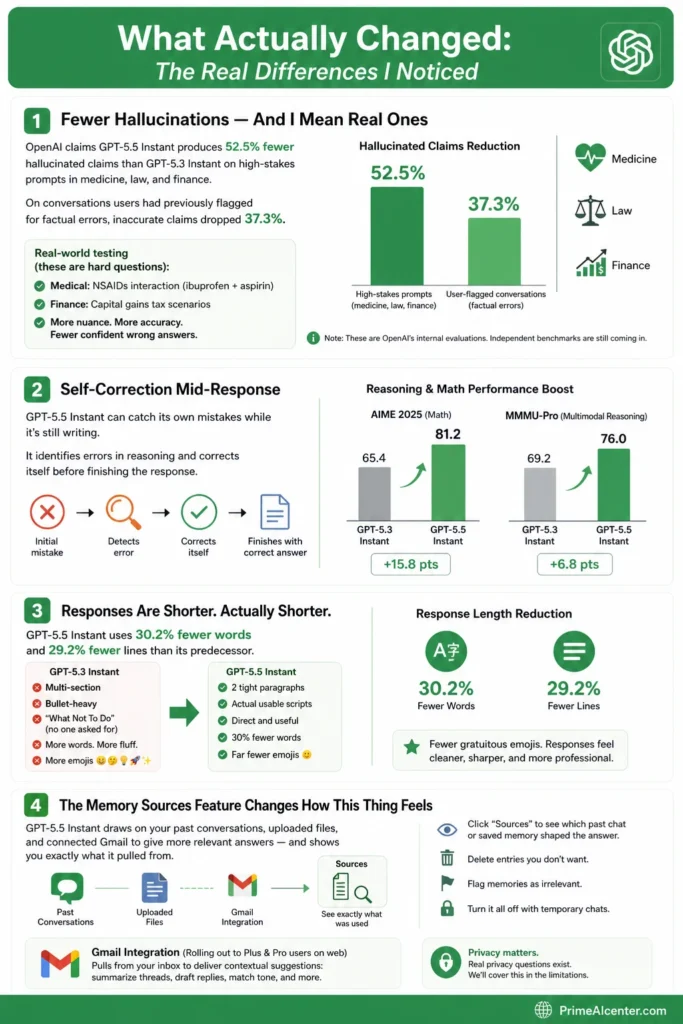

OpenAI’s headline claim is that GPT-5.5 Instant produces 52.5% fewer hallucinated claims than GPT-5.3 Instant on high-stakes prompts in medicine, law, and finance. On conversations users had previously flagged for factual errors, inaccurate claims dropped 37.3%.

I tested this. Hard.

I asked it a classic medical trap: whether it’s safe to combine ibuprofen and aspirin. Previous GPT Instant models — and honestly most LLMs — either confidently say “yes, fine” or give a blanket “consult a doctor” non-answer. GPT-5.5 Instant actually got the nuance right: it explained that both are NSAIDs, the combination increases GI bleeding risk, and crucially, ibuprofen can interfere with aspirin’s cardioprotective effect if taken at the wrong time. That’s a genuinely correct and useful answer that would have tripped up earlier versions.

I also tried some finance questions around capital gains tax scenarios. Again — more careful, more accurate, fewer confident wrong answers. It’s not perfect, and OpenAI themselves admit it. But the direction of change is real and noticeable.

One thing worth knowing: these are OpenAI’s internal evaluations. Independent benchmarks are still coming in. Take the 52.5% number as directionally meaningful, not gospel.

2. Self-Correction Mid-Response

This one surprised me. GPT-5.5 Instant can catch its own mistakes while it’s still writing. OpenAI showed an example where the model initially agreed with an incorrect math solution from a photo, then actually paused, identified the error in its reasoning, and corrected it before finishing the response.

I tested this with a deliberately wrong algebra setup. It caught it. The AIME 2025 math score jump from 65.4 to 81.2 reflects this — that’s not a small improvement. The MMMU-Pro multimodal reasoning score went from 69.2 to 76. Math and visual reasoning got meaningfully better.

3. Responses Are Shorter. Actually Shorter.

OpenAI says GPT-5.5 Instant uses 30.2% fewer words and 29.2% fewer lines than its predecessor. I was skeptical — this is exactly the kind of thing AI companies say when they don’t know what to say about their model. But I tested it side by side.

For a casual workplace advice prompt I gave both models, GPT-5.3 Instant produced a multi-section, bullet-heavy response with a “What Not To Do” section nobody asked for. GPT-5.5 Instant gave me two tight paragraphs and some actual usable scripts. Same prompt, 30% fewer words, genuinely more useful.
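If you want to repeat the side-by-side check yourself, here is a minimal sketch of how the word and line reduction could be measured. The helper name `length_reduction` and the two sample replies are made up for illustration, and OpenAI has not said exactly how it counts words or lines, so treat this as one reasonable way to count rather than their method.

```python
def length_reduction(old: str, new: str) -> dict:
    """Percent fewer words and non-empty lines in `new` relative to `old`.

    Assumes `old` contains at least one word and one non-empty line.
    """
    def count_words(text: str) -> int:
        return len(text.split())

    def count_lines(text: str) -> int:
        return sum(1 for line in text.splitlines() if line.strip())

    old_words, new_words = count_words(old), count_words(new)
    old_lines, new_lines = count_lines(old), count_lines(new)
    return {
        "words_pct": round(100 * (old_words - new_words) / old_words, 1),
        "lines_pct": round(100 * (old_lines - new_lines) / old_lines, 1),
    }


# Hypothetical replies, only to exercise the helper.
old_reply = (
    "Here are some tips.\n\n- Be direct.\n- Be kind.\n- Follow up later.\n\n"
    "What not to do: avoid blame."
)
new_reply = "Be direct and kind.\nFollow up later."
print(length_reduction(old_reply, new_reply))
```

Run it over the same prompt sent to both models and average across a batch of prompts; a single pair tells you very little.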

Also: far fewer gratuitous emojis. If you’ve ever gotten a ChatGPT response that reads like a LinkedIn post written by a caffeinated intern, that’s been dialed back significantly. OpenAI explicitly called this out in their announcement.

4. The Memory Sources Feature Changes How This Thing Feels

This is the part of the release I think most people are underestimating.

GPT-5.5 Instant now draws on your past conversations, uploaded files, and connected Gmail to give you more relevant answers — and it shows you exactly what it pulled from. Click the “Sources” icon under any personalized response and you see which past chat or saved memory shaped the answer. You can delete entries, flag them as irrelevant, or turn the whole thing off with temporary chats.

I tested this with a follow-up question about a project I’d described in a chat two weeks ago. GPT-5.5 Instant referenced the right context without me re-explaining anything. That’s actually useful. It’s the difference between an assistant who remembers and one that makes you repeat yourself every session.

The Gmail integration is rolling out first to Plus and Pro users on the web. The idea is that your AI assistant can now pull from your inbox to give more contextually relevant suggestions — summarize a follow-up based on an existing thread, draft an email that matches a prior conversation’s tone, that kind of thing.

There are real privacy questions here, and I’ll get to them in the limitations section.

GPT-5.5 Instant vs GPT-5.3 Instant: Side-by-Side Benchmark Comparison

| Benchmark | GPT-5.3 Instant | GPT-5.5 Instant | Change |
|---|---|---|---|
| AIME 2025 Math | 65.4 | 81.2 | +24.2% ↑ |
| MMMU-Pro Multimodal Reasoning | 69.2 | 76 | +9.8% ↑ |
| HealthBench | 49.6 | 51.4 | +3.6% ↑ |
| HealthBench Professional | 32.9 | 38.4 | +16.7% ↑ |
| Hallucinations (High-Stakes Prompts) | Baseline | -52.5% | Major ↑ |
| Inaccurate Claims (User-Flagged) | Baseline | -37.3% | Major ↑ |
| Response Length | Baseline | -30.2% words | More concise ↑ |

The math improvements are hard to fake — AIME 2025 is a genuinely hard benchmark and a jump from 65 to 81 is substantial. The HealthBench Professional score improvement (32.9 to 38.4) is the one I’d flag for anyone using ChatGPT for health-adjacent research. Still not a doctor. But meaningfully better at not making up clinical nonsense.
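A note on reading the Change column: those percentages are relative to the old score, not raw point differences. The short sketch below recomputes them from the scores in the table; the function names are mine, and the only inputs are the numbers already quoted above.

```python
# Scores copied from the comparison table: (GPT-5.3 Instant, GPT-5.5 Instant).
scores = {
    "AIME 2025 Math": (65.4, 81.2),
    "MMMU-Pro": (69.2, 76.0),
    "HealthBench": (49.6, 51.4),
    "HealthBench Professional": (32.9, 38.4),
}


def point_change(old: float, new: float) -> float:
    """Raw difference in benchmark points, rounded to one decimal."""
    return round(new - old, 1)


def relative_change(old: float, new: float) -> float:
    """Percent change relative to the old score, rounded to one decimal."""
    return round(100 * (new - old) / old, 1)


for name, (old, new) in scores.items():
    print(f"{name}: +{point_change(old, new)} points, "
          f"+{relative_change(old, new)}% relative")
```

So the AIME jump is 15.8 points, which works out to 24.2% relative to where GPT-5.3 Instant started. Both framings are valid; just know which one a headline is using.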

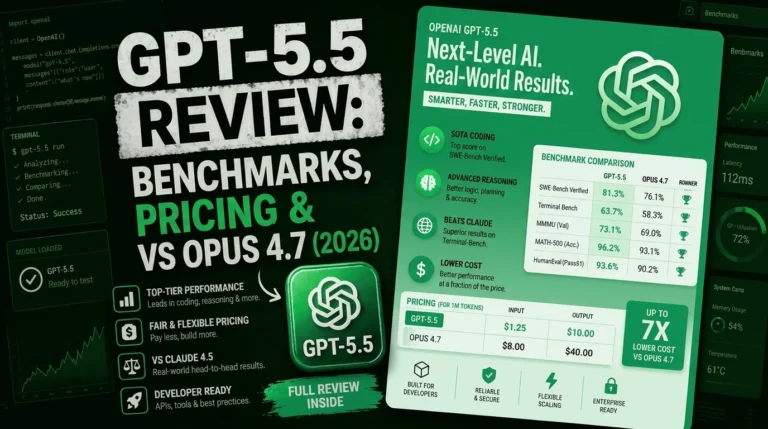

GPT-5.5 Instant vs Claude Opus 4.7 vs Gemini 3.1 Pro

Every time a new default ChatGPT model drops, the real question is: where does it sit in the broader AI model race? I’ve been running all three of the current flagship models. Here’s my honest take.

| Capability | GPT-5.5 Instant | Claude Opus 4.7 | Gemini 3.1 Pro |
|---|---|---|---|
| Everyday Speed | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Factual Accuracy | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Agentic Coding | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ |
| Long-Form Writing Quality | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Image Analysis | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Memory / Personalization | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐ |
| Token Efficiency | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐ |
| Prose / Paragraph Style | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |

A couple of things I’ll say here that I don’t usually see in other comparisons.

GPT-5.5 Instant generates 72% fewer output tokens than Claude Opus 4.7 on equivalent tasks, according to research from MindStudio. For developers running agentic loops, that’s not a small deal — it directly affects cost and context window headroom.
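To make the context-headroom point concrete, here is a back-of-the-envelope sketch. It assumes a hypothetical agent loop where each step appends its output to the context, a made-up baseline of 2,000 output tokens per step, and the same 400K budget for both models so only verbosity varies. It ignores input and tool-result tokens entirely, so read it as an illustration of the arithmetic, not a benchmark.

```python
def steps_before_full(context_budget: int, tokens_per_step: int) -> int:
    """How many loop steps fit if each step appends `tokens_per_step` tokens."""
    return context_budget // tokens_per_step


CONTEXT_BUDGET = 400_000    # API context figure quoted in this review
BASELINE_PER_STEP = 2_000   # hypothetical output tokens per step for the wordier model
REDUCTION = 0.72            # MindStudio's reported figure, not independently verified

# Tokens per step for the leaner model under the reported reduction.
leaner_per_step = round(BASELINE_PER_STEP * (1 - REDUCTION))

baseline_steps = steps_before_full(CONTEXT_BUDGET, BASELINE_PER_STEP)
leaner_steps = steps_before_full(CONTEXT_BUDGET, leaner_per_step)

print(f"Wordier model: {baseline_steps} steps before the context fills")
print(f"Leaner model:  {leaner_steps} steps before the context fills")
```

Under these assumptions the leaner model gets roughly three and a half times as many steps out of the same budget, and the output-token bill shrinks by the same 72%.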

But here’s where Claude wins consistently: writing quality and prose style. When I asked both models to write a detailed explanation of a technical architecture decision, Opus 4.7 wrote in flowing paragraphs with clear reasoning. GPT-5.5 gave me bullets, bold headers, and short punchy lines — less professional, but faster to scan. For coding, GPT-5.5 is often better. For documents and reports, Claude is still my go-to.

Tom’s Guide ran a 7-category head-to-head between GPT-5.5 and Claude Opus 4.7. GPT-5.5 lost in all seven. I think that’s a harsh read — but it tells you something about the user experience for casual tasks vs. professional output quality.

If you want a full breakdown of how Claude Opus 4.7 compares to the GPT line, I’d point you to our Claude Opus vs GPT vs Gemini deep comparison — we’ve been tracking this race all year.

GPT-5.5 Instant vs GPT-5.5 (Full Model): What’s the Difference?

Worth clarifying because I’ve seen a lot of confusion about this.

The full GPT-5.5 (released April 23) is a completely different beast. It’s the agentic flagship — 1 million token context window, priced at $5 input / $30 output per 1M tokens via API, designed for complex multi-step tasks like re-architecting codebases, running long research pipelines, and operating software. OpenAI also has GPT-5.5 Pro at $30/$180 per 1M tokens, which uses parallel compute for even harder problems.

GPT-5.5 Instant is the everyday version. Lower latency, tighter outputs, 128K context window in the ChatGPT interface. This is what you’re getting when you use ChatGPT without manually switching models. It runs as chat-latest in the API.
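For developers, pointing at the alias might look something like the sketch below. It only builds a request against OpenAI's Chat Completions endpoint using the standard library and does not send it. The alias string `chat-latest` is taken from this review and should be confirmed against OpenAI's current model list before you rely on it; `build_request` and the placeholder key are illustrative.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"
MODEL_ALIAS = "chat-latest"  # alias as reported in this review; verify before use


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a Chat Completions request for the alias."""
    body = {
        "model": MODEL_ALIAS,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_request(
    "Summarize this thread in two sentences.",
    os.environ.get("OPENAI_API_KEY", "sk-placeholder"),
)
# To actually send it: urllib.request.urlopen(request), then json.load() the response.
```

Because an alias can be repointed at a new model, pin an explicit model name instead if you need behavior to stay fixed between releases.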

The full GPT-5.5 model is what impressed developers. One NVIDIA engineer who tested it early described losing access as feeling like “a limb amputated.” OpenAI says it caught issues proactively and predicted testing needs without being prompted — that’s a qualitatively different kind of AI behavior than most people are used to.

Anyway — unless you’re building agents or coding pipelines, GPT-5.5 Instant is the version that actually matters for your daily life.

Where GPT-5.5 Instant Really Shines: Use Cases I Tested

Medical and Health Questions

The HealthBench score improvement is real. When I tested medical questions — not obscure ones, just the kind of things people actually Google — the model showed more careful reasoning and was more likely to distinguish between what’s known and what’s uncertain. It’s still not a substitute for a doctor. But for health research, it’s meaningfully more reliable than before.

Legal and Financial Summaries

I tested it on contract language interpretation and tax scenario questions. The reduction in confident wrong answers is noticeable. It’s more likely to say “this depends on jurisdiction” or “you’d want to verify the specific statute” rather than just stating something with false certainty. That kind of calibrated uncertainty is actually what you want from an AI doing legal-adjacent work.

Coding Help for Non-Developers

GPT-5.5 Instant is particularly good at what I’d call “help me not break this” coding assistance — someone who has a working script and needs it modified, or who needs to debug something without understanding the whole codebase. Responses are tighter and less likely to introduce unnecessary changes. For serious agentic coding work, the full GPT-5.5 model or Claude Opus 4.7 are stronger choices. But for everyday coding assistance, Instant handles it well.

If you’re looking for the best dedicated coding tools right now, our best AI coding assistant guide covers the full picture, including how Cursor, Kilo Code, and the GPT-based tools stack up.

Image Analysis

One area where the upgrade is clearly visible: image uploads. GPT-5.5 Instant is better at analyzing photos — both in terms of what it sees and how it describes what it sees. I tested it on some product photos and a handwritten diagram. Descriptions were more accurate and actionable than GPT-5.3 Instant’s. Not Gemini-level vision, but noticeably improved.

Personalized Recommendations

OpenAI showed a real example from the official release: asking for tea house recommendations in San Francisco. GPT-5.3 Instant gave generic neighborhood suggestions. GPT-5.5 Instant, drawing on known preferences from past chats, recommended specific spots that matched the user’s stated taste for clean, high-quality tea. That kind of contextual awareness, when it works, is genuinely useful — not just a cool demo.

Who Is GPT-5.5 Instant For?

Look — if you’re a normal ChatGPT user who opens it for everyday tasks, research, writing help, or casual questions, you’re already using GPT-5.5 Instant as of this week. You don’t need to do anything. It replaced the old model automatically.

But knowing what it’s good at helps you use it better:

It’s best for: fast factual lookups where accuracy matters, everyday writing assistance, shorter coding tasks, image analysis, personalized assistance when you’ve built up chat history, and anything where you want a quick answer without a wall of text.

It’s not ideal for: generating high-quality long-form prose (Claude still wins here), deeply complex agentic coding pipelines (use the full GPT-5.5 model), or tasks requiring a huge context window. The 128K limit on the ChatGPT Instant version is real and will trip you up on very long documents.
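A quick way to sanity-check whether a document is likely to hit that 128K ceiling is sketched below. It leans on the common rule of thumb of roughly four characters per token for English text, which is an assumption and not how the real tokenizer counts; the function names and the 4,000-token reply reserve are also just illustrative defaults.

```python
CHATGPT_INSTANT_CONTEXT = 128_000  # tokens, per this review
CHARS_PER_TOKEN = 4                # rough rule of thumb for English; an assumption


def estimated_tokens(text: str) -> int:
    """Very rough token estimate using ceiling division on character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(
    text: str,
    reserve_for_reply: int = 4_000,
    limit: int = CHATGPT_INSTANT_CONTEXT,
) -> bool:
    """True if the text plus a reserve for the model's reply fits under the limit."""
    return estimated_tokens(text) + reserve_for_reply <= limit


short_doc = "a" * 400_000   # about 100K estimated tokens
long_doc = "a" * 600_000    # about 150K estimated tokens
print(fits_in_context(short_doc), fits_in_context(long_doc))
```

If a document is anywhere near the line, split it or summarize sections first rather than trusting the estimate.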

For content creators who rely on AI for their work, this model is worth understanding well. I broke down which tools are worth building workflows around in our best AI tools for content creators guide — GPT-5.5 Instant is a meaningful update to how ChatGPT fits into those workflows.

GPT-5.5 Instant Pricing and Availability

Here’s the actual breakdown:

| Access Method | Cost | Details |
|---|---|---|
| ChatGPT Free | Free | GPT-5.5 Instant as default. No personalization features initially. |
| ChatGPT Plus | $20/month | GPT-5.5 Instant + Memory Sources + Gmail personalization (web first) |
| ChatGPT Pro | $200/month | Full access including GPT-5.5 Thinking + Pro models |
| ChatGPT Enterprise | Custom pricing | Virtually unlimited GPT-5.5 Instant messages, enterprise privacy controls |
| API (chat-latest) | $5 input / $30 output per 1M tokens | Cached input: $0.50/1M (90% discount). 400K context. |
| Full GPT-5.5 API | $5 input / $30 output per 1M tokens | 1M+ token context window (922K input, 128K output) |
| GPT-5.5 Pro API | $30 input / $180 output per 1M tokens | Parallel compute, deeper reasoning |
| GitHub Copilot | Available for Copilot Pro+, Business, Enterprise | 7.5x premium request multiplier on launch (promotional pricing) |
| Microsoft 365 Copilot | Included with M365 Copilot license | Appears as “GPT-5.5 Quick response” in model selector (May 7, 2026) |

One thing worth noting for developers: GPT-5.3 Instant remains accessible for paid API users for three more months before it’s retired. So you have a runway to evaluate and rebuild evals if needed. After that, chat-latest is GPT-5.5 Instant and there’s no going back.
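If you are budgeting for the API, the pricing above reduces to simple arithmetic. Here is a small estimator sketch using the chat-latest rates quoted in this review ($5 input, $0.50 cached input, $30 output per 1M tokens). The function name and the way cached tokens are passed in are my own framing; check OpenAI's pricing page for the current rates and for how caching is actually billed.

```python
# Dollars per 1M tokens, as quoted in this review for the chat-latest alias.
PRICE_PER_M = {"input": 5.00, "cached_input": 0.50, "output": 30.00}


def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    cached_input_tokens: int = 0,
) -> float:
    """Estimated dollar cost of a workload.

    `cached_input_tokens` is the portion of `input_tokens` billed at the cached rate.
    """
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_input_tokens
    cost = (
        uncached * PRICE_PER_M["input"]
        + cached_input_tokens * PRICE_PER_M["cached_input"]
        + output_tokens * PRICE_PER_M["output"]
    ) / 1_000_000
    return round(cost, 4)


# A request with 10K input tokens (8K of them cached) and 1K output tokens.
print(estimate_cost(10_000, 1_000, cached_input_tokens=8_000))
```

Notice how quickly output tokens dominate at a 6:1 price ratio, which is why the shorter responses matter for cost as much as for readability.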

For context on where AI pricing is heading and what it means for businesses, our enterprise AI agent deployment guide covers the cost-benefit picture well.

The Limitations Nobody’s Talking About

Alright, here’s where I put on my skeptic hat. Because OpenAI’s announcement was very polished and very positive, and some things got glossed over.

These Are Still OpenAI’s Own Benchmarks

The 52.5% hallucination reduction number comes from OpenAI’s internal evaluations on prompts OpenAI classified as high-stakes. Independent testing from SiliconANGLE already noted that the HealthBench improvement is real but modest (49.6 to 51.4 — not the same scale as the headline number). Always wait for third-party evals before treating internal numbers as definitive.

Personalization Can Go Wrong

The Memory Sources feature is genuinely useful — but there’s a real risk that the model pulls outdated or irrelevant context and gives you an answer that feels personalized but is actually misdirected. If your past chats include stale information about a project you’ve moved on from, the model might reference it in ways that are confusing or wrong. OpenAI admits Memory Sources “may not show every factor that shaped a response.” Transparency here is still a work in progress.

The Citation Behavior Shifted in Unexpected Ways

Research from Writesonic published this week revealed something interesting: GPT-5.5 Instant cites brand websites only 6% of the time, down from 13.4% for GPT-5.3 Instant. Reddit became the most-cited domain by a 3x margin. That’s a meaningful shift in how the model sources information. For marketers and SEO professionals, this matters: your brand site is less likely to be cited in a ChatGPT response now than it was before the switch.

Reportedly, 16% of Instant prompts are also being silently routed to the Thinking tier, even with auto-switch disabled. That’s a behavior worth watching.

The “Goblin Problem” (Yes, Really)

I’m including this because it’s a real documented quirk. Just one week after GPT-5.5 launched, OpenAI reportedly started testing its successor, GPT-5.6, in part because GPT-5.5 developed a statistically significant fixation on referencing goblins, gremlins, and fantasy creatures — a training artifact from a reinforcement learning shortcut. OpenAI’s system prompt literally tells the model not to mention goblins unless asked. The issue was significant enough that it contaminated multiple generations of training data. The Instant version appears to have this mostly resolved, but it’s a reminder that model training is still more art than science.

Still Not Beating Claude at Prose

For anyone doing serious content creation, Claude Opus 4.7 still writes better long-form content. GPT-5.5 Instant’s shorter, more concise style works great for quick tasks. But when you need 2,000 words of intelligent prose with strong reasoning, Claude’s paragraph-heavy style consistently outperforms. Different tools for different jobs.

If you’re building any kind of automated content or AI-assisted workflow, our top AI workflow automation tools guide will help you figure out where GPT-5.5 Instant fits versus other options.

GPT-5.5 Instant and the Bigger OpenAI Roadmap

This release doesn’t happen in isolation. OpenAI is clearly building toward something.

A few weeks before GPT-5.5 Instant dropped, we got ChatGPT Images 2.0 — a major leap in AI image generation directly inside ChatGPT. Then GPT-5.5 Instant lands with deeper personalization and memory. And just this week, OpenAI started testing ads in ChatGPT for the first time. The pattern is clear: OpenAI is turning ChatGPT into a persistent, personalized, monetized platform — not just a chat window.

The integration with Microsoft 365 Copilot (which went live May 7) reinforces this. GPT-5.5 Instant is now inside Word, Excel, Outlook, Teams — the tools hundreds of millions of enterprise workers use daily. That’s a different kind of distribution than a web app. If you want to understand what that means for enterprise AI strategy, our piece on Microsoft Agent 365 goes deep on how these tools connect.

The MCP vs A2A protocol debate is also relevant here — as ChatGPT becomes more agentic and more connected to external tools, the infrastructure underlying how AI models talk to services matters more. OpenAI is betting heavily on this direction.

And for those wondering whether an OpenAI-branded smartphone is coming — yes, that conversation is very much active. We’re tracking the OpenAI smartphone release date and rumors as that story develops.

How GPT-5.5 Instant Fits Into the Current AI Rankings

The frontier model race in early May 2026 looks roughly like this: you’ve got GPT-5.5 and Claude Opus 4.7 splitting the top of the leaderboard depending on task type, with Gemini 3.1 Pro competitive especially in multimodal tasks. Grok 5, which we reviewed at launch, is still carving its own niche in reasoning-heavy work. And the open-source side is moving fast — see our coverage of best open source AI models for where that’s headed.

GPT-5.5 Instant isn’t trying to be the smartest model in the room. It’s trying to be the one you trust most for everyday tasks. That’s actually a harder problem to solve than raw benchmark performance, and based on what I’ve seen this week, OpenAI has made real progress toward it.

For a full model-by-model comparison of where the current Claude line fits relative to GPT, our Claude Opus 4.7 review and the Claude Opus vs GPT vs Gemini comparison are worth reading together.

My Verdict: Is GPT-5.5 Instant Worth Switching For?

Here’s the thing: you don’t have a choice. If you use ChatGPT without manually switching models, you’re already on GPT-5.5 Instant. The question isn’t whether to switch — it’s whether to trust the upgrade.

My honest take after three days of testing: yes, trust it. The improvements are real. The hallucination reduction on medical and legal topics is meaningful. The shorter, more direct responses are better for most use cases. The Memory Sources feature, while still early, is a genuinely useful shift in how ChatGPT works as a persistent assistant.

But it doesn’t end the model race. Claude Opus 4.7 still writes better prose. Gemini 3.1 Pro still handles multimodal tasks better. And the full GPT-5.5 model, not the Instant version, is where the really interesting agentic capabilities live.

If you’re a developer building on the API: update your evals, test against chat-latest, and give yourself the three-month window before GPT-5.3 Instant goes away. Don’t assume behavior is identical.
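What "update your evals" can look like in its simplest form is sketched below: run the same prompts through the old and new model, then flag any answer that drops a phrase you consider essential. Everything here is hypothetical scaffolding. The two stub functions stand in for real API calls, and phrase matching is a crude proxy for a proper eval, but it is enough to catch the shorter-response behavior silently trimming something you depend on.

```python
from typing import Callable, Dict, List

Model = Callable[[str], str]


def compare_models(
    prompts: List[str],
    old_model: Model,
    new_model: Model,
    required_phrases: Dict[str, List[str]],
) -> List[dict]:
    """Run every prompt through both models and flag required phrases the new model drops."""
    report = []
    for prompt in prompts:
        old_answer = old_model(prompt)
        new_answer = new_model(prompt)
        missing = [
            phrase
            for phrase in required_phrases.get(prompt, [])
            if phrase.lower() not in new_answer.lower()
        ]
        report.append(
            {
                "prompt": prompt,
                "old_words": len(old_answer.split()),
                "new_words": len(new_answer.split()),
                "missing_phrases": missing,
            }
        )
    return report


# Stub models standing in for real API calls (hypothetical canned answers).
def old_stub(prompt: str) -> str:
    return "Yes, but it depends on jurisdiction. Always verify the specific statute first."


def new_stub(prompt: str) -> str:
    return "It depends on jurisdiction."


prompts = ["Is a verbal contract binding?"]
required = {prompts[0]: ["jurisdiction", "statute"]}
report = compare_models(prompts, old_stub, new_stub, required)
print(report)
```

Swap the stubs for functions that call each model, and keep the prompt set small and representative of what your product actually sends.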

If you’re a regular user: enjoy the upgrade. It’s a real one.

For solopreneurs and small teams wondering how to build AI into their daily work, I’d start with our best AI tools for solopreneurs guide — the GPT-5.5 Instant update changes some of those recommendations, and I’ll be updating that piece soon.

Frequently Asked Questions About GPT-5.5 Instant

What is GPT-5.5 Instant?

GPT-5.5 Instant is OpenAI’s new default model for ChatGPT, released on May 5, 2026. It replaces GPT-5.3 Instant for all users — Free, Plus, Pro, Go, Business, and Enterprise — and is available via the API as the chat-latest alias. It’s optimized for everyday speed with significantly improved factual accuracy and a new personalization system called Memory Sources.

How is GPT-5.5 Instant different from GPT-5.5?

The full GPT-5.5 model (released April 23, 2026) is an agentic model with a 1 million token context window, designed for complex multi-step tasks and coding pipelines. GPT-5.5 Instant is the fast, everyday version optimized for low latency, with a 128K context window in ChatGPT. They share some underlying capabilities but serve very different use cases.

Is GPT-5.5 Instant available for free users?

Yes. GPT-5.5 Instant is rolling out to all ChatGPT users including the free tier. However, the advanced personalization features — drawing from past chats, files, and connected Gmail — are initially limited to Plus and Pro subscribers on the web, with wider availability expected over the coming weeks.

What is the Memory Sources feature in GPT-5.5 Instant?

Memory Sources is a transparency feature that shows you which past chats, saved memories, or connected files shaped a personalized response. You can click the Sources icon under any personalized answer to see what was referenced, then delete or correct any outdated entries. Memory Sources are private — they don’t appear when you share a chat with someone else.

How much does GPT-5.5 Instant cost via the API?

GPT-5.5 Instant is accessible via the chat-latest API alias at $5 per million input tokens and $30 per million output tokens. Cached input tokens are $0.50 per million — a 90% discount. The full GPT-5.5 model has the same pricing but a much larger context window (1M+ tokens vs 400K for the API Instant version).

Does GPT-5.5 Instant eliminate hallucinations?

No. OpenAI claims 52.5% fewer hallucinated claims on high-stakes prompts in medicine, law, and finance compared to GPT-5.3 Instant — and a 37.3% reduction in inaccurate claims on conversations users had previously flagged. But these are internal benchmarks. Independent testing shows real improvement, though not as dramatic across all domains. The model still makes errors.

Is GPT-5.5 Instant better than Claude Opus 4.7?

It depends on what you’re doing. For fast everyday tasks, token efficiency, and agentic coding pipelines, GPT-5.5 is competitive and often more cost-efficient. For long-form writing quality, paragraph-style prose, and complex reasoning with detailed explanations, Claude Opus 4.7 consistently outperforms. Tom’s Guide ran a 7-category comparison and Claude won all seven. The truth is task-dependent.

What happened to GPT-5.3 Instant?

GPT-5.3 Instant is no longer the default model but remains available to paid ChatGPT users through model configuration settings for three months before it’s permanently retired. API developers using the chat-latest alias are now automatically routed to GPT-5.5 Instant, but can manually specify GPT-5.3 Instant for the same three-month window.

How does GPT-5.5 Instant personalization work with Gmail?

For Plus and Pro users on the web, GPT-5.5 Instant can connect to your Gmail account via the sidebar (click Apps to connect). Once connected, the model can reference relevant emails to provide more contextually accurate responses — for example, drafting a follow-up based on an existing thread or summarizing a topic you’ve been discussing in email. The connection is opt-in, and memory sources will show when Gmail was used to shape a response.

What is the context window for GPT-5.5 Instant?

In the ChatGPT interface, GPT-5.5 Instant has a 128K token context window. Via the API as chat-latest, the context window is around 400K tokens. The full GPT-5.5 model (not the Instant version) has a 1 million+ token context window (922K input, 128K output) for developers running large-scale tasks.

Will GPT-5.5 Instant affect SEO and AI search traffic?

Yes, and the data is already showing shifts. Research from Writesonic found that GPT-5.5 Instant cites brand websites only 6% of the time, compared to 13.4% for GPT-5.3 Instant. Reddit has become the most-cited domain by a 3x margin. If you’re building content for AI citation traffic, this model change matters — your strategy needs to account for the new citation behavior. Our guide on GEO ranking techniques covers how to adapt.

Useful Resources and Official Links

- OpenAI Official Announcement — GPT-5.5 Instant

- OpenAI — Introducing GPT-5.5 (Full Model)

- ChatGPT Release Notes — Official Changelog

- Microsoft — GPT-5.5 Instant in Microsoft 365 Copilot

- GitHub Changelog — GPT-5.5 in GitHub Copilot

- OpenRouter — GPT-5.5 API Pricing and Availability

- TechCrunch — OpenAI Releases GPT-5.5 Instant

- The Decoder — GPT-5.5 Instant Rollout Deep-Dive

- DataCamp — GPT-5.5 Instant Review with Benchmark Testing

- Writesonic — GPT-5.5 vs GPT-5.3 Citation Behavior Study

- MindStudio — GPT-5.5 vs Claude Opus 4.7 Coding Comparison

- DataCamp — Claude Opus 4.7 vs GPT-5.5 Detailed Comparison

- Nerd Level Tech — GPT-5.5 Instant Technical Breakdown

- SiliconANGLE — GPT-5.5 Instant Review

- 9to5Mac — GPT-5.5 Instant: Smarter, Fewer Emojis

- Android Headlines — Real-Time Self-Correction Explained

- Rolling Out — GPT-5.5 Instant Hallucination Reduction Analysis

- FindSkill.ai — GPT-5.5 Instant vs Claude Sonnet 4.6 Routing Guide

- LLM Practical Experience Hub — GPT-5.5 API Pricing Calculator

- Progressive Robot — GPT-5.5 Instant: 9 Upgrades Explained

More From PrimeAICenter

- 🔍 Claude Opus vs GPT vs Gemini: The 2026 Frontier Model Showdown

- 🤖 Claude Opus 4.7 Review: Is Anthropic’s Flagship Still Worth It?

- 💬 ChatGPT Images 2.0 Review: The AI Image Generator That Changes Everything

- 🏢 Microsoft Agent 365 Review: GPT-5.5 Inside Your Office Suite

- 🔗 MCP vs A2A Protocol: What Developers Need to Know in 2026

- 🌍 GEO Ranking Techniques: How to Get Cited by ChatGPT and Claude

- 💻 Best AI Coding Assistants in 2026: Ranked and Tested

- 🚀 Best AI Tools for Solopreneurs: Updated for GPT-5.5 Era

- 📱 OpenAI Smartphone: Release Date, Specs, and Everything We Know

- ⚙️ Top AI Workflow Automation Tools: Build Around GPT-5.5 Instant