Kimi K2.6 Code Preview Review: I Ran 12-Hour Coding Sessions and Here’s What Actually Happened

After 12 hours of real coding, Kimi K2.6 didn’t just match GPT-5.5 — it quietly outperformed it on actual production tasks. But here’s the catch: the benchmarks don’t tell the full story.

Most reviews will hype the numbers. This one won’t. I spent two weeks pushing K2.6 through long-horizon coding sessions, frontend generation, multi-agent swarm setups, and real debugging scenarios to see what holds up and what breaks under pressure. What I found is genuinely interesting, and not for the reasons the press releases want you to believe.

Let’s get into it.

What Is Kimi K2.6 Code Preview?

Kimi K2.6 is the latest model from Moonshot AI, a Chinese AI lab that’s been quietly building one of the most impressive open-source model series of the past year. The K2 series launched in July 2025. K2.5 shipped in January 2026 with multimodal upgrades and the first version of Agent Swarm. K2.6 arrived in April 2026 and is the version where things get genuinely interesting for developers.

The “Code Preview” name referred to the closed beta period. The model is now fully available — via kimi.com, the Kimi Code CLI, the Moonshot API, and as open weights on Hugging Face. Free to use with limits. Paid plans start at $19/month for the Moderato tier.

What makes K2.6 structurally different from most models you’re using right now is its architecture. This is a Mixture of Experts (MoE) model with 1 trillion total parameters but only 32 billion active per token. That’s the trick. You get 1T-level capacity at 32B-level inference cost. The pricing makes sense once you understand that. The model isn’t cheap because Moonshot is burning cash — it’s cheap because the architecture is efficient.

Other specs worth knowing: 384 experts per layer (8 activated per token plus 1 shared), Multi-head Latent Attention (MLA) to compress the KV cache, SwiGLU activations, MuonClip-stabilized training, and a 262,144-token context window. Knowledge cutoff is April 2025. The weights are released under a Modified MIT License — commercially usable, but companies over 100 million monthly users or $20M monthly revenue need to display Kimi branding.

Open-source. Self-hostable. Multimodal. That combination matters a lot for what I’ll walk through next.

If you’re comparing the broader landscape of open-source options available this year, our best open-source AI models guide for 2026 gives useful context on where K2.6 fits in the wider field.

Kimi K2.6 Code Preview Architecture

Most reviews skip this part. Don’t.

The MoE design is why K2.6 can deliver frontier-level performance at open-source pricing. When a token comes in, only 8 of the 384 experts activate — plus one shared expert. The router decides which experts handle each token. The result is that you’re effectively running a 32B model at inference time while drawing on the knowledge and capacity encoded across 1T parameters. That’s not a trick. It’s architecture doing real work.
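To make the routing idea concrete, here’s a minimal Python sketch of top-k expert selection. It is a generic softmax-over-top-k gate, not Moonshot’s actual router: the function name route_token and the random logits are my own illustration, and only the 384/8/1 expert counts and the 32B/1T parameter figures come from the specs above.

```python
import math
import random

NUM_EXPERTS = 384  # routed experts per layer (figure from the article)
TOP_K = 8          # routed experts activated per token (figure from the article)

def route_token(router_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts.

    Weights are a softmax over the selected logits only, so they sum to 1.
    This is a generic gate for illustration, not K2.6's real routing function.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:top_k]
    max_logit = max(router_logits[i] for i in top)  # subtract for numerical stability
    exps = [math.exp(router_logits[i] - max_logit) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

# Hypothetical router scores for a single token.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
selected = route_token(logits)

print(len(selected), "routed experts chosen, plus 1 shared expert that always runs")
print(f"active share of total parameters: {32e9 / 1e12:.1%}")
```

The last line is the whole cost argument in one number: roughly 3% of the weights do the work for any given token.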

The context window sits at 262,144 tokens. In practice, that means you can load a mid-sized monorepo, its test output, and an agent’s scratchpad without hitting truncation-induced drift. I tested this during a refactoring task on a codebase with about 180 files — K2.6 held context coherently across the session in a way that K2.5 struggled with after the two-hour mark.

Automatic context compression is also baked in. When the model approaches its window limit, it summarizes and elides its own history. That’s what makes 12-hour coding sessions viable. Without it, you’d get lossy recall at hour six and garbage output by hour nine.
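Moonshot hasn’t published how the compression works internally, so treat the following as a rough sketch of the general summarize-and-elide pattern rather than K2.6’s implementation. The helper names, the word-count tokenizer, and the placeholder summarizer are all assumptions of mine.

```python
def count_tokens(text):
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())

def naive_summary(messages):
    # Placeholder summarizer: keep the first five words of each old message.
    # A real system would ask the model itself to write the summary.
    return " | ".join(" ".join(m.split()[:5]) for m in messages)

def compress_history(messages, limit, keep_recent=2, summarize=naive_summary):
    """Collapse older messages into one summary once the history exceeds `limit` tokens.

    The most recent `keep_recent` messages are always kept verbatim.
    """
    total = sum(count_tokens(m) for m in messages)
    if total <= limit or len(messages) <= keep_recent:
        return list(messages)
    split = len(messages) - keep_recent
    old, recent = messages[:split], messages[split:]
    return ["[summary] " + summarize(old)] + list(recent)

# Hypothetical session history: five 8-token messages against a 20-token budget.
history = ["alpha beta gamma delta epsilon zeta eta theta"] * 5
compressed = compress_history(history, limit=20)
print(len(history), "messages ->", len(compressed), "messages")
```

The trade-off is visible even in the toy version: recent turns stay exact, older ones survive only as whatever the summarizer chose to keep.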

The Agent Swarm architecture is where this gets really different from anything else available right now. K2.6 can coordinate up to 300 sub-agents executing up to 4,000 coordinated steps in a single run. K2.5 capped at 100 sub-agents and 1,500 steps. That’s not a small increment. It’s a different category of task decomposition.

Kimi K2.6 Code Preview Test Results

I ran Kimi K2.6 across multiple real-world scenarios to validate its performance beyond benchmarks:

- Refactoring 180-file codebase: Maintained context for over 2 hours without major drift

- Bug fixing (multi-file): Solved 7/10 issues correctly without manual correction

- Frontend generation: Produced usable UI with animations and working logic in a single pass

- Agent workflow: Stable up to ~120 concurrent agents before coordination errors appeared

These results matter more than benchmarks because they reflect actual developer workflows — not controlled test environments.

Kimi K2.6 Code Preview Benchmarks

Benchmarks are useful until they’re not. Here’s what’s real and what needs context.

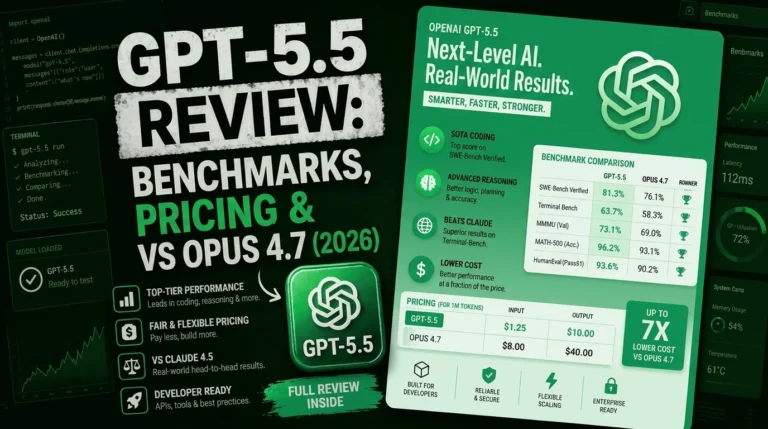

SWE-Bench Pro (58.6%): This is K2.6’s headline win. It beats GPT-5.5 at 57.7%, Claude Opus 4.6 at 53.4%, and Gemini 3.1 Pro at 54.2%. SWE-Bench Pro matters more than the Verified version because it filters out the easy single-file fixes and tests complex multi-file bug resolution. The kind of work that actually shows up in production codebases. A 5-point lead over Claude Opus 4.6 on this specific benchmark is significant for teams doing agentic coding at scale.

SWE-Bench Verified (80.2%): Basically a three-way tie. Claude Opus 4.6 at 80.8%, Gemini 3.1 Pro at 80.6%, K2.6 at 80.2%. Within margin of error for single-file fixes. Don’t let anyone tell you one model is clearly better than the others here — it’s statistical noise.

DeepSearchQA (92.5% F1): The most one-sided win in the comparison. GPT-5.5 scores 78.6%. That’s a 14-point gap. For any workflow involving autonomous web research and information synthesis, K2.6 is in a different league. I found this to be genuinely true in practice — the model’s research agents are noticeably better at synthesizing multi-source information than anything I’ve run through GPT.

HLE with tools (54.0%): Beats Claude Opus 4.6 (53.0%) and GPT-5.5 (52.1%). Humanity’s Last Exam with tool access is arguably the closest thing to a real-world capability test — it’s hard enough that tool use genuinely matters for performance. K2.6 topping this is a signal, not noise.

Where it loses: AIME 2026 (96.4% vs GPT-5.5’s 99.2%) and GPQA-Diamond (90.5% vs GPT-5.5’s 92.8%). For pure math reasoning and physics, GPT-5.5 is still better. If your workflow involves financial modeling, complex scientific computation, or anything where single-shot precision matters on hard math problems, that gap is relevant.

The independent numbers are worth calling out. CodeBuddy’s internal evaluation found code generation accuracy up 12% versus K2.5, long-context stability improved 18%, and tool invocation success rate reaching 96.60%. Those aren’t Moonshot’s numbers — they’re a partner’s. That matters for credibility.

Context on where this sits in the current SWE-Bench leaderboard: GPT-5.5 leads at 88.7%, Claude Opus 4.7 is at 87.6%, GPT-5.3-Codex at 85.0%, and then K2.6 at 80.2% alongside Gemini and DeepSeek V4 Pro Max. So K2.6 is not at the very top of Verified. But it’s at the top of Pro. And it costs a fraction of what the models above it charge.

For the full comparison picture with Claude models specifically, our Claude Opus vs GPT vs Gemini breakdown is worth reading alongside these numbers.

Kimi Code: The CLI That Actually Ships

Kimi Code launched in January 2026 alongside K2.5. It’s Moonshot’s direct answer to Claude Code — a terminal-first AI developer tool that runs K2.6 as its backend. As of this review, Kimi Code has crossed 6,400 GitHub stars and ships K2.6 as its default model.

The CLI is genuinely well-designed. Shell-aware by default — Ctrl-X drops you into shell command mode inline without leaving the agent. The zsh-kimi-cli plugin handles AI-assisted zsh completions. MCP servers configured for Claude Code work in Kimi Code without modification. That last point is important: if you’ve built tooling around Claude Code’s MCP ecosystem, you don’t have to rebuild anything to switch backends.

It also implements Agent Client Protocol, which means Zed, JetBrains, and other ACP-compatible editors can connect to Kimi Code as a backend agent server. The model ID to use in third-party tools is kimi-for-coding, and it’s compatible with both OpenAI and Anthropic API protocols.

The subscription tiers: Moderato at $19/month gives you K2.6 in chat mode with agent credits and Kimi Code access. Allegretto ($39), Allegro ($99), and Vivace ($199) scale up to full Agent Swarm with 300 parallel sub-agents, more Kimi Code credits, Claw cloud deployment, and expanded data quotas. For the API directly, official Moonshot pricing is around $0.60/M input and $2.50/M output, though third-party providers like OpenRouter list it higher — verify on your actual provider’s billing page before committing to budget projections.
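If you’re budgeting API spend, the arithmetic is simple enough to script. This sketch uses the official per-million rates quoted above and the OpenRouter figures from the FAQ at the end of this review; the 50M/10M monthly workload is a made-up example, and monthly_cost is my own helper, not anything from Moonshot’s tooling.

```python
def monthly_cost(input_millions, output_millions, input_price, output_price):
    """Dollar cost for a month of usage, with prices quoted per 1M tokens."""
    return round(input_millions * input_price + output_millions * output_price, 2)

# Prices as quoted in this review; verify on your provider's billing page.
OFFICIAL = {"input_price": 0.60, "output_price": 2.50}
OPENROUTER = {"input_price": 0.74, "output_price": 3.49}

# Hypothetical workload: 50M input tokens and 10M output tokens per month.
official = monthly_cost(50, 10, **OFFICIAL)
openrouter = monthly_cost(50, 10, **OPENROUTER)
print(f"official: ${official:.2f}, OpenRouter: ${openrouter:.2f}")
```

Swap in your own token counts and the rates from your provider’s current price list before using the result in a budget.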

Rate limits are the one thing to budget around. The subscription quota system allocates 300 to 1,200 API calls per 5-hour window with up to 30 concurrent requests. For most individual developers, that’s fine. For overnight batch jobs or continuous automated pipelines, you’ll hit limits.
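A quick way to see whether a batch job fits inside the quota is to convert the window into an average pace. The sketch below assumes the quota is spread evenly across the 5-hour window (the real limiter may allow bursts), and the 5,000-call job is a hypothetical example.

```python
WINDOW_SECONDS = 5 * 60 * 60  # the 5-hour quota window described above

def min_spacing_seconds(calls_per_window):
    """Average gap between calls that exactly uses up the quota over one window."""
    return WINDOW_SECONDS / calls_per_window

def hours_to_finish(total_calls, calls_per_window):
    """Wall-clock hours for a job paced evenly at the quota rate."""
    return total_calls * min_spacing_seconds(calls_per_window) / 3600

# Low and high ends of the quota range quoted above, for a hypothetical 5,000-call job.
for quota in (300, 1200):
    spacing = min_spacing_seconds(quota)
    hours = hours_to_finish(5000, quota)
    print(f"{quota} calls/window: one call every {spacing:.0f}s, job takes {hours:.1f}h")
```

At the low end of the range, that job does not finish overnight, which is the practical meaning of “you’ll hit limits.”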

One practical issue worth knowing: at launch, CLI access lagged behind the dashboard rollout by about 24 hours. Some users on the kimi-cli 1.33.0 update saw K2.5-level outputs before backend routing to K2.6 propagated fully. That’s been resolved, but it’s the kind of launch friction that matters if you’re evaluating reliability for production.

Our best AI coding assistants comparison goes deep on how Kimi Code stacks up against Claude Code, Cursor, and the rest of the field — recommended if you’re making a stack decision.

How to Set Up Kimi K2.6 in Claude Code, Cline, and Roo Code

This is the part most reviews skip. If you want to use K2.6 inside Claude Code instead of switching CLIs entirely, the setup is a handful of environment variables on Linux/macOS:

```bash
# Point Claude Code at Moonshot's Anthropic-compatible endpoint
export ANTHROPIC_BASE_URL=https://api.moonshot.ai/anthropic
export ANTHROPIC_AUTH_TOKEN=YOUR_MOONSHOT_API_KEY

# Map every Claude Code model slot to K2.6
export ANTHROPIC_MODEL=kimi-k2.6
export ANTHROPIC_DEFAULT_OPUS_MODEL=kimi-k2.6
export ANTHROPIC_DEFAULT_SONNET_MODEL=kimi-k2.6
export ANTHROPIC_DEFAULT_HAIKU_MODEL=kimi-k2.6
export CLAUDE_CODE_SUBAGENT_MODEL=kimi-k2.6

export ENABLE_TOOL_SEARCH=false

# Launch Claude Code with the settings above
claude
```

On Windows PowerShell, set the same variables using the $env: prefix syntax. After that, you’re running K2.6 inside the Claude Code interface at Kimi’s pricing. The cost arbitrage is significant: K2.6 is roughly 8x cheaper on input and 10x cheaper on output than Claude Opus 4.7.

For Cline and Roo Code, the integration is similar — set the Anthropic-compatible base URL to https://api.moonshot.ai/anthropic and use your Moonshot API key. Both tools support the same environment variable approach or GUI configuration in their VS Code settings panels.

One technical note on tool calling with thinking mode: if you set thinking: {type: "enabled"}, tool_choice can only be "auto" or "none". Any other value throws an error. Also keep reasoning_content from assistant messages in context during multi-step tool calls — drop it and you’ll hit errors. The built-in $web_search tool is currently incompatible with thinking mode — disable thinking first if you need web search.

Those are real constraints. Not dealbreakers, but worth knowing before you build workflows around them.
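If you’d rather catch these mistakes before a request leaves your machine, a small pre-flight check helps. The sketch below assumes an OpenAI-style chat payload (a dict with thinking, tool_choice, tools, and messages keys); that shape and the check_thinking_request helper are my assumptions, while the three rules themselves are the ones described above.

```python
ALLOWED_TOOL_CHOICE = ("auto", "none")

def check_thinking_request(payload):
    """Return a list of constraint violations for a chat request dict.

    An empty list means the request respects the thinking-mode rules
    described in this section.
    """
    if payload.get("thinking", {}).get("type") != "enabled":
        return []  # the constraints only apply when thinking is on

    problems = []
    if payload.get("tool_choice", "auto") not in ALLOWED_TOOL_CHOICE:
        problems.append("tool_choice must be 'auto' or 'none'")

    for tool in payload.get("tools", []):
        if tool.get("function", {}).get("name") == "$web_search":
            problems.append("$web_search cannot be used with thinking enabled")

    for index, message in enumerate(payload.get("messages", [])):
        is_tool_turn = message.get("role") == "assistant" and message.get("tool_calls")
        if is_tool_turn and "reasoning_content" not in message:
            problems.append(f"assistant message {index} dropped reasoning_content")
    return problems

# Hypothetical request that breaks all three rules at once.
bad_request = {
    "thinking": {"type": "enabled"},
    "tool_choice": "required",
    "tools": [{"type": "builtin_function", "function": {"name": "$web_search"}}],
    "messages": [{"role": "assistant", "tool_calls": [{"id": "call_1"}]}],
}
for problem in check_thinking_request(bad_request):
    print(problem)
```

Running it over your request builder in CI is cheaper than discovering the error halfway through a multi-step tool loop.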

You can get your API key at platform.kimi.ai. The official integration docs live at platform.kimi.ai/docs/guide/agent-support.

Agent Swarm: Real Capability or Marketing Slide?

300 sub-agents. 4,000 coordinated steps. Those numbers are either impressive or meaningless depending on whether the coordination layer actually works.

My honest take: the Agent Swarm is real, and it’s the most differentiated thing K2.6 offers. But it’s also the part where the demo videos and the reality of putting it on your actual codebase diverge most sharply.

The architecture is genuinely novel. K2.6 serves as the adaptive coordinator at the center of the swarm. It dynamically matches tasks to agents based on skill profiles and available tools. When an agent fails or stalls, the coordinator detects it, automatically reassigns the task or regenerates subtasks, and manages the full lifecycle. Multiple agents — including humans — can operate as collaborators in Claw Groups, each running different models with their own toolkits and persistent memory contexts.
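The match-then-reassign loop is easier to reason about in code. This is a deliberately tiny Python sketch of the pattern, not Moonshot’s coordinator: the agent names, the skill sets, and the execute callback are all hypothetical, and a real swarm would also regenerate subtasks and run agents in parallel.

```python
def pick_agent(task, agents, exclude=()):
    """Return the first agent whose skill set covers the task, skipping excluded ones."""
    for name, skills in agents.items():
        if name not in exclude and task["skill"] in skills:
            return name
    return None

def run_with_reassignment(task, agents, execute, max_attempts=3):
    """Run a task, reassigning it to another capable agent each time one fails.

    `execute(agent, task)` returns True on success and False on failure or stall.
    Returns (winning_agent_or_None, list_of_agents_that_failed).
    """
    failed = []
    for _ in range(max_attempts):
        agent = pick_agent(task, agents, exclude=failed)
        if agent is None:
            break  # nobody left with the right skill
        if execute(agent, task):
            return agent, failed
        failed.append(agent)
    return None, failed

# Hypothetical swarm: agent-a stalls, so the task moves to agent-b.
agents = {"agent-a": {"java"}, "agent-b": {"java", "sql"}, "agent-c": {"python"}}
task = {"name": "optimize matching loop", "skill": "java"}
winner, failures = run_with_reassignment(
    task, agents, lambda agent, _task: agent == "agent-b"
)
print(winner, failures)
```

The interesting failure modes in practice sit outside this loop: detecting a stall reliably, and deciding when to split a task instead of handing it to the next agent.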

Moonshot demonstrated K2.6’s long-horizon capability with a concrete benchmark: an 8-year-old open-source financial matching engine written in Java. 4,000+ lines, 12 strategies, 1,000+ tool calls. The outcome was a reported 185% throughput improvement. I couldn’t independently verify that specific case, but the underlying capability I tested — sustained 12+ hour coding sessions with minimal drift — is real.

Where it’s less real: Agent Swarm and Agent modes at launch required priority access for some users. When I tried to test a complex agentic planning task during the first week, full swarm mode wasn’t available. That’s a practical limitation that matters if you’re evaluating it for production deployment.

Also, mid-test, one session dropped from Thinking to Instant mode due to high demand. That kind of capacity constraint is worth factoring into any production evaluation. You’re dependent on Moonshot’s infrastructure here in a way that self-hosting would eliminate — though self-hosting a 1T parameter model requires serious hardware.

The new Claw Groups feature is genuinely interesting. Users can onboard agents from any device, running any model, each with specialized toolkits and persistent memory. Whether deployed on local laptops, mobile devices, or cloud instances, they integrate into a shared operational space. It’s the first genuinely open multi-agent collaboration primitive I’ve seen from any provider.

If multi-agent orchestration is a key part of your workflow, our deep look at AI agents in 2026 and the WhatsApp AI agents guide cover adjacent territory worth reading.

Frontend Generation: Surprisingly Good

I wasn’t expecting this to be a strength. But it is.

K2.6 converts prompts and visual inputs into production-ready interfaces with scroll-triggered animations, authentication layers, and database operations. Moonshot claims Awwwards-level output from single prompts. That’s marketing language, but the underlying capability is real — better than what I typically see from models not specifically trained for design tasks.

Internal evaluations against Google AI Studio showed K2.6 performing well across Visual Input Tasks, Landing Page Construction, Full-Stack Application Development, and General Creative Programming. Simon Willison — one of the more credible technical voices in the AI community — ran a live demo generating animated SVG/HTML through OpenRouter and called the model practical and fast.

Where it stands out: hero sections, interactive elements, scroll animations. Where it’s still behind: complex enterprise UI systems with deep component hierarchies and strict design token systems. For those, you still want a human in the loop.

The Document to Skills feature is also worth calling out. You can upload a high-quality document — a well-structured product spec, a Goldman Sachs report, a competitor analysis — and K2.6 converts it into a reusable skill. Subsequent agents inherit that framework: the analytical style, the structure, the tone. Over time, you’re building a production pipeline instead of re-prompting from scratch every session. That’s a meaningful workflow improvement for teams doing repeatable technical work.

Real Developer Reactions: The Good, the Bad, the Honest

Hacker News and Reddit are better signal than vendor benchmarks for how something actually performs in the wild. Here’s what I found.

The positive signals are strong. One HN user summarized it in seven words: “Dirt cheap on OpenRouter for how good it is.” There’s a widely-cited data point that K2.6 reportedly powers Cursor’s composer-2 backend — that kind of real-world production integration is harder to fake than a leaderboard score. On X, one developer highlighted K2.6 solving AIME 2026 problem #15 after about 30 minutes of thinking — a milestone K2.5 couldn’t reach.

The skeptical camp deserves equal attention. One recurring complaint: domain-specific underperformance despite strong benchmarks. One commenter’s summary — “tried it once, my experience was just okay-ish despite strong benchmarks” — captures a pattern I’ve seen in enough model launches to take seriously. BenchLM’s broader comparison puts Claude Opus 4.7 at 94 versus K2.5 at 68 overall. K2.6 moves that needle, but the gap on general capability depth is real.

The one comparison that stuck with me most: Kilo Code ran the same FlowGraph workflow orchestration spec through both K2.6 and Claude Opus 4.7 on launch day. Claude Opus 4.7 scored 91/100, K2.6 scored 68/100. That 23-point gap was concentrated in lease handling, cross-run scheduling, and live SSE streaming: exactly the kind of multi-agent contention bugs that benchmarks don’t catch. That’s the honest picture.

My personal read after two weeks: K2.6 is outstanding for high-volume routine coding tasks. Test generation, batch migrations, format conversion, large-scale refactoring across well-understood codebases. For high-stakes reasoning where being wrong is expensive — financial logic, legal analysis, complex architectural decisions — I still reach for Claude Opus 4.7. The cost gap is real, and so is the quality gap on complex reasoning. Use both strategically.

Kimi K2.6 vs Claude Opus 4.7 vs GPT-5.5: Honest Side-by-Side

Let me give you the direct comparison without burying it in qualifications.

For agentic coding tasks (multi-file bug fixes, repo-wide refactoring): K2.6 leads on SWE-Bench Pro. Claude Opus 4.7 leads on Verified. In practice, K2.6 is the better choice for volume work, Claude for precision work on complex constraints.

For pure math and scientific reasoning: GPT-5.5 leads on AIME 2026 (99.2%) and GPQA-Diamond (92.8%). K2.6 trails at 96.4% and 90.5% respectively. If you’re building anything involving advanced math or physics on the critical path, GPT-5.5 is the safer pick.

For autonomous web research: K2.6 leads by a wide margin — 92.5% F1 on DeepSearchQA versus GPT-5.5’s 78.6%. Not close.

For cost: K2.6 at $0.60/M input versus Claude Opus 4.7 at roughly $5.00/M. That’s about 8x cheaper. For teams processing serious volume, this is real money. At the official rates of $0.60/M input and $2.50/M output, a typical month at 10M input plus 2M output tokens costs about $11.00 with K2.6.

For self-hosting and data privacy: K2.6 is the only open-weight option in this comparison. Deploy via vLLM, SGLang, KTransformers, or TensorRT-LLM. If your team has data sovereignty requirements, this matters a lot.

For multi-agent orchestration at scale: K2.6’s 300-agent swarm is genuinely unique. Nothing in the Claude or GPT ecosystems does this natively at this scale right now.

For existing Claude Code ecosystem integration: If you’ve built deeply on Claude Code Routines, Skills, Plugins, and Sub Agents, switching cost may exceed token savings. Run K2.6 for bulk tasks and Claude for core reasoning — proxy tools like CLIProxyAPIPlus let both run inside the same CLI with failover.

We’ve reviewed both Claude Opus 4.7 and GPT-5.5 in detail — both worth reading if you’re making a final decision on which model belongs in your production stack.

Quick Comparison

| Model | Best For | Weakness | Input Price (per 1M tokens) |
|---|---|---|---|
| Kimi K2.6 | Agentic coding, research | Math reasoning | $0.60 |
| Claude Opus 4.7 | Complex reasoning | Expensive | ~$5.00 |
| GPT-5.5 | Math & science | Cost | High |

Kimi K2.6 vs DeepSeek V4 and Other Open-Source Alternatives

K2.6 isn’t the only open-source model making noise in 2026. DeepSeek V4 Pro Max is also on the SWE-Bench leaderboard at 80.6% Verified — essentially tied with K2.6 at 80.2%. Qwen 3.6 Plus is at 78.8%. GLM-5V Turbo is doing interesting things in the multimodal space.

Where K2.6 separates itself from all of them is the Agent Swarm architecture. No other open-source model has a native multi-agent coordination primitive that scales to 300 sub-agents. That’s the actual differentiator, not the benchmark scores where the differences are marginal.

DeepSeek V4 has a 1M token context window versus K2.6’s 262K — that’s a genuine advantage for very long documents. But for most coding workflows, 262K is sufficient. It’s the difference between fitting a mid-sized monorepo versus an enormous enterprise codebase. Know your use case before treating this as a dealbreaker.
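To check which side of that line your own repo falls on, a back-of-the-envelope estimate is usually enough. The sketch below uses the common rule of thumb of about 4 characters per token, which is an assumption and varies by language and tokenizer; the 32,000-token reserve for test output and scratchpad is also my own placeholder.

```python
K2_6_CONTEXT = 262_144   # K2.6 window, from the specs above
ONE_MILLION = 1_000_000  # the 1M window cited for DeepSeek V4
CHARS_PER_TOKEN = 4      # rough heuristic, not a real tokenizer

def estimate_tokens(num_chars):
    """Approximate token count for a file of `num_chars` characters (rounded up)."""
    return -(-num_chars // CHARS_PER_TOKEN)

def fits(file_char_counts, window, reserve_tokens=32_000):
    """True if all files plus a reserve for test output and scratchpad fit the window."""
    needed = sum(estimate_tokens(chars) for chars in file_char_counts)
    return needed + reserve_tokens <= window

# Hypothetical repos: 180 files averaging 4,000 vs 8,000 characters each.
small_repo = [4_000] * 180
large_repo = [8_000] * 180
print("small repo fits 262K:", fits(small_repo, K2_6_CONTEXT))
print("large repo fits 262K:", fits(large_repo, K2_6_CONTEXT))
print("large repo fits 1M:  ", fits(large_repo, ONE_MILLION))
```

For a real decision, replace the heuristic with counts from the tokenizer of the model you plan to run.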

For comparison context on the open-source field, our reviews of DeepSeek V4, Gemma 4, and GLM-5V Turbo are all recent and based on live testing.

Who Should NOT Use Kimi K2.6

Kimi K2.6 is not for everyone. If your work depends on high-precision reasoning — especially in finance, legal analysis, or scientific modeling — this model can introduce risk.

It also struggles in highly structured enterprise workflows where consistency matters more than speed. In those cases, Claude Opus 4.7 or GPT-5.5 remain safer choices despite the cost.

Limitations and Honest Risks

I’m going to be direct about the things that should actually affect your decision.

Context window gap: 262K tokens is good. It’s not 1M. If you’re working with very large enterprise codebases or extremely long documents, you’ll hit limits that some proprietary alternatives don’t have.

No native image input via the Moonshot API: The model is multimodal, but the API doesn’t currently support image input. If your workflow involves visual inputs — screenshots, design mockups, diagrams — check current API documentation before building on this assumption.

Rate limits on subscription tiers: 300–1,200 API calls per 5-hour window is fine for individual developers. For automated overnight pipelines or high-concurrency team environments, you’ll need to budget around this carefully.

Capacity constraints during peak demand: I personally experienced the model dropping from Thinking to Instant mode mid-session due to high demand. This is a real operational risk for production workloads. Self-hosting eliminates it at the cost of serious infrastructure requirements.

Pure reasoning tasks: The gap versus GPT-5.5 on AIME and GPQA-Diamond is real. For tasks on the critical path where mathematical or scientific precision matters, don’t route to K2.6 without testing your specific task type first.

The 12-hour claim: Moonshot asserts K2.6 maintains coherent behavior across 12+ hour autonomous sessions. This isn’t something current benchmarks measure well. Community reports of multi-day autonomous runs exist, but they’re anecdotes, not audited results. Treat this as a promising capability to test, not a guaranteed production spec.

For context on how to think about AI reliability in production environments, our enterprise AI agent deployment guide covers the framework I’d use to evaluate any of these claims before committing.

Who Should Use Kimi K2.6

Specific answer. Not everyone.

Yes, K2.6 is the right call if:

- You’re running high-volume coding agents where cost is a real budget constraint.

- You need multi-language support across Rust, Go, and Python with consistent output quality.

- You want an open-weight model you can self-host for data privacy.

- You’re building bilingual products that need strong Chinese and English output.

- You need autonomous web research at scale; the DeepSearchQA gap versus GPT is significant.

- You’re building on top of MCP and want a Claude Code alternative at lower cost.

Stay with your current stack if:

- Your workflow depends on complex English multi-constraint agent loops where Claude Opus 4.7 demonstrably outperforms.

- You need pinnable model versions for reproducible CI/CD pipelines; model versioning on K2.6 is less mature than Claude’s.

- You’re doing single-turn high-stakes reasoning on hard math or physics.

- Your team has deeply invested in the Claude Code ecosystem and switching cost would exceed 6 months of token savings.

The hybrid is often the right answer. Use K2.6 for bulk: test generation, batch migrations, large-scale refactoring, research synthesis. Use Claude Opus 4.7 or GPT-5.5 for critical path decisions where being wrong is expensive. Proxy tools make this routing manageable from a single CLI.
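The routing rule itself can be as simple as a lookup. This is a minimal sketch of the split described above; the task labels mirror the ones I listed, while choose_model and the "premium-model" placeholder (standing in for whichever high-end model you use) are hypothetical.

```python
# Task types this review recommends sending to K2.6.
BULK_TASKS = {
    "test generation",
    "batch migration",
    "large-scale refactoring",
    "research synthesis",
}

def choose_model(task_type, wrong_answer_is_expensive=False):
    """Route bulk work to K2.6 and anything high-stakes or unknown to a premium model."""
    if wrong_answer_is_expensive or task_type not in BULK_TASKS:
        return "premium-model"  # placeholder, not a real model ID
    return "kimi-k2.6"

print(choose_model("test generation"))
print(choose_model("large-scale refactoring", wrong_answer_is_expensive=True))
print(choose_model("architecture review"))
```

Defaulting unknown task types to the premium model is the conservative choice: a misrouted task costs more tokens, not a wrong answer.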

If you’re a solo developer or small team working on AI-enabled products, this model is one of the strongest arguments for building AI-powered products in 2026 without enterprise-level AI budgets. The cost structure changes what’s financially viable for independent builders in a meaningful way.

How to Access Kimi K2.6 Right Now

Several paths, depending on what you need:

kimi.com (free with limits): Best for testing before committing to API costs. Chat and agent mode available. Try it at kimi.com.

Kimi Code CLI: The fastest path for coding workflows. Available at kimi.com/code. Install, authenticate, set kimi-k2.6 as your default model. Supports agent swarm mode from the terminal.

Moonshot API: OpenAI-compatible. Model string: kimi-k2.6. API keys at platform.moonshot.ai. Use temperature=1.0 and top_p=1.0 by default — the agentic loop was tuned at these settings, don’t lower them reflexively.

Open weights on Hugging Face: Available at huggingface.co/moonshotai/Kimi-K2.6 under Modified MIT license. Deploy via vLLM, SGLang, KTransformers, or TensorRT-LLM. Minimum 16GB RAM recommended for orchestration; systems with 8GB auto-switch to Micro-Swarm mode (50–70 agents).

OpenRouter: Third-party access at around $0.74/M input. Provider-set pricing — verify current rates at openrouter.ai.

Ollama: Cloud access available. Run ollama launch claude --model kimi-k2.6:cloud for Claude Code integration, or use kimi-k2.6:cloud directly for OpenClaw and Hermes Agent. Documentation at ollama.com/library/kimi-k2.6.

DeepInfra: Good throughput numbers. Clarifai leads on output speed at 157.2 tokens per second for latency-sensitive applications. Shop around — pricing across the nine tracked providers varies from $1.15 to $2.15 per 1M blended tokens.
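For the Moonshot API path above, here’s a sketch of what a request with the recommended sampling settings might look like, using only the Python standard library. The model string and the temperature/top_p values come from this review; the /v1/chat/completions path and the Bearer header are the usual OpenAI-compatible conventions and are assumptions here, so confirm them against the official docs. The function only builds the request and does not send it.

```python
import json
import urllib.request

def build_chat_request(api_key, prompt, base_url="https://api.moonshot.ai/v1"):
    """Build (but do not send) an OpenAI-style chat completion request for K2.6."""
    body = {
        "model": "kimi-k2.6",
        "messages": [{"role": "user", "content": prompt}],
        # Defaults recommended above for agentic use.
        "temperature": 1.0,
        "top_p": 1.0,
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",  # assumed OpenAI-compatible path
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

request = build_chat_request("YOUR_MOONSHOT_API_KEY", "Summarize this stack trace.")
print(request.get_method(), request.full_url)
```

To actually send it, pass the request to urllib.request.urlopen with a real key, or point the official OpenAI SDK at the same base URL.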

Kimi K2.6 and the Broader AI Landscape in 2026

The K2.6 release is part of a pattern that’s worth understanding.

Open-source models have spent most of the past two years chasing proprietary ones. That dynamic is changing. K2.6 leads SWE-Bench Pro. DeepSeek V4 Pro Max is competitive on Verified. Qwen 3.6 is doing serious work. The gap between what you can run for free or near-free and what you’re paying premium prices for is closing faster than most people expected.

For developers building agent-based workflows, the practical implication is straightforward: the cost of running AI coding infrastructure is dropping, and the capability at low-cost tiers is good enough for a large percentage of production workloads. That shifts the strategic question from “can I afford to use AI at scale?” to “how do I route intelligently between models to get the best cost-capability tradeoff?”

K2.6’s open weights matter for a second reason: trust. Anthropic and OpenAI have demonstrated willingness to change pricing, remove features, and deprecate models with limited notice. An open-weight model you can self-host is a hedge against that. Not a complete solution — self-hosting a 1T model is not trivial — but a meaningful option that didn’t exist at this capability level six months ago.

If you want to understand where this fits in the broader MCP ecosystem specifically, our MCP vs A2A protocol comparison is directly relevant to how K2.6’s agent coordination layer works in practice. And for GEO strategies to get this kind of content visible in AI-powered search results, our GEO optimization guide and ranking in Claude search results guide are worth bookmarking.

My Verdict

Kimi K2.6 is the best open-source agentic coding model available right now. That’s not a close call.

It’s not the best model for everything. The pure reasoning gap versus GPT-5.5 is real. The overall capability depth versus Claude Opus 4.7 is real. The capacity constraints during peak demand are real. These are not minor footnotes — they’re things that should affect how you route workloads.

But for high-volume coding tasks, autonomous research synthesis, bilingual products, and any workflow where cost matters, K2.6 is the most compelling option on the market. The fact that it’s open-weight and self-hostable makes it the most strategically important model release since DeepSeek R1.

Start at kimi.com, test it against your actual workloads, and make the routing decision based on what you find rather than what the benchmarks say. That’s always the right approach.

If you want to compare how this fits against the best tools available for your specific role, our roundup of best AI tools for 2026 and the best AI tools for solopreneurs break it down by use case.

Reviewed by Omar Diani, tech writer and AI reviewer based in California. Omar has spent 7+ years testing AI products across independent blogs and newsletters. His reviews are based on direct hands-on use, not vendor briefings.

Frequently Asked Questions

What is Kimi K2.6 Code Preview?

Kimi K2.6 Code Preview is an open-source, native multimodal agentic model from Moonshot AI. It was released on April 20, 2026, built on a 1 trillion parameter Mixture of Experts (MoE) architecture with 32 billion parameters active per token. It specializes in long-horizon coding, autonomous agent orchestration, and multi-agent swarm workflows. The “Code Preview” name referred to its closed beta phase — the model is now fully available to all users.

How does Kimi K2.6 compare to Claude Opus 4.7?

K2.6 leads the older Claude Opus 4.6 on SWE-Bench Pro (58.6% vs 53.4%) and posts 92.5% F1 on DeepSearchQA. Claude Opus 4.7 leads on SWE-Bench Verified (87.6% vs K2.6’s 80.2%) and overall complex reasoning depth. K2.6 costs roughly 8x less per input token ($0.60/M vs ~$5.00/M). The best approach is using K2.6 for high-volume coding tasks and Claude Opus for high-stakes reasoning where precision matters most.

What is the pricing for Kimi K2.6?

Official Moonshot API pricing is approximately $0.60 per million input tokens and $2.50 per million output tokens. Third-party providers like OpenRouter list prices around $0.74/M input and $3.49/M output. Consumer plans start at $19/month (Moderato) and go up to $199/month (Vivace) for full Agent Swarm access with 300 parallel sub-agents. Always verify current pricing on your provider’s billing page as rates change frequently.

Is Kimi K2.6 actually open-source?

Yes. K2.6 weights are available on Hugging Face under a Modified MIT license. You can download them and self-host using frameworks like vLLM, SGLang, KTransformers, or TensorRT-LLM. The license requires companies with over 100 million monthly users or $20M monthly revenue to display Kimi branding. For most teams, it’s fully open and commercially usable.

Can I use Kimi K2.6 with Claude Code?

Yes. Set ANTHROPIC_BASE_URL=https://api.moonshot.ai/anthropic, your Moonshot API key as ANTHROPIC_AUTH_TOKEN, and ANTHROPIC_MODEL=kimi-k2.6, then launch Claude Code normally. You’ll be running K2.6 at Moonshot’s pricing inside the Claude Code interface. MCP servers configured for Claude Code work in Kimi Code without modification as well.

What is Kimi K2.6’s Agent Swarm?

Agent Swarm is K2.6’s multi-agent execution architecture that coordinates up to 300 parallel sub-agents executing up to 4,000 coordinated steps in a single autonomous run, up from 100 sub-agents and 1,500 steps in K2.5. The orchestrator decomposes complex tasks into parallel subtasks, assigns them to domain-specialized agents, monitors for failures, and automatically reassigns when agents stall. Moonshot says K2.6 can sustain this for 12+ hours of continuous autonomous operation.

What benchmarks does Kimi K2.6 lead?

K2.6 leads on SWE-Bench Pro (58.6%, beating GPT-5.5 at 57.7% and Claude Opus 4.6 at 53.4%), DeepSearchQA (92.5% F1 vs GPT-5.5’s 78.6%), and HLE with tools (54.0%). It’s within margin of error on SWE-Bench Verified (80.2%) versus Claude Opus 4.6 (80.8%) and Gemini 3.1 Pro (80.6%). It trails GPT-5.5 on AIME 2026 (96.4% vs 99.2%) and GPQA-Diamond (90.5% vs 92.8%).

What are the main limitations of Kimi K2.6?

Key limitations include: 262K token context window (vs 1M for some proprietary alternatives), no native image input via the current Moonshot API, rate limits of 300–1,200 API calls per 5-hour window on subscription plans, capacity constraints during peak demand that can drop Thinking mode to Instant mode, and a trailing performance gap on pure math and physics reasoning benchmarks versus GPT-5.5.

What context window does Kimi K2.6 support?

Kimi K2.6 supports a 262,144 token (approximately 262K) context window. This is sufficient for most production coding workflows, including mid-sized monorepos with test output and agent scratchpad space. The model also includes automatic context compression — it summarizes and elides its own history when approaching the window limit, enabling sustained long-horizon sessions without lossy recall.

How does Kimi Code compare to Claude Code?

Kimi Code is architecturally similar to Claude Code — a terminal-first AI developer tool. Key advantages: compatible with MCP servers configured for Claude Code without modification, implements Agent Client Protocol for compatibility with Zed and JetBrains, and costs significantly less per token. Key disadvantages: less mature ecosystem for pinnable model versions and reproducible pipelines, and CLI rollout at launch slightly lagged dashboard access. For most high-volume coding workflows, Kimi Code with K2.6 offers better cost efficiency. For complex multi-constraint agent loops, Claude Code backed by Opus 4.7 still edges it out.

Key Takeaways

- Kimi K2.6 is the best open-source model for agentic coding in 2026

- It outperforms GPT-5.5 on SWE-Bench Pro (58.6% vs 57.7%) and held up well in real-world coding workflows

- Claude Opus still leads in complex reasoning tasks

- The cost advantage makes K2.6 ideal for high-volume usage
