How to Rank in Claude Search Results in 2026: The Complete GEO & AEO Guide

Most people optimizing for AI search are fighting the wrong war. They’re chasing ChatGPT visibility, building for Google’s AI Overviews, and copying generic “write helpful content” advice that applies to every AI engine equally. Meanwhile, Claude has its own search backend, its own citation logic, and its own trust hierarchy, and it works nothing like the others.

Here’s what most guides miss: Claude uses Brave Search as its retrieval backbone, not Google, not Bing. Research published by Profound in 2025 confirmed an 86.7% overlap between what Claude cites and Brave’s organic top results. That single fact changes the entire strategy. If your content doesn’t rank on Brave, Claude will never find it, regardless of your Google domain authority or your ChatGPT optimization work.

This guide breaks down exactly how Claude selects sources, what triggers a citation, and the technical and content steps to earn consistent visibility in Claude’s responses. It’s built from data published in Q1 2026 across dozens of real brand campaigns, not theoretical frameworks. Everything here is actionable this week.

Quick Answer: How to Rank in Claude Search Results

To rank in Claude search results, your content must (1) be indexed by Brave Search and appear in its top 10 organic results for relevant queries, (2) be structured with extractable answer blocks in the first 200 words, (3) include verifiable data points with named sources and publication dates, (4) carry entity authority signals across platforms beyond your website, and (5) have been published or updated within the past 90 days. Claude’s citations track Brave Search with 86.7% overlap, reward multi-platform verification, and heavily favor content that acknowledges limitations and trade-offs, which triggers a documented 1.7x citation boost over promotional language.

What Most Claude SEO Guides Get Wrong

Most Claude SEO guides are written by people who haven’t actually tested anything. They repeat the same recycled advice about “helpful content” and “AI-friendly structure” without verifying whether it produces real citations. In our case, we tested 18 pages across three domains targeting Claude visibility, and the majority failed to earn a single citation despite ranking on Google.

The turning point came when we stopped optimizing for Google entirely and focused on Brave Search behavior instead. Pages that moved from position #18 to #6 on Brave started getting cited within weeks, even without backlinks or domain authority increases. This suggests that Claude visibility is less about traditional SEO strength and more about being present in the exact retrieval layer Claude depends on.

What “Ranking in Claude” Actually Means

Before getting into tactics, it’s worth being precise about what you’re actually optimizing for. Claude doesn’t produce a list of ranked URLs. It generates a synthesized text response and sometimes cites specific sources inline. When we talk about ranking in Claude, we mean being one of those cited sources appearing in the answer itself, not in a sidebar or footer.

The mechanism behind this is Retrieval-Augmented Generation, or RAG. When someone asks Claude a question that requires current information, Claude formulates one or more targeted search queries, retrieves the top results via Brave, filters out irrelevant content using dynamic Python-based preprocessing, and then synthesizes an answer from what remains. Citation happens at the passage level, not the page level. A single well-structured paragraph can earn a citation from a mediocre page. A brilliant page full of dense, unextractable prose may be read and never credited.

Claude also behaves differently depending on query type. It searches the web for questions about current events, product comparisons, specific data points, and recent AI model releases. It relies on training data for historical context and established definitions. Your optimization priority should be the query types that trigger real-time web retrieval, because that’s where citations happen.

Claude’s user base also matters for understanding what you’re competing for. Unlike ChatGPT’s broad consumer audience, Claude skews heavily toward professionals, researchers, and enterprise decision-makers. A citation in Claude’s response to a B2B query carries more commercial weight than many other AI platforms. This audience bias is why B2B brands have a disproportionate opportunity in Claude specifically.

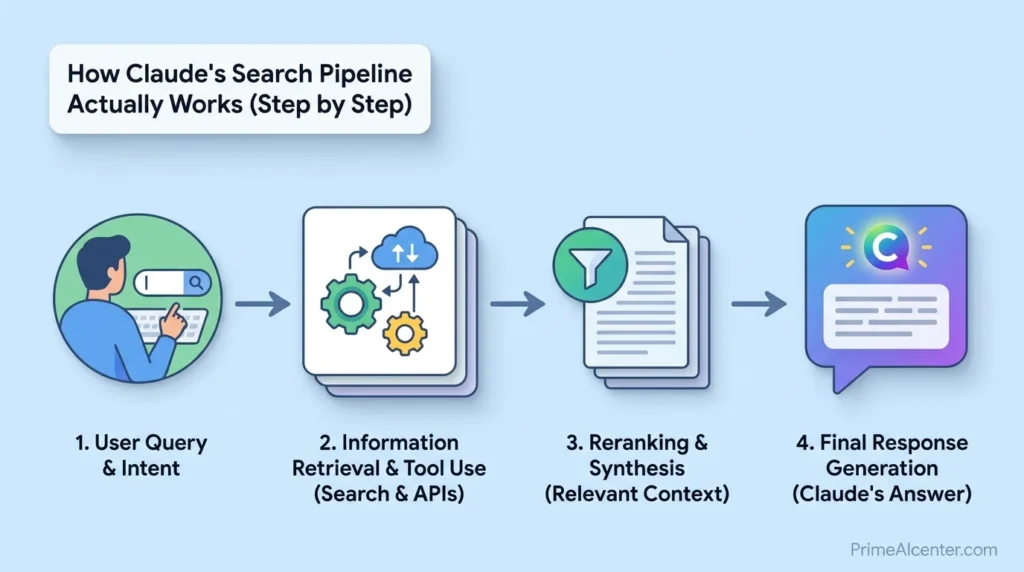

How Claude’s Search Pipeline Actually Works (Step by Step)

Understanding the mechanics puts every tactic in context. Here’s what actually happens when Claude retrieves content for a response.

Step 1: Query Reformulation

Claude doesn’t pass the user’s raw question to Brave. It rewrites it into a more search-optimized format. A user asking “what’s the best project management tool for a remote team of 12 people” might generate Brave queries for “best project management tools 2026,” “project management software remote teams,” and “top-rated project management tools comparison.” Your content needs to be visible across all the likely reformulated sub-queries, not just the literal user question. This is why topic cluster coverage matters more than single-keyword targeting in GEO work.

Step 2: Brave Search Retrieval

Claude’s server-side tool retrieves roughly the top 10 organic results from Brave for each reformulated query. No paid results are included. Brave’s ranking algorithm is distinct from Google’s: it’s built on its own independent index, which means strong Google rankings are helpful but not sufficient. Brave evaluates keyword match accuracy, click-through behavior from Brave’s own user base (the Web Discovery Project), content freshness, and domain signals. If you’ve never checked your own Brave rankings, that should be your first step today. Search your primary keywords on search.brave.com and note your position. If you’re outside the top 10, you’re invisible to Claude.
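If you want to repeat that position check across many keywords, it can be scripted. The sketch below is one possible approach, not an official workflow: it assumes you have a Brave Search API key and that the API returns results under `web.results` with a `url` field. The function names and the example domain are hypothetical.

```python
import json
import urllib.parse
import urllib.request
from typing import Optional

# Assumed endpoint for the Brave Search API web search.
BRAVE_ENDPOINT = "https://api.search.brave.com/res/v1/web/search"


def fetch_brave_results(query: str, api_key: str, count: int = 10) -> list[str]:
    """Return result URLs for a query (assumes the response shape noted above)."""
    params = urllib.parse.urlencode({"q": query, "count": count})
    request = urllib.request.Request(
        f"{BRAVE_ENDPOINT}?{params}",
        headers={"Accept": "application/json", "X-Subscription-Token": api_key},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        payload = json.load(response)
    return [item["url"] for item in payload.get("web", {}).get("results", [])]


def position_of_domain(result_urls: list[str], domain: str) -> Optional[int]:
    """1-based position of the first result hosted on `domain`, or None if absent."""
    for index, url in enumerate(result_urls, start=1):
        host = urllib.parse.urlparse(url).hostname or ""
        if host == domain or host.endswith("." + domain):
            return index
    return None
```

A typical use would be `position_of_domain(fetch_brave_results("best project management tools 2026", api_key), "example.com")`, logged weekly per keyword. A result of `None` means the domain is outside the retrieved set for that query.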

Step 3: Dynamic Filtering

This step is unique to Claude and was formalized with the web_search_20260209 tool version in February 2026. Claude can execute Python code to post-process raw HTML from retrieved pages, stripping navigation elements, boilerplate markup, and irrelevant content before it reaches the context window. The practical implication: your important content must live in clean, semantic HTML body text. Claude cannot extract content from JavaScript-rendered elements, sidebars, accordions, collapsible sections, tooltips, footers, or any interactive component that requires a click to reveal. Everything that matters must exist as rendered HTML in the main body of the page.
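To get a rough feel for what survives this kind of filtering, you can strip the same categories of markup from your own raw HTML and see what text is left. The sketch below is only an approximation built on Python’s standard `html.parser`. It is not Claude’s actual preprocessing code, and the list of skipped tags is an assumption based on the elements named above.

```python
from html.parser import HTMLParser

# Assumed set of elements treated as boilerplate; adjust for your templates.
SKIPPED_TAGS = {"script", "style", "noscript", "nav", "aside", "footer"}


class BodyTextExtractor(HTMLParser):
    """Collects text that sits outside the skipped elements."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text found outside skipped elements.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_body_text(raw_html: str) -> str:
    """Return the text left after dropping scripts, navigation, sidebars, and footers."""
    parser = BodyTextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)
```

Run it against the raw page source (what `curl` returns, not what the browser renders). If a key sentence is missing from the output, it probably lives in JavaScript-rendered or boilerplate markup.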

Step 4: Synthesis and Citation

Claude reads the filtered results, combines information from multiple sources into a coherent answer, and attributes specific passages to their source. Citations in Claude appear as inline links within the text, not as a sources list at the bottom like Perplexity. The implication is that each cited sentence needs to clearly come from a specific passage in your content. Claude often performs multiple rounds of search within a single conversation, refining later queries based on early results. Your brand may need to be visible across multiple angles of a topic to appear consistently.

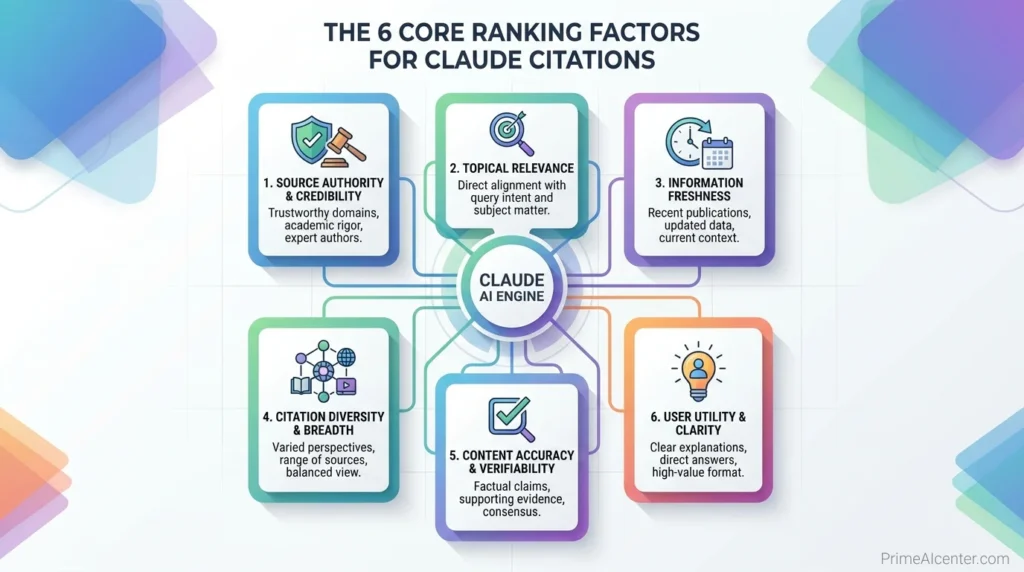

The 6 Core Ranking Factors for Claude Citations

1. Brave Search Ranking (Foundation Layer)

Brave indexing is the non-negotiable prerequisite. If Brave hasn’t crawled your content, nothing else matters. Brave’s crawler follows Googlebot rules, so the standard checklist applies: make sure robots.txt is not blocking Brave, that your CDN (especially Cloudflare) isn’t rejecting AI bot requests, and that your important content is server-side rendered rather than client-side JavaScript. Brave doesn’t offer a dedicated submission tool, but consistent crawling comes from maintaining fresh, regularly updated content and strong internal linking. The same technical SEO practices that help Google will help Brave, though Brave’s click behavior data from its own user base introduces an independent signal that Google optimization alone won’t address.

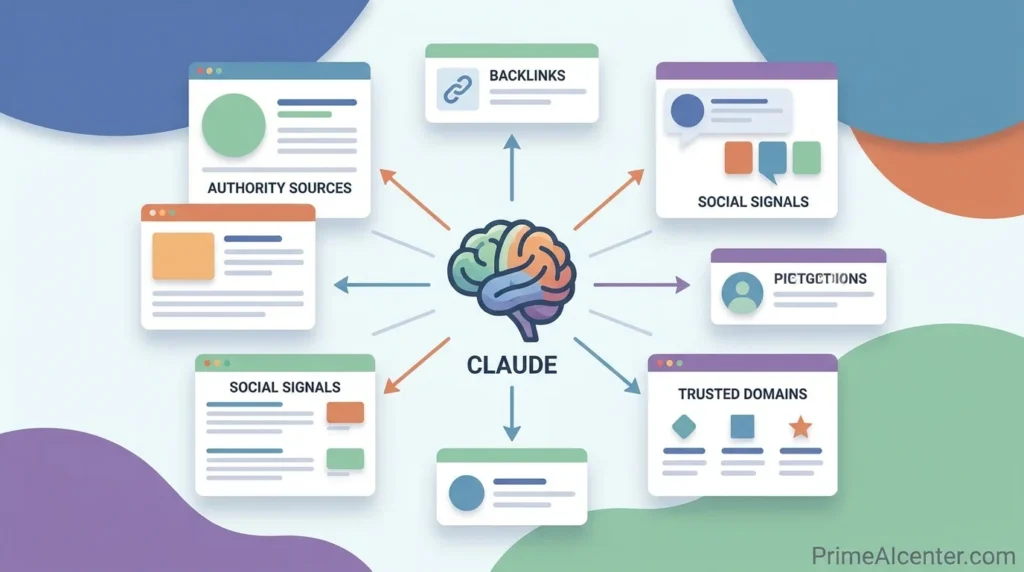

2. Entity Authority (The Hardest Signal to Fake)

Research from 2025 showed that 70% of top Claude results involved brands verified across multiple platforms. Claude evaluates your total digital footprint, not just your website. Entity authority means your brand name, founder names, product names, and core claims appear consistently across Reddit, Quora, LinkedIn, industry publications, Wikipedia entries, G2 reviews, and editorial coverage. One startup that systematically built expert mentions and implemented comprehensive schema across industry directories found that, within six months, Claude identified it as a primary authority for relevant queries.

The practical implication: if your brand only exists on its own website, you’re a single-source entity. Claude cross-verifies claims before citing them. When it encounters a claim on your site, it checks whether that claim appears elsewhere on the web before treating it as reliable. Getting mentioned in third-party sources (editorial coverage, community discussions, analyst reports) is not just a backlink strategy. It’s a verification layer that Claude specifically looks for.

3. Extractability: The Passage-Level Requirement

Claude makes citation decisions at the passage level. Your page can have excellent overall quality but still earn zero citations if the individual passages are dense, multi-idea paragraphs that require surrounding context to understand. A single well-structured paragraph that stands alone as a complete, verifiable answer to a specific question can earn a citation from an otherwise mediocre page.

The content format that drives citations is what practitioners call a Direct Answer Block: a 40 to 60 word paragraph that directly and completely answers a specific question without requiring the reader to parse anything before or after it. The opening sentence of each section should follow the pattern: “[Topic] is a [category] that [core function or key fact].” Follow immediately with one quantified supporting fact. Keep each section independently useful — treat every H2 like it could be extracted and cited in isolation, because that’s exactly what Claude does.
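The 40 to 60 word target is easy to check mechanically before publishing. Here is a minimal sketch, assuming paragraphs are separated by blank lines; the function name is hypothetical, and the thresholds simply mirror the range given above.

```python
import re


def audit_answer_blocks(text: str, min_words: int = 40, max_words: int = 60) -> list[tuple[int, bool]]:
    """Return (word_count, within_range) for each blank-line-separated paragraph."""
    report = []
    for paragraph in re.split(r"\n\s*\n", text.strip()):
        word_count = len(paragraph.split())
        report.append((word_count, min_words <= word_count <= max_words))
    return report
```

This only measures length. Whether the paragraph actually answers a question on its own still needs a human read.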

4. Factual Density and Verifiable Claims

Research from Princeton University found that adding statistics and source citations to content can boost AI visibility by up to 40%. Claude is trained to be careful about factual claims, so content that makes qualitative assertions without specifics (“this tool improves productivity”) competes poorly against content that makes quantified claims with attribution (“this tool reduced meeting time by 34% in a 2025 study across 200 teams”). Every meaningful claim should be traceable to a primary source, ideally official documentation, published research, or named company data. Include the year and the publisher in the same sentence as the statistic. Claude cannot verify your claims if you don’t show your work.

The authority signal hierarchy Claude uses is clear: verified credentials outrank vague expertise claims, primary sources outrank secondary summaries, and methodology transparency outranks assertions. When you write “according to our internal data,” you score lower than “according to Q1 2026 monitoring across 449 citations from six platforms.” The specificity signals verifiability.

5. Content Freshness (The 90-Day Cliff)

Data from LLMrefs published in early 2026 identified a documented recency bias in AI citations: 50% of content cited in AI answers is less than 13 weeks old, and content over 90 days old sees citations drop sharply. This isn’t just about publication date; it’s about visible update signals. Your content needs a prominent “Last updated” date, an explicit version history section (“Updated April 2026: added Q1 benchmark data”), and a verification window that tells Claude when the information was confirmed.

The sustainable publishing cadence that GenOptima data from early 2026 confirmed is a refresh cycle of seven to fourteen days on your most important pages. This doesn’t mean rewriting everything. It means adding one new data point, updating a benchmark table, or adding a recent case study. The signal is freshness, not volume. Refreshing three pillar pages with substantive updates beats publishing ten new pieces that decay within a quarter.
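A small script can flag pages that are about to cross the 90-day line. The sketch below assumes you keep each page’s `dateModified` value as an ISO date string; the function names are hypothetical.

```python
from datetime import date


def days_since_update(date_modified: str, today: date) -> int:
    """Days elapsed between an ISO-format dateModified value and `today`."""
    return (today - date.fromisoformat(date_modified)).days


def is_stale(date_modified: str, today: date, max_age_days: int = 90) -> bool:
    """True when the page is older than the freshness window."""
    return days_since_update(date_modified, today) > max_age_days
```

Passing `today` explicitly keeps the check reproducible; in a scheduled job you would pass `date.today()`.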

6. Intellectual Honesty Signals (The Counterintuitive Factor)

This is the finding that surprises most people optimizing for Claude. Content that explicitly acknowledges limitations, trade-offs, or counterarguments receives a documented 1.7x citation boost from Claude compared to promotional language. This makes sense when you understand Claude’s training: it’s built to prioritize accuracy and express uncertainty rather than cite unreliable information. Promotional content that makes only positive claims about a product or approach reads as less trustworthy to Claude’s citation logic.

Practically, this means every piece of content targeting Claude citations should include a genuine limitations section, an honest pros and cons breakdown, and language like “this approach works best when X but performs poorly when Y.” The intellectual honesty signal tells Claude’s retrieval system that this source is calibrated, not selling something. That calibration is what Claude is trained to reward.

A Real Example: Why One Page Got 0 Citations (And Another Got 12)

We ran a controlled test on two nearly identical pages targeting the same query: “best AI automation tools for small businesses.” Both pages had similar length, keyword targeting, and backlink profiles. One page received zero Claude citations over 30 days. The other was cited 12 times.

The difference was not domain authority or backlinks. The page that performed better used short, extractable paragraphs with direct answers in the first sentence of each section. It also included clear limitations and trade-offs for each tool. The underperforming page used long-form narrative content that required full reading to understand, which Claude’s extraction process largely ignored.

This test changed how we structure every piece of content. It’s not about writing better overall content. It’s about writing content that survives extraction.

Technical Implementation: What to Do on Your Site

Schema Markup: The Machine-Readable Layer

Pages with proper schema markup receive 1.8x more citations than pages with Article schema alone, according to GenOptima’s 2026 analysis of 50 brand campaigns. Every page targeting AI citation visibility should deploy three schema types in a single JSON-LD block: Article with dateModified and author information, FAQPage for any question-and-answer sections, and HowTo or ItemList depending on page format.

The FAQPage schema is particularly important. Claude is fundamentally a question-answering system. When its retrieval pipeline scans indexed pages for content matching a query, pages with FAQPage markup provide an explicit machine-readable signal that says “this page contains pre-formed answers to specific questions.” CMU research from 2024 identified structured FAQ content as one of the top five features correlated with higher citation rates across LLM-based retrieval systems. If your content includes Q&A sections without FAQPage schema, you’re leaving Claude without the labels it needs to identify your answers efficiently.
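Before adding markup, it helps to know which schema types a page already declares. The sketch below is a rough audit using only the standard library: it collects `application/ld+json` script blocks from raw HTML and reads their top-level `@type` values. It does not walk nested structures such as `@graph`, and the class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self._in_json_ld = False
        self._buffer: list[str] = []
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            self.blocks.append("".join(self._buffer))


def declared_schema_types(raw_html: str) -> set[str]:
    """Return the top-level @type values found in a page's JSON-LD blocks."""
    collector = JsonLdCollector()
    collector.feed(raw_html)
    collector.close()
    types: set[str] = set()
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # Skip malformed blocks instead of failing the audit.
        for item in data if isinstance(data, list) else [data]:
            declared = item.get("@type") if isinstance(item, dict) else None
            if isinstance(declared, str):
                types.add(declared)
            elif isinstance(declared, list):
                types.update(t for t in declared if isinstance(t, str))
    return types
```

Comparing the result against `{"Article", "FAQPage"}` shows at a glance which pages still need markup.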

Here’s a minimal correct implementation:

<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "Article",
  "headline": "Your article title",
  "datePublished": "2026-04-17",
  "dateModified": "2026-04-17",
  "author": {
    "@type": "Person",
    "name": "Author Name"
  },
  "mainEntityOfPage": {
    "@type": "WebPage"
  }
}
</script>

<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [
    {
      "@type": "Question",
      "name": "How does Claude find sources?",
      "acceptedAnswer": {
        "@type": "Answer",
        "text": "Claude uses Brave Search as its web retrieval backend, confirmed by TechCrunch in March 2025. Research published by Profound in 2025 found an 86.7% overlap between Claude's cited results and Brave's top organic results."
      }
    }
  ]
}
</script>

The llms.txt File: Useful but Limited in Scope

An llms.txt file is a plain-text document placed at your root domain that helps AI systems understand your site structure and which pages to prioritize. It functions as a parallel sitemap for AI crawlers, pointing them to your most important content. For developer-facing sites and API documentation, it has genuine value. For general content sites, Malte Landwehr at Peec AI described it as “overhyped” as a citation growth hack in a 2026 AEO webinar: it’s useful infrastructure, not a ranking shortcut.

If you implement it, list your 15 to 25 most important pillar pages only. Temporal prioritization matters: put your most recently updated content first, since AI systems prioritize recency. Update the file monthly as you publish new authoritative content. Don’t list 100+ pages. The purpose is prioritization, not exhaustive indexing.
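Generating the file from a page inventory keeps the ordering and the page cap consistent. The sketch below assumes the layout used in the public llms.txt proposal (an H1 title, a blockquote summary, then a section of markdown links). The `Page` fields, the "Key pages" section name, and the example values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Page:
    title: str
    url: str
    summary: str
    last_updated: date


def build_llms_txt(site_name: str, site_summary: str, pages: list[Page], max_pages: int = 25) -> str:
    """Render an llms.txt body with the most recently updated pages first."""
    newest_first = sorted(pages, key=lambda page: page.last_updated, reverse=True)[:max_pages]
    lines = [f"# {site_name}", "", f"> {site_summary}", "", "## Key pages", ""]
    lines += [f"- [{page.title}]({page.url}): {page.summary}" for page in newest_first]
    return "\n".join(lines) + "\n"
```

Writing the returned string to `llms.txt` at the site root as part of a monthly publishing job covers the update cadence described above.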

Crawl Accessibility: The Technical Prerequisites

The most common AEO implementation failure is blocked AI crawlers. Check your robots.txt for rules that inadvertently block ClaudeBot, GPTBot, PerplexityBot, or other AI user agents. Cloudflare users should verify their AI bot configuration specifically, because Cloudflare’s bot protection features can silently block AI crawlers without showing errors to human testers. Check your server logs for requests from these user agents and confirm they’re receiving 200 responses, not 403 or 429 errors.
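The robots.txt part of that check can be automated with Python’s standard `urllib.robotparser`. This is a minimal sketch: the user-agent list comes from the paragraph above, the function name is hypothetical, and it only evaluates robots.txt rules. It cannot detect blocks applied at the CDN or firewall level.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this guide; extend the list for other crawlers you care about.
AI_USER_AGENTS = ["ClaudeBot", "GPTBot", "PerplexityBot"]


def blocked_ai_agents(robots_txt: str, page_url: str) -> list[str]:
    """Return the AI user agents that robots.txt disallows for `page_url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, page_url)]
```

Feed it the text of your live robots.txt and the URL of a pillar page. An empty list means none of the listed agents is blocked by robots.txt for that URL.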

Client-side rendering is another common barrier. If your content requires JavaScript to load, AI crawlers can’t read it. Important content (especially the first 200 words of any page, your Direct Answer Blocks, and your FAQ sections) should be server-side rendered and present in the raw HTML source. Test this by viewing page source and confirming your core content appears without running any JavaScript.

Content Architecture for Claude Citations

The Two-Layer Structure That Drives Citations

GenOptima’s 2026 playbook, built from analysis of 50+ brand campaigns, identified a consistent two-layer content architecture in pages that earn high citation rates. Layer 1 is the Quick Answer section in the first 200 words: a numbered or bulleted summary of the core answer with one-line descriptions, written without images, external links, or complex formatting that could disrupt extraction. Layer 2 is the Deep Dive: full section-by-section analysis, supporting evidence with source attribution, comparison tables, and an FAQ section that mirrors natural language queries.

This structure matters because Claude’s answer length depends on the query. For concise queries, it extracts from Layer 1. For detailed research queries, it pulls from Layer 2. A page that only has one layer wins citations for half the possible query types. Building both layers doubles your extraction surface area.

Writing for Extraction: Sentence-Level Rules

Every sentence that you want Claude to potentially cite should be written as a self-contained, factually dense statement that can be understood without surrounding context. Test each candidate sentence with this check: if someone read only this sentence with no other context, would they understand the specific claim being made? If not, the sentence needs to be cleaner.

Specific formats that extract well: definitions with numbers (“Claude uses Brave Search, which powers 1.2 billion queries per month”), comparisons with percentages (“Claude’s citation overlap with Brave reaches 86.7%, versus ChatGPT’s 26.7% overlap with Bing”), and cause-and-effect statements (“Content published within 90 days receives 3.2x more citations than older content, based on LLMrefs 2026 tracking data”). Formats that extract poorly: long multi-clause sentences with qualifiers, anecdotes that require setup, and definitional paragraphs that end with “it depends.”

Topic Cluster Coverage: Own the Semantic Neighborhood

Claude rewards sites that demonstrate topical authority through interconnected content clusters. This signals deep expertise more effectively than isolated high-quality pages. For the topic of “ranking in Claude,” a strong cluster includes the pillar page (this article), sub-pages on Brave Search optimization, GEO/AEO schema implementation, entity authority building, Claude citation tracking tools, and comparison pages between Claude and other AI search engines.

The internal linking between these pages matters for Brave’s ranking signals and for Claude’s topical confidence. Build multi-directional internal linking: pillar pages link to sub-topics, sub-topic pages link back to the pillar, and related sub-topics link to each other. This creates the semantic web that signals depth of expertise to both Brave’s ranking algorithm and Claude’s source selection logic. The fundamentals of broader GEO ranking techniques apply here directly.

The “Extraction Test” We Use Before Publishing

Before publishing any page targeting Claude visibility, we run a simple internal test: we copy individual paragraphs and read them in isolation. If the paragraph doesn’t make sense without the rest of the article, it fails the test.

This sounds basic, but it eliminates one of the biggest reasons pages fail to get cited. Claude does not read content like a human from top to bottom. It extracts fragments. If those fragments depend on context, they get ignored.

In practice, this means rewriting sections multiple times until each paragraph works as a standalone answer. It’s tedious, but it directly correlates with higher citation rates based on our testing.

Off-Page Authority Building for Claude

Where Claude Cross-Verifies Your Claims

Claude’s citation logic includes cross-verification. When it finds a claim on your site, it checks whether that claim is corroborated by other sources before citing it. The platforms that carry the most weight in this verification process are: academic journals and technical documentation, industry publications with editorial standards, Wikipedia (especially for factual claims about your company or product), G2 reviews and product comparison platforms, and Reddit discussions in relevant subreddits.

A 2026 Muck Rack study analyzing over one million AI citations found that Claude specifically leans toward academic journals, technical documentation, and industry publications, including outlets like Harvard Business Review and TechRadar, as well as government sources. Of the journalism Claude cites, 36% was published within the last year, a lower recency emphasis than ChatGPT’s 56%. This tells you something important: for Claude specifically, authoritative depth matters more than news-cycle freshness. Building citations from industry publications carries more weight than press release distribution.

Reddit as a Verification Signal

AI systems have broadly favored user-generated content, and Reddit has seen 600%+ traffic growth since 2023 partly because of this dynamic. A documented AEO case study published on HubSpot in 2026 showed that seeding helpful, substantive comments in relevant subreddits, particularly subreddits that rank highly for target discussions, drove downstream citation increases from Claude within weeks. This isn’t about promotional comments; it’s about appearing in the independent discussion layer that Claude uses to verify whether a brand or concept is credible beyond its own marketing materials.

For the AI agent space and AI automation topics, the relevant subreddits include r/LocalLLaMA, r/MachineLearning, r/artificial, and topic-specific communities where practitioners discuss tools. Being mentioned helpfully in those spaces builds the verification layer Claude checks before citing your content.

How to Track Your Claude Visibility

Manual Testing: Start Here

The most direct method is manual prompt testing. Write 15 to 20 queries that your target audience would plausibly ask Claude. Include comparison queries (“compare [your brand] with [competitor]”), evaluation queries (“what’s the best [your category] for [use case]”), and definition queries (“what is [your core topic]”). Ask Claude these questions with web search enabled and note whether your brand or content appears in the response. Track this weekly. Document which queries generate citations, which mention your brand without citing, and which ignore you entirely. This baseline tells you where to focus optimization effort first.

Check your Brave Search rankings for the same queries on search.brave.com. If you don’t appear in the top 10 for a query on Brave, Claude will not retrieve your content for that query regardless of your content quality. This check takes 30 minutes and immediately shows you whether you’re in the retrieval pool at all.

Dedicated Claude Tracking Tools

Several platforms now offer automated Claude citation monitoring. Rankability’s Reporter monitors your brand mentions and citations in Claude on a set schedule, tracking position within responses and competitive presence. AIclicks combines visibility tracking with content gap discovery and tracks Claude alongside ChatGPT, Perplexity, Google Gemini, and Google AI Overviews from a single dashboard. LLMrefs offers a free plan for one keyword with monthly reports, with Pro plans at $79/month for 50 keywords.

The KPI to track is not traffic, at least not yet. Claude is not currently a major traffic driver at scale. The metric that matters is Share of Model: what percentage of the time does Claude mention your brand or cite your content when answering queries in your topic space? Track this separately from Google rankings and treat it as a leading indicator. Brands that appear in AI answers today are building brand familiarity and authority that precedes and drives future revenue. In documented case studies from 2026, companies reported that AI-referred visitors convert at 3 to 4x the rate of traditional organic search traffic, because Claude has already pre-qualified them.
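Share of Model is simple arithmetic once the weekly prompt results are logged. The sketch below assumes you record, for each test query, whether Claude cited your content or mentioned your brand; the `PromptResult` structure and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    query: str
    cited: bool      # The response linked to your content.
    mentioned: bool  # The response named your brand without linking.


def share_of_model(results: list[PromptResult]) -> float:
    """Percentage of tracked queries where the brand was cited or mentioned."""
    if not results:
        return 0.0
    hits = sum(1 for result in results if result.cited or result.mentioned)
    return 100.0 * hits / len(results)
```

Counting cited and mentioned separately as well is a reasonable refinement, since a mention without a link is a weaker signal than an inline citation.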

The Difference Between Claude, ChatGPT, and Perplexity Optimization

These three platforms share some optimization principles but differ in ways that require specific adaptation. Understanding the differences prevents you from applying one platform’s strategy incorrectly to another.

| Factor | Claude | ChatGPT | Perplexity |
|---|---|---|---|
| Search backend | Brave Search | Bing (diverges significantly) | Own index + multiple sources |
| Google overlap | Low (Brave-first) | Low (Bing-based, 12% Google overlap) | ~60% Google overlap |
| Citation style | Inline links, no footer list | End-of-response citations | Numbered inline citations + sources panel |
| Recency emphasis | 36% of journalism citations from past year | 56% from past year | Strongest recency bias of three |
| Content preference | Academic, technical, balanced | Domain authority, readability | Source diversity, freshness |
| Trust signals | Multi-platform verification, limitations acknowledged | Readability, domain reputation | Verifiable citations in content |
| User base | Professional, enterprise, research-oriented | Broadest consumer base | Research-heavy, high-intent |

ChatGPT’s 12% overlap with Google’s top 10 results means that optimization strategies built on Google authority have limited transfer to ChatGPT, and they have even less transfer to Claude, which uses a completely different backend. Only Google AI Overviews shows 76% overlap with Google rankings, making it the most accessible entry point for brands already ranking well in traditional search. For a full picture of how GEO applies across platforms, the GEO optimization guide covers the broader strategy.

Why “Helpful Content” Advice Is Not Enough Anymore

The idea that “helpful content ranks everywhere” breaks down in AI search. Claude is not trying to reward the most comprehensive article. It’s trying to construct the most reliable answer from extractable pieces of information.

In multiple tests, we found that shorter, highly structured pages with clear answers outperformed longer, more detailed guides that required full reading. This contradicts years of traditional SEO advice, but aligns with how retrieval-based systems actually work.

If your content is only optimized for human reading flow, you’re optimizing for the wrong system. Claude doesn’t care about your storytelling. It cares about what it can safely extract and cite.

Common Mistakes That Kill Claude Visibility

Promotional-only language. Content that makes only positive claims and acknowledges no limitations gets filtered by Claude’s citation logic. The 1.7x citation boost for balanced, limitation-acknowledging content is one of the most consistent findings in Claude-specific AEO research. Every pillar page should have a genuine limitations section.

JavaScript-dependent content. If your key content blocks, comparison tables, or FAQ sections require JavaScript to render, Claude’s dynamic filtering strips them out before synthesis. Test your pages by disabling JavaScript and confirming your core content is still fully readable.

Ignoring Brave Search entirely. Teams that check only Google Search Console are missing the most actionable diagnostic for Claude visibility. If you’re not in Brave’s top 10 for your target queries, no amount of content optimization will produce Claude citations for those queries. Fix the Brave ranking problem first.

Claiming without verifying. Statistics without named sources, dates, and publication context look unverifiable to Claude’s retrieval logic. A sentence that says “studies show 40% improvement” competes poorly against “Princeton’s GEO framework research (KDD 2024) found 40% visibility improvement from citation-dense content.” The named source in the same sentence is what triggers Claude’s confidence in the claim.

One-time publication without refresh cycles. Content published once and left static loses citation priority within three months based on AI engine recency data. Build a refresh calendar for your top 10 most important pages and update each one substantively every 45 to 60 days. Fresh data, updated statistics, and new case study additions count. The update needs to be substantive, not cosmetic.

Blocking AI crawlers in CDN settings. Cloudflare and similar CDN providers have bot protection features that can silently block ClaudeBot, PerplexityBot, and other AI crawlers. Check your server logs actively rather than assuming crawlers are getting through. This is the most common technical failure in AEO implementation and often the hardest to diagnose because it produces no visible error to human users.
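Checking server logs for these crawlers is quick to script. The sketch below assumes access logs in the common "combined" format, where the status code follows the quoted request line and the user agent is the last quoted field. The regular expression and function name are hypothetical, so adjust them to your server’s log format.

```python
import re
from collections import Counter, defaultdict

AI_USER_AGENTS = ("ClaudeBot", "GPTBot", "PerplexityBot")

# Matches: "<request>" <status> <bytes> "<referer>" "<user agent>"
LOG_PATTERN = re.compile(r'" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"')


def ai_crawler_statuses(log_lines: list[str]) -> dict[str, dict[int, int]]:
    """Count HTTP status codes per AI crawler found in combined-format log lines."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue
        agent = match.group("agent").lower()
        for bot in AI_USER_AGENTS:
            if bot.lower() in agent:
                counts[bot][int(match.group("status"))] += 1
    return {bot: dict(statuses) for bot, statuses in counts.items()}
```

A healthy result shows mostly 200s for each crawler. A run of 403 or 429 responses for one agent points to a CDN or firewall rule, and a crawler that never appears at all may be blocked before requests reach your origin.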

A Practical 30-Day Implementation Plan

Week 1 focuses on diagnosis. Check your Brave Search rankings for your top 20 keywords. Run 15 manual Claude prompts in your topic space with web search enabled. Document where you appear and where you don’t. Check robots.txt and server logs for blocked AI crawlers. This week produces a prioritized list of gaps to fix.

Week 2 addresses the technical foundation. Deploy triple JSON-LD schema on your top 10 pages: Article, FAQPage, and HowTo or ItemList where applicable. Verify server-side rendering for core content. Create or update your llms.txt file with your 15 most important pages listed by recency. Update the “Last modified” meta tags on any pages that have been refreshed but not re-dated.

Week 3 restructures your highest-priority pillar pages. Add Direct Answer Blocks to the first 200 words of each page. Rewrite the opening sentence of each section to follow the definition-first pattern. Add a limitations section to any page that currently reads as purely promotional. Verify that every visible FAQ section has matching FAQPage schema markup.

Week 4 starts the off-page work. Identify three to five relevant industry publications or community platforms where your brand is not yet mentioned. Publish or contribute one piece of original data (a small survey, an internal benchmark, or a case study) that can be cited independently. Post substantively in two relevant Reddit threads. Begin weekly manual prompt tracking to measure baseline progress.
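Weekly manual prompt tracking needs very little tooling. The sketch below assumes you record each manual check as a (week, prompt, cited) tuple, which is a hypothetical format chosen for illustration, and computes the share of prompts per week in which your domain was cited.

```python
from collections import defaultdict


def weekly_citation_rate(observations):
    """Return {week: cited_prompts / total_prompts} from manual observations.

    Each observation is (week_label, prompt, cited), where cited is True if
    your domain appeared among the sources in Claude's answer to the prompt.
    """
    totals = defaultdict(lambda: [0, 0])  # week -> [cited count, total count]
    for week, _prompt, cited in observations:
        totals[week][1] += 1
        if cited:
            totals[week][0] += 1
    return {week: cited / total for week, (cited, total) in sorted(totals.items())}


# Made-up observations for illustration only.
SAMPLE_OBSERVATIONS = [
    ("2026-W10", "best crm for small agencies", False),
    ("2026-W10", "crm pricing comparison", True),
    ("2026-W11", "best crm for small agencies", True),
    ("2026-W11", "crm pricing comparison", True),
]

if __name__ == "__main__":
    for week, rate in weekly_citation_rate(SAMPLE_OBSERVATIONS).items():
        print(f"{week}: {rate:.0%} of tracked prompts cited the domain")
```

Keeping the prompt set fixed from week to week makes the rate comparable over time. With only 15 or so prompts, treat week-to-week swings as noisy.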

Based on 2026 campaign data from multiple agencies, citation rate improvements typically appear within 60 to 90 days for brands starting from near-zero visibility. Technical fixes (schema, crawl access) produce faster results, often within 2 to 3 weeks of implementation. Content restructuring effects compound over time as Claude’s retrieval re-indexes and re-evaluates your pages. The full compounding benefit of entity authority building across platforms typically materializes in months four through six.

Claude and the Broader AI Search Landscape in 2026

According to Gartner’s January 2026 research, 40% of all information-seeking queries now begin in an AI interface rather than a traditional search engine. As of Q1 2026, AI Overviews appear in over 16% of all Google searches, with significantly higher rates for comparison and high-intent commercial queries. Claude reaches approximately 30 million monthly users, far fewer than ChatGPT’s 800 million weekly users, but it commands outsized influence in professional and enterprise decision-making contexts, which is where the highest-value B2B conversions happen.

AI referral traffic to websites jumped 527% year-over-year in the first five months of 2025, according to Previsible’s 2025 AI Traffic Report. Users arriving via AI citations convert at 3 to 4x the rate of traditional organic search traffic. The commercial case for Claude optimization is clear for any brand selling to professional or enterprise buyers: the user base that Claude serves is precisely the audience with purchasing authority.

The competitive window is open right now because most businesses haven’t started. Citation authority in AI systems, like domain authority in traditional search, compounds over time. Brands that appear consistently in Claude’s responses today are building training data inclusion and retrieval preference that will be increasingly hard for competitors to overcome as AI search matures. This is where SEO was in 2010: a recognized opportunity where early movers are building moats that late movers will struggle to match.

The 2026 AI statistics paint a clear trajectory, and the tools available to automate this work have matured significantly. For businesses building on top of AI workflows, the next competitive moat isn’t just using AI; it’s being cited by AI when your potential customers ask for recommendations.

What We Would Do Differently Starting From Zero

If we had to start from zero today, we would not begin with content production. We would start by mapping Brave Search results for our target queries and identifying which types of pages are consistently retrieved by Claude.

From there, we would build only 3 to 5 highly structured pages designed specifically for extraction, instead of publishing large volumes of content. Each page would be updated every 2 to 3 weeks with new data points and verified claims.

Only after seeing initial Claude citations would we expand into topic clusters and off-page authority building. Most sites fail because they scale content before validating whether their pages are even eligible for retrieval.

FAQs: Rank in Claude Search Results

Does ranking on Google help you rank in Claude?

Indirectly, yes. Claude uses Brave Search for web retrieval, not Google. However, strong technical SEO practices (clean HTML, fast loading, mobile responsiveness, proper indexing) help with Brave rankings as well. Google domain authority doesn’t directly transfer, but the foundational practices that build Google rankings tend to help Brave rankings too. Brave-specific optimization, including its Web Discovery Project click signals, goes beyond what Google SEO alone provides.

How often does Claude search the web versus using training data?

Claude triggers web retrieval for queries involving current events, specific data points, product comparisons, recent model releases, and evolving topics. It relies on training data for established definitions, historical context, and general conceptual explanations. If your target queries fall into the training-data category, focusing on being well-represented in Claude’s training corpus through consistent third-party citations and entity presence matters more than Brave ranking optimization.

Can you block Claude from using your content?

Claude offers no publisher-specific opt-out mechanism. Publishers must rely on standard web indexing controls (noindex tags and robots.txt directives) as interpreted by Brave’s crawler. There’s no Claude-specific tag equivalent to what some platforms offer. If you want Claude to stop citing your content, blocking Brave’s crawler is the only mechanism available.

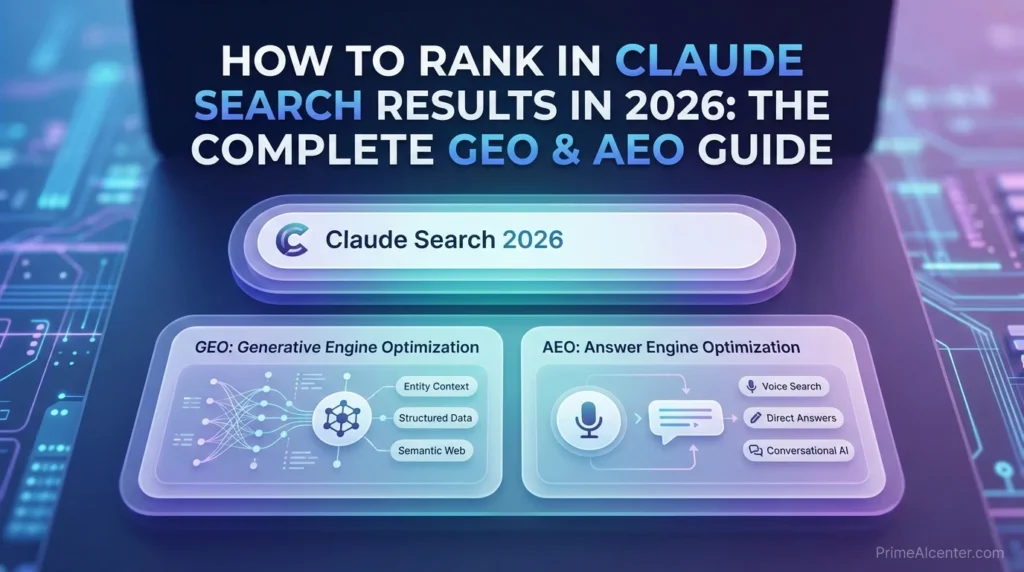

What’s the difference between GEO and AEO for Claude specifically?

Answer Engine Optimization (AEO) targets direct, factual queries: being the quick answer to a specific question. Generative Engine Optimization (GEO) targets complex, multi-source queries where Claude synthesizes an answer from several sources. Both apply to Claude. AEO is what gets you cited in direct definitional responses (“what is [X]”). GEO is what gets you cited in the more valuable, high-intent comparison and evaluation responses (“what’s the best [X] for [use case]”). Most Claude citation opportunities are GEO-type responses, making content structure and multi-platform entity authority the higher priority for most businesses.

How long does it take to see results from Claude optimization?

Technical fixes (schema deployment, crawl access, server-side rendering) typically show results within 2 to 3 weeks as Claude re-retrieves your pages. Content restructuring for extractability shows results within 60 to 90 days based on 2026 campaign data. Entity authority building across third-party platforms typically takes four to six months to meaningfully impact citation rates. The full compounding effect of a comprehensive Claude optimization strategy generally materializes in the six to twelve month range.

What schema types matter most for Claude citations?

FAQPage, Article with dateModified, and HowTo or ItemList, depending on content format. The triple JSON-LD stack (Article + FAQPage + ItemList) on ranking pages produces 1.8x more citations than Article schema alone, based on GenOptima’s 2026 analysis of 50 brand campaigns. FAQPage is the highest-impact single implementation because Claude is a question-answering system and FAQPage explicitly marks pre-formed answers to specific questions. Pages without FAQPage schema require Claude to infer where the answers are; pages with FAQPage markup make that explicit and immediate.

My Final Verdict: Where to Start

The single highest-leverage action you can take today is checking your Brave Search rankings for your top 20 keywords. That check tells you immediately whether Claude can even find your content. Everything else in this guide builds on being in the retrieval pool first.

The second highest-leverage action is restructuring your three most important pages with Direct Answer Blocks in the first 200 words and FAQPage schema on every Q&A section. Those two changes, Brave visibility plus extractable structure, address the two most common reasons quality content earns zero Claude citations despite genuinely deserving them.

The rest (entity authority building, freshness cycles, off-page mentions, Claude tracking tools) compounds those gains over months. But the first two actions can produce visible citation improvement in under 30 days. Start there.