MCP vs A2A Protocol 2026: The Complete Guide to AI Agent Interoperability — What They Are, How They Differ, and Which One You Actually Need

I spent three months last year watching two teams at the same company build two completely different things — both calling their work “AI agents.” One team wired Claude to their internal database using MCP servers. The other built a workflow where a Salesforce agent handed tasks off to an internal fulfillment agent using A2A. Both approaches worked. Neither team talked to the other. When they finally compared architectures, they discovered they’d built layers of the same stack without realizing it.

That scenario is playing out across hundreds of engineering organizations right now. MCP and A2A both promise to solve “AI agent interoperability,” both are open-source, both are governed by the Linux Foundation, and both were released within five months of each other. Of course people are confused, and the confusion is reasonable. But getting it wrong leads to real, costly architectural mistakes.

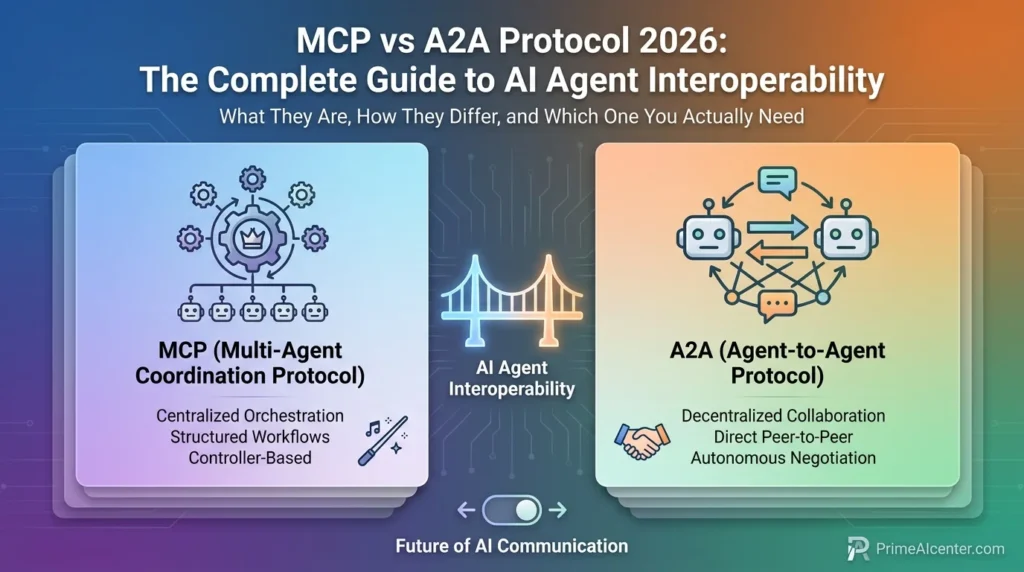

Here’s what I’ve come to understand: they are not competing standards. They solve fundamentally different problems at different layers of the same stack. MCP answers “how does my agent talk to external tools and data?” A2A answers “how do multiple agents talk to each other?” That’s it. The rest of this article unpacks what that distinction means in practice: the data, the architecture patterns, and the specific decision signals for when to use which.

MCP vs A2A: Simple Explanation (For Non-Engineers)

If you remove all the technical language, the difference is simple.

MCP lets an AI agent use tools. A2A lets AI agents work together.

If your system needs one smart assistant that can do things, you need MCP.

If your system needs multiple assistants that collaborate, you need A2A.

Most real-world systems end up using both: one for execution, one for coordination.

The Mistake Most Teams Make with MCP and A2A

Most teams don’t fail because they picked the wrong protocol. They fail because they pick both — too early — without understanding what layer they actually need.

The common pattern looks like this: a team reads about multi-agent systems, sees A2A, and assumes they need orchestration from day one. They start designing agent-to-agent communication before their first agent can reliably use tools.

The result is predictable: complexity explodes, progress slows, and the system never reaches production.

The reality is simpler. If your agent cannot access data, APIs, or internal systems efficiently, A2A won’t save you. Coordination without capability is just overhead.

The correct sequence in most cases is brutally simple: start with MCP, build real utility, and only introduce A2A when you have multiple agents that genuinely need to collaborate.

This is not a theoretical distinction. It’s the difference between shipping in weeks and getting stuck in architecture discussions for months.

The State of AI Agent Protocols in 2026

Before anything else, the numbers, because they change how the whole “MCP vs A2A” question should be framed.

MCP (Model Context Protocol), launched by Anthropic in November 2024, reached 97 million monthly SDK downloads as of March 2026. For context, the React npm package took roughly three years to reach 100 million monthly downloads; MCP came close to that mark in 16 months. There are now over 8,600 community-built MCP servers spanning databases, CRMs, cloud providers, developer tools, and e-commerce platforms: essentially the entire enterprise software landscape. Every major AI provider (Anthropic, OpenAI, Google DeepMind, Microsoft, and AWS) supports it natively.

A2A (Agent2Agent Protocol), launched by Google in April 2025 with 50+ enterprise partners, just crossed 150 supporting organizations as of April 9, 2026. That announcement came today — literally the same day I’m writing this. AWS, Cisco, Google, IBM, Microsoft, Salesforce, SAP, ServiceNow all on board. Version 1.0 shipped. Production deployments in supply chain, financial services, insurance, and IT operations. SDKs in five languages.

The adoption profiles are different, and that difference matters. MCP won the developer layer bottom-up — thousands of individual developers building MCP servers for their favorite tools, then enterprises following. A2A won the enterprise layer top-down — major platform vendors committing first, production deployments following. Different adoption paths, different current ecosystems, different readiness levels. Worth keeping in mind when choosing what to build on.

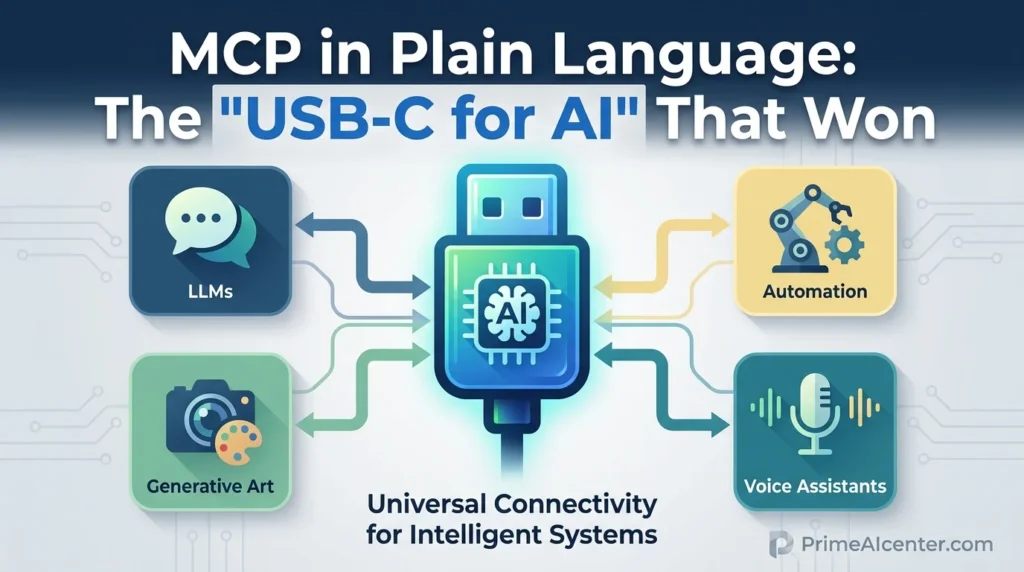

MCP in Plain Language: The “USB-C for AI” That Won

Before MCP, every AI integration was custom code. If you wanted Claude to read your GitHub issues and create Jira tickets, you wrote bespoke connectors for both services. Then if you switched to GPT-4 or Gemini, you rebuilt all of them. Three AI platforms × ten tools = thirty integrations to maintain. That’s the N×M problem MCP was built to eliminate.

The fix is simple in concept: standardize how AI agents connect to external tools. Build a tool once as an MCP server, and any MCP-compatible AI client (Claude, ChatGPT, Gemini, Cursor, GitHub Copilot, whatever comes next) can use it without additional integration work. The “USB-C for AI” metaphor has been beaten to death, but it’s accurate: you no longer need a different cable for every device combination.
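The integration arithmetic behind the N×M problem is easy to check. The sketch below is plain arithmetic, not part of any MCP SDK: it compares custom point-to-point connectors (one per platform-tool pair) with the MCP model, where each tool is wrapped once as a server and each AI platform implements the client once.

```python
def integrations_without_mcp(platforms: int, tools: int) -> int:
    """Every AI platform needs its own connector for every tool: N x M."""
    return platforms * tools


def integrations_with_mcp(platforms: int, tools: int) -> int:
    """Each tool ships one MCP server and each platform ships one MCP client: N + M."""
    return platforms + tools


if __name__ == "__main__":
    for platforms, tools in [(3, 10), (5, 50)]:
        print(
            f"{platforms} platforms x {tools} tools: "
            f"{integrations_without_mcp(platforms, tools)} custom connectors vs "
            f"{integrations_with_mcp(platforms, tools)} MCP components"
        )
```

For the three-platform, ten-tool example, that is 30 custom connectors versus 13 MCP components (ten servers plus three clients), and the gap widens quickly as either number grows.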

Technically, MCP defines a client-server architecture over JSON-RPC 2.0. The AI agent acts as the MCP client. External tools run as MCP servers. Three primitives handle everything:

- Tools — callable functions the AI can invoke (querying a database, sending an email, making an API call)

- Resources — readable data sources the agent can access by URI (files, database tables, API responses)

- Prompts — reusable templates that structure how the AI interacts with a service
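To make the three primitives concrete, here is a minimal sketch of the JSON-RPC 2.0 request bodies an MCP client sends for each one. The method names `tools/call`, `resources/read`, and `prompts/get` come from the MCP specification; the tool name `query_database`, the URI `db://inventory/products`, and the prompt `summarize_ticket` are hypothetical placeholders. Transport (stdio or HTTP) and the initialization handshake are deliberately left out.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC ids only need to be unique within a session


def jsonrpc_request(method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request object."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": method, "params": params}


# Tool: a callable function with arguments (hypothetical tool name).
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "query_database", "arguments": {"sql": "SELECT sku, qty FROM stock LIMIT 5"}},
)

# Resource: readable data addressed by URI (hypothetical URI).
read_resource = jsonrpc_request("resources/read", {"uri": "db://inventory/products"})

# Prompt: a reusable template the server fills in (hypothetical prompt name).
get_prompt = jsonrpc_request(
    "prompts/get", {"name": "summarize_ticket", "arguments": {"ticket_id": "T-123"}}
)

if __name__ == "__main__":
    for message in (call_tool, read_resource, get_prompt):
        print(json.dumps(message))
```

The `id` lets the client match each server response to its request: per JSON-RPC 2.0, the response carries the same `id` together with either a `result` or an `error` member.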

The adoption story is remarkable not because of the technical design — it’s solid, not magical — but because Anthropic donated it to the Linux Foundation in December 2025 instead of keeping it proprietary. That decision killed any vendor-control concern that might have slowed enterprise adoption. Once the three major AI labs all speak MCP, every tool in the ecosystem has a compelling reason to implement it. Network effects took over.

Block integrated MCP with Snowflake, Jira, Slack, Google Drive, and internal APIs, cutting the time its engineers spent on daily tasks by 75%. That’s not a demo; it’s a production deployment with measurable outcomes. One healthcare provider deployed MCP-enabled diagnostics that cut patient waiting time by 30% by letting an AI agent retrieve medical imaging in real time from hospital systems. MCP has crossed the line from “interesting protocol” to “infrastructure.”

Worth noting: the developer community hasn’t been entirely positive. “About 95% of MCP servers are utter garbage,” one developer wrote on Reddit. Another reported “token overhead with 30 MCPs turned my $2 chat into a $47 nightmare.” Security researchers found that nearly all publicly accessible MCP servers lacked authentication. These are real problems. The 2026 roadmap directly addresses them — OAuth 2.1 enterprise auth in Q2, MCP Registry with security audits in Q4. But anyone building on MCP in production right now needs to understand these aren’t solved yet.

A2A in Plain Language: The Protocol That Lets Agents Hire Each Other

Here’s the thing MCP doesn’t solve: what happens when two separate agents — built by different teams, on different platforms, using different frameworks — need to hand work between each other?

Imagine a customer service agent built on Claude that handles inbound support tickets. When a billing dispute comes in, it needs to delegate part of the resolution to a billing agent built on an internal system by the finance team. The Claude agent has no idea what the billing agent can do, what inputs it accepts, or how to authenticate with it. Without a standard, someone writes a brittle custom integration that breaks every time either agent changes. Multiply that across an enterprise with thirty specialized agents and you have an integration nightmare that scales worse than the N×M tool problem MCP solved.

A2A solves this with three mechanisms. First, Agent Cards — JSON metadata files that describe what an agent can do, what inputs it accepts, what outputs it produces, and how to authenticate with it. They live at a well-known URI (/.well-known/agent.json). Agents discover each other’s capabilities by reading these cards without any central registry required. Second, task lifecycle management — A2A has explicit states (“submitted,” “working,” “input-required,” “completed,” “failed”) that both agents track together. This matters for long-running tasks that can span hours or days. Third, streaming support via SSE — agents can stream partial results in real-time rather than waiting for full task completion.
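Because an Agent Card is just a JSON document served at a predictable path, discovery amounts to an HTTP GET. The sketch below builds a card for the billing agent from the earlier example and derives the well-known URL from an agent's base URL. The field names (`name`, `description`, `url`, `version`, `capabilities`, `skills`) are loosely modeled on the A2A spec but simplified, authentication details are omitted, and the domain and skill are hypothetical; check the exact schema (and the well-known filename) against the spec version you target.

```python
import json
from typing import Optional
from urllib.parse import urljoin

WELL_KNOWN_PATH = "/.well-known/agent.json"  # path as described in this article


def agent_card_url(base_url: str) -> str:
    """Return the well-known Agent Card location for an agent's base URL."""
    return urljoin(base_url, WELL_KNOWN_PATH)


# Simplified, hypothetical card for the billing agent in the example above.
billing_card = {
    "name": "Billing Agent",
    "description": "Resolves billing disputes and issues refunds.",
    "url": "https://billing.example.com/a2a",
    "version": "1.0.0",
    "capabilities": {"streaming": True},  # advertises streamed partial results (SSE)
    "skills": [
        {
            "id": "resolve_dispute",
            "name": "Resolve billing dispute",
            "description": "Investigates a disputed charge and proposes a resolution.",
        }
    ],
}


def find_skill(card: dict, skill_id: str) -> Optional[dict]:
    """Let a client agent check a card for a capability before delegating."""
    return next((s for s in card.get("skills", []) if s["id"] == skill_id), None)


if __name__ == "__main__":
    print(agent_card_url("https://billing.example.com"))
    print(json.dumps(billing_card, indent=2))
```

The customer service agent never needs the billing agent's code: it fetches the card, checks that a `resolve_dispute` skill exists, and only then opens a task.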

The stateful nature is A2A’s most underappreciated feature. MCP is fundamentally stateless — each tool call is independent. A2A tracks context across an entire workflow. An A2A task can be interrupted, resumed, request human input mid-flow, and complete asynchronously. For enterprise workflows where a process might start Monday and finish Wednesday, this isn’t optional complexity — it’s the entire point.
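The lifecycle states listed above can be read as a small state machine that both agents track. The transition table below is an illustrative assumption rather than a copy of the spec, and A2A defines further states (such as canceled) that are omitted here: a task can pause in input-required for a human, resume, and end in completed or failed, which are terminal.

```python
from enum import Enum


class TaskState(str, Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input-required"
    COMPLETED = "completed"
    FAILED = "failed"


# Illustrative transitions; terminal states have no outgoing edges.
ALLOWED = {
    TaskState.SUBMITTED: {TaskState.WORKING, TaskState.FAILED},
    TaskState.WORKING: {TaskState.INPUT_REQUIRED, TaskState.COMPLETED, TaskState.FAILED},
    TaskState.INPUT_REQUIRED: {TaskState.WORKING, TaskState.FAILED},
    TaskState.COMPLETED: set(),
    TaskState.FAILED: set(),
}


class Task:
    def __init__(self, task_id: str) -> None:
        self.task_id = task_id
        self.state = TaskState.SUBMITTED
        self.history = [self.state]  # both sides can audit how the task got here

    def transition(self, new_state: TaskState) -> None:
        """Move to a new state, rejecting transitions the table does not allow."""
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"{self.state.value} -> {new_state.value} is not allowed")
        self.state = new_state
        self.history.append(new_state)


if __name__ == "__main__":
    task = Task("billing-dispute-42")
    for step in (TaskState.WORKING, TaskState.INPUT_REQUIRED,  # pause for a human
                 TaskState.WORKING, TaskState.COMPLETED):      # resume, then finish
        task.transition(step)
    print([s.value for s in task.history])
```

The point is that the task, not the connection, carries the state: either agent can come back on Wednesday, read `state` and `history`, and continue from where Monday left off. Persisting the task (not shown) is what makes that work in practice.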

A real example from IBM: a retail store’s inventory agent uses MCP to interact with its product database. When that agent detects low stock, it delegates to a purchasing agent via A2A, which then communicates with external supplier agents to place orders. MCP handles the tool layer; A2A handles the delegation layer. Neither protocol could do the full job alone.

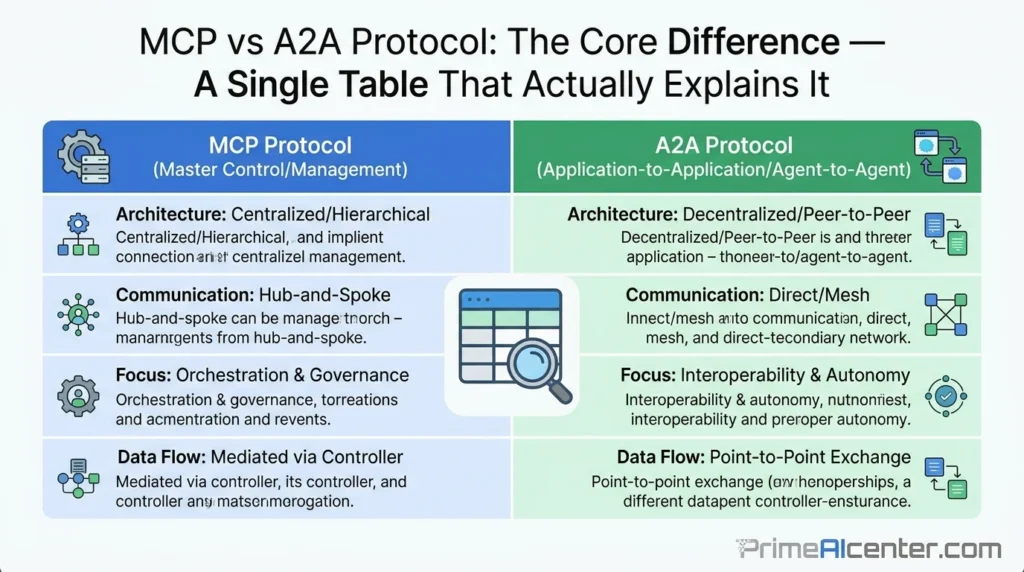

The Core Difference — A Single Table That Actually Explains It

| Dimension | MCP (Model Context Protocol) | A2A (Agent2Agent Protocol) |
|---|---|---|
| Created by | Anthropic (Nov 2024) | Google (Apr 2025) |
| Governance | Linux Foundation / AAIF | Linux Foundation |
| Problem it solves | Agent → Tool communication | Agent → Agent communication |
| Architectural layer | Vertical (agent to external systems) | Horizontal (agent to agent) |
| State management | Stateless (each call independent) | Stateful (task lifecycle tracked) |
| Primary entities | Tools, Resources, Prompts | Agents, Tasks, Agent Cards |
| Discovery mechanism | Server lists its tool schemas (tools/list) | Agent Cards (/.well-known/agent.json) |
| Wire format / transport | JSON-RPC 2.0 over stdio or HTTP | JSON-RPC 2.0 over HTTP, SSE for streaming |
| Long-running tasks | Not natively supported | Core feature (hours/days) |
| Ecosystem size | 8,600+ servers, 97M SDK downloads/month | 150+ organizations, 22K GitHub stars |
| SDK languages | Python, TypeScript, C#, Java | Python, JavaScript, Java, Go, .NET |
| Current maturity | Production-ready, widely deployed | v1.0 released, production deployments growing |
| Security (current state) | Authentication gaps being addressed (Q2 2026) | Signed Agent Cards, OAuth 2.0, TLS 1.2+ |
| Payments | Not supported | AP2 (Agent Payments Protocol), 60+ orgs |

One thing the table doesn’t capture: A2A’s security architecture was meaningfully more mature at launch than MCP’s was. Signed Agent Cards for cryptographic identity verification, OAuth 2.0 support in the spec, mandatory TLS. MCP’s security gaps are real and being patched; A2A built security in from the start. If you’re evaluating for a regulated environment, this difference matters.

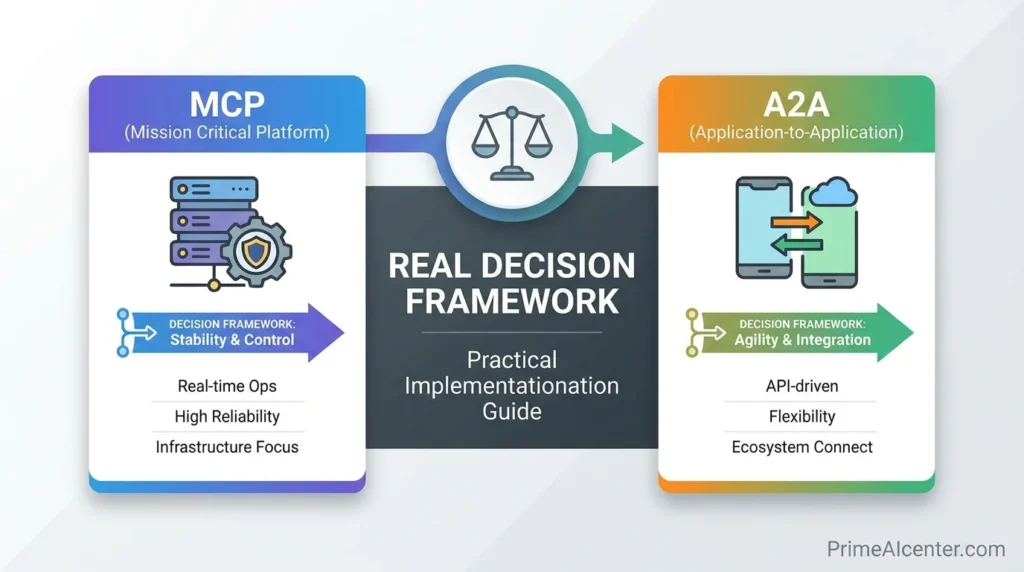

MCP vs A2A in Practice: A Real Decision Framework

At a high level, the difference between MCP and A2A is obvious. In practice, teams still struggle to decide what to implement. The problem isn’t understanding the definitions — it’s mapping them to real-world systems.

A useful way to think about it is this: MCP becomes necessary the moment your agent needs to interact with anything outside its own context. A2A becomes necessary the moment your system includes more than one autonomous decision-maker.

If you’re building a single intelligent assistant that pulls data, triggers workflows, or automates tasks, MCP is sufficient. If you’re building a system where multiple agents specialize, delegate, and coordinate work over time, A2A becomes unavoidable.

The mistake is treating them as alternatives. They are sequential layers. One expands capability. The other expands coordination.
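The rule of thumb in this section fits in a few lines. This is a hypothetical helper that encodes the heuristic above, not a substitute for an architecture review: any external system implies MCP, and more than one autonomous decision-maker adds A2A on top.

```python
def recommend_protocols(external_systems: int, autonomous_agents: int) -> list[str]:
    """Encode the heuristic: MCP for capability, A2A only once coordination is real."""
    layers = []
    if external_systems > 0:
        layers.append("MCP")  # the agent must reach tools, APIs, or data outside its context
    if autonomous_agents > 1:
        layers.append("A2A")  # several decision-makers need to delegate and coordinate
    return layers


if __name__ == "__main__":
    print(recommend_protocols(external_systems=5, autonomous_agents=1))   # single assistant
    print(recommend_protocols(external_systems=12, autonomous_agents=4))  # specialist team
```

MCP is checked first on purpose: as argued earlier, coordination without capability is just overhead.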

How They Work Together: The Full Agent Architecture Stack

The most important thing I can show you isn’t the difference between these protocols — it’s what happens when you use them together. Because in most production multi-agent systems, that’s what you’re actually doing.

Here’s the architecture that most enterprise deployments are converging on:

An orchestrator agent receives a complex request. It reads Agent Cards via A2A to find the right specialist agents for each subtask. It delegates work via A2A task management, tracking state across the workflow. Each specialist agent — billing, inventory, compliance, whatever — uses MCP to connect to the tools and data it needs to do its specific job. Results flow back up through A2A to the orchestrator, which synthesizes a final answer.

The separation is clean: A2A handles the coordination layer (who does what, in what order, with what inputs). MCP handles the execution layer (how each agent actually interacts with real-world systems). Neither protocol invades the other’s domain.
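The sketch below wires that stack together with in-memory stand-ins so the two layers are visible side by side. Nothing here uses a real SDK: `mcp_call` is a hypothetical stand-in for an MCP `tools/call`, `a2a_send_task` is a hypothetical stand-in for an A2A task request, and the agents, skills, and tools are all made up. The orchestrator only ever sees Agent Cards and task results; only the specialists touch tools.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def mcp_call(tools: Dict[str, Callable[..., str]], name: str, **arguments: str) -> str:
    """Stand-in for an MCP tools/call: look up a tool by name and invoke it."""
    return tools[name](**arguments)


@dataclass
class SpecialistAgent:
    card: dict  # what other agents can discover (A2A layer)
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)  # MCP layer

    def handle(self, skill: str, payload: str) -> str:
        # Execution layer: the specialist decides which of its own tools to use.
        if skill == "check_stock":
            return mcp_call(self.tools, "inventory_db", sku=payload)
        if skill == "place_order":
            return mcp_call(self.tools, "supplier_api", sku=payload)
        raise ValueError(f"unknown skill {skill!r}")


def a2a_send_task(agent: SpecialistAgent, skill: str, payload: str) -> dict:
    """Stand-in for an A2A task request; returns a finished task record."""
    return {"state": "completed", "result": agent.handle(skill, payload)}


class Orchestrator:
    def __init__(self, agents: List[SpecialistAgent]) -> None:
        self.agents = agents

    def find_agent(self, skill: str) -> SpecialistAgent:
        # Coordination layer: discovery by reading cards, not by importing code.
        for agent in self.agents:
            if any(s["id"] == skill for s in agent.card["skills"]):
                return agent
        raise LookupError(f"no agent advertises {skill!r}")

    def run(self, sku: str) -> List[str]:
        results = []
        for skill in ("check_stock", "place_order"):
            task = a2a_send_task(self.find_agent(skill), skill, sku)
            results.append(task["result"])
        return results  # a real orchestrator would synthesize these with a model


if __name__ == "__main__":
    inventory = SpecialistAgent(
        card={"name": "Inventory Agent", "skills": [{"id": "check_stock"}]},
        tools={"inventory_db": lambda sku: f"{sku}: 3 units left"},
    )
    purchasing = SpecialistAgent(
        card={"name": "Purchasing Agent", "skills": [{"id": "place_order"}]},
        tools={"supplier_api": lambda sku: f"ordered 100 x {sku}"},
    )
    print(Orchestrator([inventory, purchasing]).run("SKU-123"))
```

The seam is the point: replacing the purchasing agent with one built by another team only requires a new card that advertises `place_order`, while replacing its supplier integration only touches that agent's `tools`.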

Developers on DEV.to working on multi-agent hiring systems described hitting this wall: “The MCP side was straightforward. Expose a tool, define the schema, done. The A2A question is harder — it’s about domain semantics that the protocol deliberately doesn’t dictate.” That observation captures something real. A2A gives you the transport and handshake; it doesn’t tell agents what to negotiate about. Every vertical either builds shared semantics or ends up with bespoke schemas pretending to be interoperable. Payments have already split: Visa built the Trusted Agent Protocol (TAP), Mastercard built Agent Pay, and Stripe partnered with OpenAI on the Agentic Commerce Protocol (ACP, not to be confused with the Agent Communication Protocol discussed later). Domain-layer standards are coming, but they’re not here yet.

For practical guidance on building agent workflows that use both protocols, our AI workflow automation guide 2026 covers the full stack including n8n, Make, and Relevance AI — tools that are already abstracting over MCP and A2A at the integration layer. And if you’re building agents from scratch, our complete AI agent architecture guide walks through orchestration patterns in detail.

The Hidden Cost of MCP (That Nobody Mentions)

MCP dramatically reduces integration complexity, but it introduces a different kind of cost that many teams only discover in production: context overhead.

Each MCP server adds tokens, latency, and potential failure points. In small setups, this is negligible. At scale, with 10, 20, or 30 connected tools, it becomes a real architectural constraint.

Developers have already reported cases where a simple interaction became significantly more expensive due to the number of MCP connections involved. This isn’t a flaw in the protocol — it’s a tradeoff.

The solution is not to avoid MCP, but to design with constraints in mind: limit active connections, prioritize high-value tools, and treat MCP servers as infrastructure, not convenience plugins.
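One way to apply those constraints is to give each agent an explicit token budget for tool definitions and expose only the tools that fit. The sketch below is a hypothetical greedy selector: it estimates each definition's cost with a rough four-characters-per-token heuristic (an assumption; use your model's actual tokenizer in practice) and keeps the highest-priority tools that fit. The tool names, priorities, and budget are made up; the `inputSchema` field mirrors how MCP tool definitions describe their arguments.

```python
import json
from typing import List


def estimate_tokens(tool_definition: dict) -> int:
    """Very rough heuristic: about four characters of JSON per token."""
    return max(1, len(json.dumps(tool_definition)) // 4)


def select_tools(tools: List[dict], budget_tokens: int) -> List[str]:
    """Greedily keep the highest-priority tools whose definitions fit the budget."""
    chosen, spent = [], 0
    for tool in sorted(tools, key=lambda t: t["priority"], reverse=True):
        cost = estimate_tokens(tool["definition"])
        if spent + cost <= budget_tokens:  # skip tools that would overflow, keep trying
            chosen.append(tool["definition"]["name"])
            spent += cost
    return chosen


def make_tool(name: str, description: str, priority: int) -> dict:
    definition = {"name": name, "description": description,
                  "inputSchema": {"type": "object", "properties": {}}}
    return {"priority": priority, "definition": definition}


if __name__ == "__main__":
    catalog = [
        make_tool("query_warehouse", "Run read-only SQL against the warehouse. " * 5, priority=10),
        make_tool("create_ticket", "Open a ticket in the issue tracker.", priority=8),
        make_tool("post_message", "Post a message to a team channel.", priority=5),
        make_tool("legacy_export", "Export reports in a legacy format. " * 20, priority=1),
    ]
    print(select_tools(catalog, budget_tokens=150))
```

Greedy selection is not optimal in general (this is a knapsack problem), but it is simple and predictable, which makes it easy to explain why a given tool was left out of an agent's context.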

Teams that ignore this end up with systems that are powerful on paper but inefficient in practice.

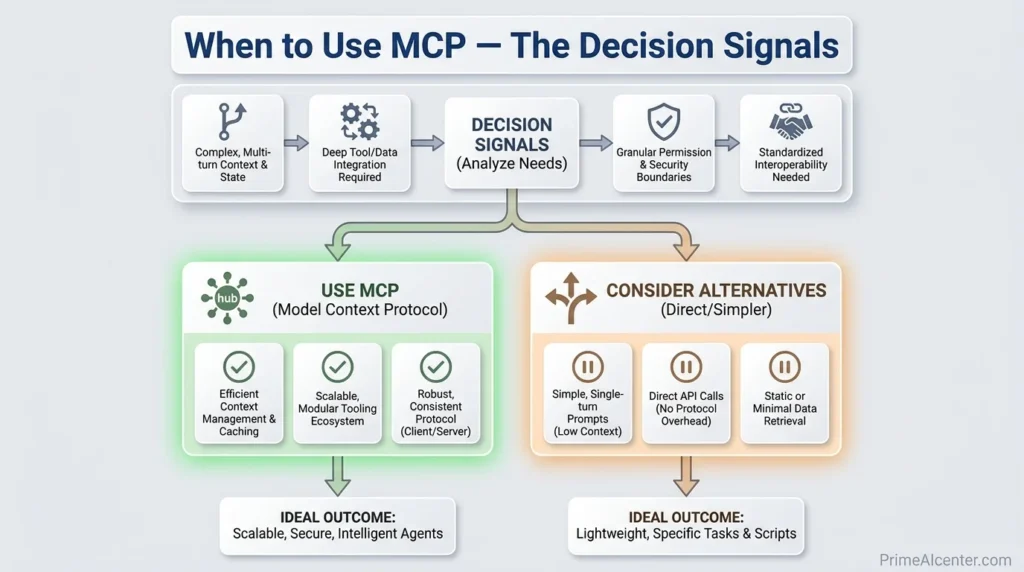

When to Use MCP — The Decision Signals

Use MCP when your agent needs to reach outside its own context. Specifically:

- You’re connecting a single agent to external APIs, databases, file systems, or cloud services

- You want integrations that work across multiple AI platforms (Claude, GPT-4, Gemini, Cursor) without rebuilding

- You’re building developer tooling where IDE assistants need project context

- Your use case is well-served by stateless, request-response tool calls

- You need to leverage the existing ecosystem — there are already 8,600+ MCP servers for most common tools

MCP is the right entry point for almost every team. An agent with no tools is an agent with no hands. Before worrying about A2A coordination, you need MCP tool access. Torchproxies’ guide puts it bluntly: “You need MCP first. An agent that can coordinate via A2A but has no tool access via MCP is essentially useless.”

For practical implementation, our WebMCP guide covers the specific MCP implementation patterns for web-based agent integrations, including authentication and remote server deployment.

What MCP Looks Like in Real Systems

The Block deployment is the reference case. They integrated MCP servers for Snowflake, Jira, Slack, Google Drive, and internal APIs simultaneously. Previously, each of those integrations required separate custom development for each AI tool they used internally. MCP turned N×M into N+M. Bloomberg deployed MCP for financial data access. Amazon used it across their internal tool ecosystem. The pattern is consistent: enterprises with multiple AI tools and multiple internal data systems find that the integration overhead savings justify the implementation cost quickly.

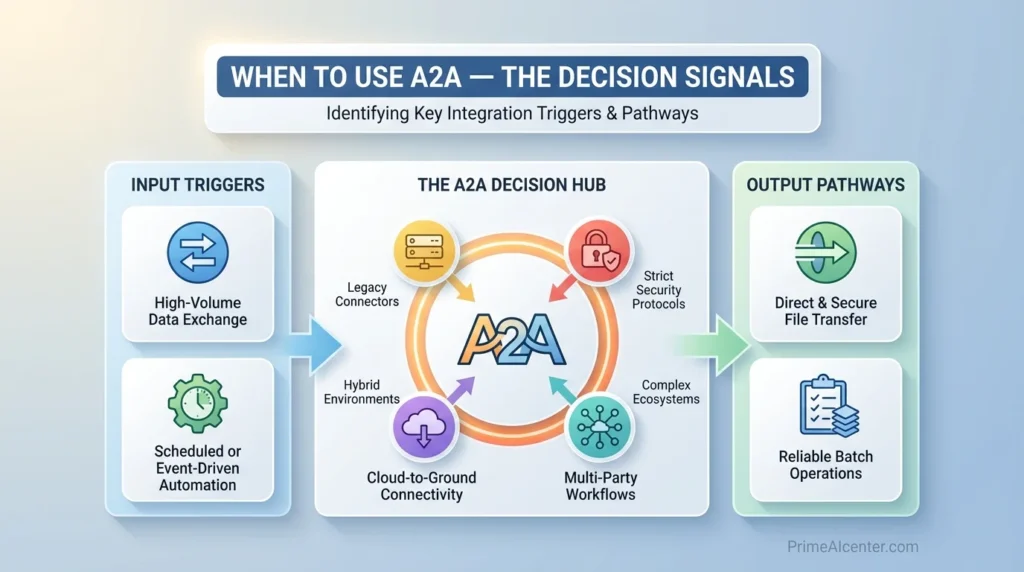

When to Use A2A — The Decision Signals

Use A2A when your system involves multiple separate agents that need to collaborate autonomously. Specifically:

- You have specialized agents built by different teams or vendors that need to hand work between each other

- Your workflows span multiple steps, multiple days, or require async operations with status tracking

- You need agents to discover each other’s capabilities dynamically without hardcoded integrations

- You’re operating in regulated environments where agent interactions need to be auditable and cryptographically verified

- You’re building payment or transaction workflows between agents (AP2 protocol)

A2A is meaningfully less mature than MCP in terms of developer ecosystem. If you’re evaluating in April 2026, the 150 organizations supporting it are mostly large enterprises and platform vendors — not yet the long tail of community MCP server builders. The v1.0 spec is stable, but production case studies are still emerging. Cisco’s quote in the announcement says it best: “This work is too important and too urgent to do alone.” That’s an organization that’s committed, not one that’s already optimized.

The supply chain use case is where A2A is already proving itself most clearly. Procurement agent, logistics agent, inventory agent, supplier agents — all from different vendors, all needing to coordinate on multi-day fulfillment workflows. A2A gives them the common protocol layer that makes this possible without custom integration contracts between every pair. For enterprise deployment patterns, our enterprise AI agent deployment guide covers governance frameworks and vendor evaluation for multi-agent architectures.

The ACP Question: A Third Protocol?

You’ll run into ACP (Agent Communication Protocol) in architecture discussions, especially anything involving IBM’s BeeAI or regulated industry deployments. It’s worth a quick clarification.

ACP started as an IBM-led effort to standardize agent messaging with an emphasis on structured collaboration patterns, REST-native architecture, and auditable message threading. It has real merit for environments that need highly traceable agent interactions, such as pharmaceutical FDA documentation, financial audit trails, and regulated healthcare. However, as A2A gained momentum and broader organizational support, the two effectively converged in scope. The Linux Foundation consolidated governance, and most teams evaluating ACP today are directed toward A2A instead. ACP isn’t dead, but for most use cases in 2026 it’s not a parallel production standard you need to evaluate against A2A.

There’s also ANP (Agent Network Protocol) — a community-driven effort for decentralized agent discovery without central registries. Worth watching for specific use cases (open agent networks, cross-organizational discovery without shared infrastructure), but not yet production-ready at the level of MCP or A2A.

Security: The Gap Nobody Talks About Enough

Here’s something most MCP vs A2A guides skip because it’s uncomfortable: the security situation for both protocols in production is genuinely challenging, though in different ways.

MCP’s security problem was identified by researchers scanning publicly accessible servers in early 2025: nearly all of them lacked authentication. Not limited auth — no auth. That means anyone who discovered an MCP server endpoint could potentially enumerate internal tool capabilities and in some cases access sensitive data. The community acknowledged the problem, the 2026 roadmap addresses it with OAuth 2.1 and enterprise identity provider integration landing in Q2 2026, but these aren’t shipped yet. If you’re deploying MCP in production right now, you need to build your own authentication layer or ensure your MCP servers are internal-only — not just assume the protocol handles it.
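If you are building that layer yourself, the minimum is to reject any request to the MCP server's HTTP endpoint that does not carry a valid credential. The sketch below is a framework-agnostic check, assuming a single shared bearer token read from a hypothetical `MCP_SERVER_TOKEN` environment variable; it uses a constant-time comparison so the token cannot be recovered through response timing. Treat it as a stopgap, not a substitute for the OAuth-based flow on the roadmap.

```python
import hmac
import os
from typing import Mapping, Optional


def extract_bearer_token(headers: Mapping[str, str]) -> Optional[str]:
    """Return the token from an 'Authorization: Bearer <token>' header, if present."""
    # HTTP header names are case-insensitive, so normalize before lookup.
    normalized = {k.lower(): v for k, v in headers.items()}
    scheme, _, token = normalized.get("authorization", "").partition(" ")
    if scheme.lower() != "bearer" or not token.strip():
        return None
    return token.strip()


def is_authorized(headers: Mapping[str, str], expected_token: str) -> bool:
    """Constant-time check of the presented bearer token against the expected one."""
    presented = extract_bearer_token(headers)
    if presented is None or not expected_token:
        return False
    return hmac.compare_digest(presented.encode(), expected_token.encode())


if __name__ == "__main__":
    expected = os.environ.get("MCP_SERVER_TOKEN", "change-me")  # hypothetical variable name
    print(is_authorized({"Authorization": f"Bearer {expected}"}, expected))
    print(is_authorized({}, expected))
```

Combine it with network placement: a server bound to `127.0.0.1` or reachable only from a private network is not exposed to the kind of public scanning that found the unauthenticated servers in the first place.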

A2A’s security design is more mature at spec level — Signed Agent Cards for cryptographic identity, mandatory TLS 1.2+, OAuth 2.0 support from v1.0. The problem is that A2A is still in early production deployment. The security architecture on paper is strong; real-world adversarial testing at scale hasn’t happened yet. Trust the spec, verify in your threat model.

For WhatsApp AI agents and similar consumer-facing multi-agent deployments where MCP provides tool access, our WhatsApp AI agents guide covers the relevant security architecture.

The Real Risk: Overexposed Agent Surfaces

The biggest risk isn’t just missing authentication — it’s exposing too much capability through a single agent interface.

When an MCP-enabled agent has access to multiple internal systems, it effectively becomes a unified entry point into your infrastructure. If compromised, the blast radius is significantly larger than traditional API exposure.

With A2A, the risk shifts. Instead of tool access, the concern becomes trust between agents — how do you verify that another agent is acting within expected boundaries?

In both cases, the protocol is not the security layer. Your architecture is.
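On the A2A side, one concrete boundary is an explicit policy of which caller may invoke which skill, checked before any task is accepted. This is a hypothetical default-deny sketch: the agent identifiers and skill names are made up, and in a real deployment the caller identity would come from authenticated credentials, never from a self-reported field in the request body.

```python
from typing import Dict, FrozenSet

# Hypothetical policy: which authenticated caller may request which skills.
SKILL_POLICY: Dict[str, FrozenSet[str]] = {
    "support-orchestrator": frozenset({"resolve_dispute"}),
    "finance-orchestrator": frozenset({"resolve_dispute", "issue_refund"}),
}


def may_invoke(caller_id: str, skill_id: str) -> bool:
    """Default deny: unknown callers and unlisted skills are rejected."""
    return skill_id in SKILL_POLICY.get(caller_id, frozenset())


def accept_task(caller_id: str, skill_id: str) -> dict:
    """Gate an incoming task request before any work (or tool access) starts."""
    if not may_invoke(caller_id, skill_id):
        return {"accepted": False, "reason": f"{caller_id} may not call {skill_id}"}
    return {"accepted": True}


if __name__ == "__main__":
    print(accept_task("support-orchestrator", "resolve_dispute"))
    print(accept_task("support-orchestrator", "issue_refund"))
```

This limits the blast radius in both directions: a compromised support orchestrator can request dispute resolutions but not refunds, and an unknown agent gets nothing at all.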

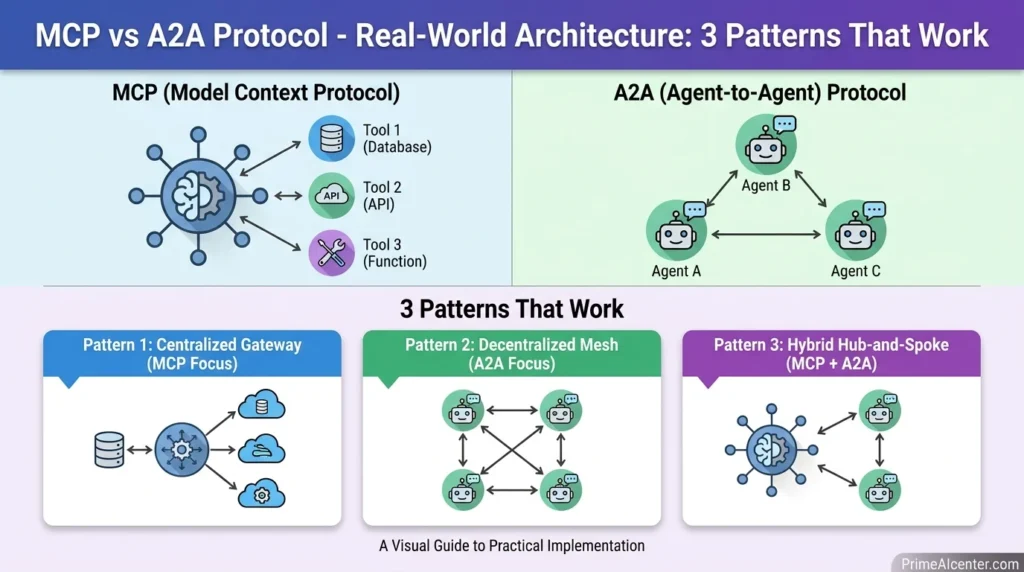

Real-World Architecture: 3 Patterns That Work

Pattern 1 — The Solo Agent with Full Tool Access (MCP only)

The simplest and most common deployment in 2026. A single agent (Claude, GPT-4, whatever) connected via MCP to ten or fifteen internal and external tools. No agent-to-agent coordination needed — just one model doing complex work with rich tool access. Block’s deployment is essentially this at scale. This is where most teams should start.

Pattern 2 — The Coordinated Specialist Team (MCP + A2A)

An orchestrator agent receives user requests and delegates via A2A to specialist agents. Each specialist uses MCP to access its specific tools. The orchestrator synthesizes results. This is the architecture IBM describes for supply chain — procurement, logistics, inventory agents working together, each with their own MCP tool connections, coordinating via A2A. This is where most enterprise AI projects are heading by end of 2026.

Pattern 3 — The Cross-Organizational Agent Network (A2A primary, MCP at leaf nodes)

Multiple organizations’ agents collaborating: supplier agents from different companies, healthcare systems’ agents exchanging patient data, financial institutions’ compliance agents. A2A handles the cross-org communication and authentication. MCP handles each organization’s internal tool access. This is the most ambitious pattern and the one the AP2 payments extension is enabling. Emerging in 2026, more common in 2027.

For teams building any of these patterns for content or business workflows, our best AI tools for content creators guide and solopreneur AI tools guide cover the practical tool layer that sits on top of these protocols in real deployments.

The Honest Verdict: What Should You Actually Build On?

This is where I’ll be direct about my own position rather than hedging.

If you’re building anything with AI in 2026 and you’re not using MCP, you are doing unnecessary work. That’s not hype; it’s the conclusion I draw from watching MCP reach 97 million monthly downloads in 16 months. The ecosystem exists. The security gaps are real but addressable. The productivity gain from not writing custom connectors for every tool integration is immediate and measurable. Start with MCP. Always.

On A2A, I’m more cautious than most coverage. The protocol is sound. The v1.0 spec is stable. The enterprise partners are credible. But developer-level ecosystem maturity is 12-18 months behind MCP. When I look at teams building multi-agent systems in frameworks like LangGraph, CrewAI, and AutoGen — many are still handling agent coordination internally rather than adopting A2A as the coordination layer. That doesn’t mean A2A is wrong; it means the developer community hasn’t fully committed yet the way it did with MCP when OpenAI adopted it.

The tipping point for A2A will probably come when a major developer tool — Cursor, VS Code, Claude Code — ships native A2A support the way they shipped MCP. Until then, it’s more of an enterprise architecture decision than a developer default.

The answer for most teams: implement MCP now. Design your architecture to accommodate A2A — meaning, keep your agent boundaries clean and your interfaces well-defined. When A2A reaches MCP-level developer ecosystem maturity (probably Q3-Q4 2026), migration will be significantly cheaper if you’ve been building with clean agent boundaries rather than tightly coupled systems.

For the broader AI model context — which models currently support MCP and A2A best — our best AI chatbots 2026 guide covers native protocol support across Claude, GPT-4, Gemini, and the rest. And our best AI tools 2026 guide evaluates the full stack including tools that abstract over both protocols at the application layer.

What’s Coming on the 2026 Roadmap

The convergence signal is real and happening faster than most people realize. IBM confirmed at their Think 2026 conference that A2A and MCP are being unified around a single “agent card” format — one standard way to describe an entity whether it’s a tool (MCP) or an agent (A2A). The Linux Foundation’s Agentic AI Foundation is coordinating this convergence actively.

MCP Q2 2026: Enterprise authentication, meaning OAuth 2.1 with PKCE plus SAML/OIDC integration with identity providers such as Okta and Azure AD (now Microsoft Entra ID). This unlocks regulated industry deployments that currently can’t meet compliance requirements.
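PKCE (Proof Key for Code Exchange, RFC 7636) is the part of OAuth 2.1 that lets a client without a stored secret prove it is the same client that started the authorization flow. The S256 method below follows the RFC: a random `code_verifier` stays with the client, and only its SHA-256 hash, base64url-encoded without padding, is sent as the `code_challenge`. How MCP clients and servers wire this into their auth flow is defined by the MCP authorization spec, not by this snippet.

```python
import base64
import hashlib
import secrets


def make_code_verifier(num_bytes: int = 32) -> str:
    """Random verifier: 32 bytes encode to 43 URL-safe characters (RFC 7636 allows 43-128)."""
    return base64.urlsafe_b64encode(secrets.token_bytes(num_bytes)).rstrip(b"=").decode("ascii")


def s256_code_challenge(code_verifier: str) -> str:
    """code_challenge = BASE64URL(SHA256(ASCII(code_verifier))), without '=' padding."""
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


if __name__ == "__main__":
    verifier = make_code_verifier()
    print("code_verifier: ", verifier)  # kept secret by the client
    print("code_challenge:", s256_code_challenge(verifier))  # sent with the authorization request
```

The RFC's own test vector makes a quick self-check: the verifier `dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk` must produce the challenge `E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM`.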

MCP Q3 2026: Agent-to-agent coordination natively in MCP — one agent calling another as if it were a tool server. Hierarchical agent architectures through MCP without needing A2A separately. Whether this competes with A2A or complements it will depend on the implementation details.

MCP Q4 2026: MCP Registry — curated, verified server directory with security audits and SLA commitments. This directly addresses the “95% of MCP servers are garbage” problem by creating a quality tier.

A2A: Continued vertical expansion through the AP2 payments extension (60+ orgs already). Dynamic user experience negotiation mid-task. The announced one-year milestone on April 9, 2026 signals that A2A is treating this as a major maturity inflection point — expect developer adoption tooling to follow.

My prediction: by end of 2026, the distinction between MCP and A2A will feel less sharp to developers because each protocol is expanding into the other’s territory. The architectural principles will remain — tool layer vs coordination layer — but the tooling will paper over the distinction for common use cases. The teams that understand both protocols deeply now will have a meaningful advantage when that abstraction layer arrives.

For the broader context on where AI models and protocols are heading in Q2 2026, see our analysis on Claude Mythos Preview — Anthropic’s most capable model, which demonstrates what the MCP stack looks like when the underlying model is genuinely frontier-tier. And our GPT-5.5 (Spud) review covers how OpenAI’s next model is expected to extend its existing MCP and agentic capabilities.

Frequently Asked Questions

What is the main difference between MCP and A2A?

MCP (Model Context Protocol) standardizes how a single AI agent connects to external tools, APIs, and data sources. A2A (Agent2Agent Protocol) standardizes how multiple AI agents communicate and delegate tasks between each other. MCP is vertical — agent to tool. A2A is horizontal — agent to agent. They solve different problems and work best when used together.

Do I need both MCP and A2A?

For single-agent systems: MCP only is sufficient. For multi-agent systems where agents from different teams or vendors need to collaborate: you likely need both — MCP for each agent’s tool access, A2A for coordination between agents. Most enterprise AI architectures heading into late 2026 will use both at different layers of the stack.

Which is more widely adopted in 2026 — MCP or A2A?

MCP has significantly more developer adoption: 97 million monthly SDK downloads, 8,600+ community servers, and native support from every major AI provider. A2A has strong enterprise adoption: 150+ organizations including AWS, Google, Microsoft, Salesforce, SAP as of April 9, 2026, with production deployments in supply chain and financial services. Different adoption profiles — MCP won developers, A2A is winning enterprises.

Is A2A replacing MCP?

No. They operate at different architectural layers and neither can replace the other. An A2A-coordinated agent still needs MCP to access tools. An MCP-connected agent doesn’t need A2A unless it’s coordinating with other agents. The MCP 2026 roadmap actually includes agent-to-agent coordination features that may reduce the need for A2A in some architectures, but the core problem each protocol solves remains distinct.

Who created MCP and A2A?

MCP was created by Anthropic (November 2024) and donated to the Linux Foundation’s Agentic AI Foundation in December 2025. A2A was created by Google and launched in April 2025 with 50+ enterprise partners; it is now also governed by the Linux Foundation. Both are open-source under vendor-neutral governance.

What is an Agent Card in A2A?

An Agent Card is a JSON metadata file that describes an agent’s capabilities, accepted inputs, produced outputs, and authentication requirements. It lives at a well-known URI (/.well-known/agent.json) on the agent’s domain. Other agents discover capabilities by reading Agent Cards — eliminating the need for a central agent registry. Think of it as a résumé that any agent can read to understand what you can do.

Can I use MCP and A2A together?

Yes — and for most production multi-agent systems, you should. The standard architecture: an orchestrator agent uses A2A to coordinate specialist agents. Each specialist agent uses MCP to access its required tools and data sources. The two protocols operate at different layers without interfering. IBM’s retail inventory management example shows this clearly: MCP handles the database queries, A2A handles the delegation between inventory, purchasing, and supplier agents.

What is MCP vs A2A vs ACP?

MCP = agent to tools. A2A = agent to agent (Google/Linux Foundation). ACP = Agent Communication Protocol (IBM/BeeAI), an alternative agent-to-agent standard that has largely converged with A2A in scope. For most teams evaluating in 2026, ACP has been effectively superseded by A2A’s broader organizational support. ACP retains relevance for highly regulated environments needing auditable message threading, but it’s not a mainstream parallel choice.

Where can I start with MCP today?

The MCP ecosystem is accessible at modelcontextprotocol.io. Python and TypeScript SDKs are available on GitHub. Our WebMCP guide covers implementation patterns specifically for web-based integrations. For the full agent architecture context, our AI agent guide covers how MCP fits into production agent architectures end-to-end.

Where can I start with A2A today?

The A2A project is at google.github.io/A2A. The v1.0 specification is stable. SDKs exist in Python, JavaScript, Java, Go, and .NET. The GitHub repository has crossed 22,000 stars. The April 9, 2026 one-year anniversary announcement confirmed production readiness for enterprise deployments.

Sources: A2A Protocol 150 Organizations Announcement (April 9, 2026), Digital Applied — MCP 97M Downloads, Torchproxies — Developer Guide, Bonjoy — Three Protocol Comparison, Byteiota — MCP Standard Analysis, DEV.to — Complete Protocol Guide, DigitalOcean — Architecture Guide, Wikipedia — MCP, Google Developers Blog — A2A Launch, Zuplo — One Year of MCP, OneReach.ai — Enterprise Protocol Guide, IBM — A2A Protocol Explainer.