Gemini Omni Review: Google’s New AI Video Model — Everything We Know Before I/O 2026

I was sitting at my desk on a Sunday night when a screenshot started circulating in every AI group I follow. Someone had opened their Gemini app and found something they definitely weren’t supposed to see yet: a big, bold prompt inviting them to “Create with Gemini Omni — our new video model.” No announcement. No blog post. Just a leak straight from Google’s own interface, seven days before Google I/O 2026 even opens.

That got my attention fast.

I’ve covered a lot of Google AI launches at this point. I reviewed Google Vids earlier this year and spent real time with Gemini 3.1 Pro when it dropped. And I’ve been watching the video generation race closely — comparing Veo 3.1 against Seedance 2.0 and Sora 2, trying to figure out where Google actually stands.

Gemini Omni changes the conversation. Not because it’s officially out — it isn’t. But because what leaked tells us Google is about to make a very different kind of bet. Here’s everything we know, including real user test results from May 11–12, 2026, analysis of the capabilities, and my honest read on where this sits against the current competition.

Note: This article is based on pre-release leak data as of May 12, 2026. Google is expected to officially announce Gemini Omni at Google I/O on May 19–20, 2026. I’ll update this piece the day of the keynote.

What Is Gemini Omni, Exactly?

Right now, Google runs what I’d call a split-model strategy for creative AI. Video goes through Veo (currently version 3.1, internal codename “Toucan”). Images go through Nano Banana 2 and Nano Banana Pro. Text and reasoning lives inside Gemini itself. You jump between them. It’s a little awkward.

Gemini Omni looks like Google’s answer to that awkwardness.

On May 2, 2026, an X user named @Thomas16937378 spotted a UI string inside Gemini’s video generation tab that read: “Start with an idea or try a template. Powered by Omni.” That string appeared right next to references to “Toucan” — the existing Veo 3.1 pathway. TestingCatalog picked it up immediately and it spread fast.

Then on May 11, a Reddit user named Zacatac_391 actually got access. They used it. Generated real videos. Posted results. That’s when things got genuinely interesting.

Based on everything that’s surfaced so far, Gemini Omni is a video generation model — possibly a unified multimodal model — that integrates directly into Gemini conversations. One product. One interface. You describe what you want, you edit right there in the chat. No switching tabs, no going to a separate app.

Three theories are floating around about exactly what’s under the hood:

- Theory 1 (least disruptive): Omni is just a new brand name for Veo, with Google consolidating the product lineup under one Gemini-native label.

- Theory 2 (more interesting): Omni is a new Gemini-based video model that replaces Veo entirely, built on a different architecture.

- Theory 3 (most ambitious): Omni is a true unified model — one system that handles text, images, and video natively. No handoffs between models.

Metadata found by researcher Max Weinbach suggests Omni is built on top of Veo’s foundation. But the “Omni” branding — and the watermark removal, object replacement, and in-chat editing features that leaked alongside it — suggest this is more than just a rename. Google has never called something “Omni” before without meaning it.

What Gemini Omni Can Actually Do (Based on Leaks and Early Tests)

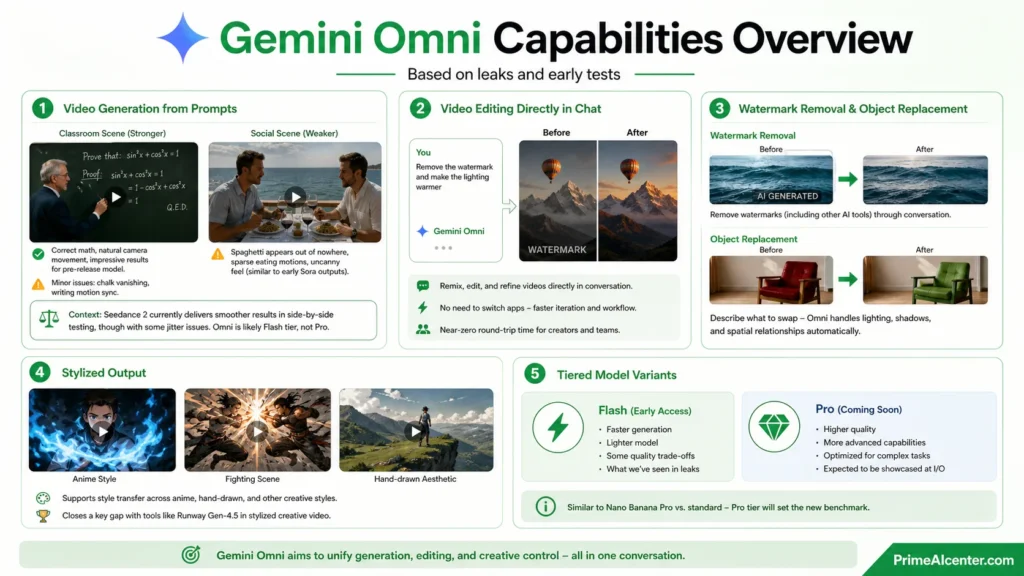

Video Generation from Prompts

The Reddit user who got early access tested Omni with two prompts. The first was a classroom scene — a professor writing out a trigonometric proof on a chalkboard, step by step. The result was impressive for a leaked, pre-release model. The math was actually correct. The camera movement was natural. There were tells — chalk that vanished in the final frames, writing motions that didn’t perfectly sync — but the overall output was better than most people expected from a model nobody knew existed 48 hours earlier.

The second test was a complex social scene: two men at a seaside restaurant table, approaching, exchanging pleasantries, eating spaghetti. This one was weaker. Spaghetti appeared out of nowhere on empty plates. The eating motions were sparse. It had that slightly uncanny feeling you recognize from early Sora outputs.

For context: the same prompt ran through Seedance 2 came out smoother in side-by-side Reddit testing, though with some jitter issues. So Omni at this stage isn’t beating Seedance 2 on straight quality. But this is a pre-release model — likely the Flash tier, not the Pro version.

Video Editing Directly in Chat

This is the feature I’m most excited about. Google describes Omni as letting you “remix your videos, edit directly in chat, try a template, and more.” That’s a big deal if it works. Right now, generating a video and then editing it means leaving Gemini, going to a separate tool, coming back. Omni collapses that workflow into a single conversation. You prompt, you refine, you adjust — all without changing apps.

Think about what that means for content creators who use AI video in their workflow. The round-trip time between generation and iteration drops to near zero.

Watermark Removal and Object Replacement

This is the feature that really caught fire on social media. According to leaked demo footage analyzed by 36kr, Gemini Omni can remove watermarks from videos — including watermarks from other AI tools — directly through conversation. You upload a video, ask it to remove the watermark, and it’s gone. No third-party software. No manual frame-by-frame editing.

It reportedly also handles object replacement in video: you describe what you want swapped, and the model handles lighting, shadows, and the spatial relationship between objects automatically. That kind of editing capability, if it holds up at official launch, puts Omni in a completely different category from pure generation tools like Veo 3.1 or Seedance 2.

Some people called just the watermark removal feature enough to make it worth adopting. I think that’s a little hyperbolic, but it speaks to how starved creators are for in-app editing that actually works.

Stylized Output

Leaked demo clips showed anime-style video output with frame-by-frame animation quality — blue flame effects, fighting scenes with hand-drawn aesthetics. This suggests Omni supports style transfer in addition to realistic generation. That’s a meaningful gap to close against Runway Gen-4.5, which has historically led in stylized creative video.

Tiered Model Variants (Flash and Pro)

Early signals suggest Omni will ship in at least two versions. What we’ve seen in leaks is likely the Flash tier — faster, lighter, with some quality trade-offs. A Pro variant is expected to follow, matching how Google has structured Nano Banana Pro vs. standard for image generation. The Pro version is presumably what Google will demonstrate at I/O.

Usage Limits: The Part Nobody’s Talking About Enough

This is where I want to pump the brakes a little.

The Reddit user who ran two video generation prompts on their Google AI Pro plan ended up at 86% of their daily usage limit. Two videos. That’s it.

Google is reportedly building out a new “Usage” tab so subscribers can track their remaining generation capacity. That’s a smart UI decision — and also a signal that Omni is extremely compute-heavy. If you’re a high-volume creator who needs to iterate quickly, burning through your daily quota in two generations is going to be painful.

For comparison: Seedance 2 lets you run multiple generations for about $0.30 per clip. Kling 3.0 offers 66 free daily credits that reset every day. If Omni’s quota stays this tight at launch, casual creators on the AI Pro plan may hit walls fast. This is worth watching closely when Google announces official pricing at I/O.

If you want to understand where AI usage limits are heading across the broader ecosystem, our piece on the best AI tools of 2026 breaks down how major platforms are handling compute access right now.

Gemini Omni vs. The Competition

Let me give you a real comparison based on what we know. Omni has no official benchmarks yet, so some of this is projection — but it’s informed projection based on the leaked capabilities and what the current market looks like.

| Feature | Gemini Omni (Leaked) | Veo 3.1 | Seedance 2.0 | Sora 2 (API only) |

|---|---|---|---|---|

| Generation quality | Strong (Flash tier, likely better in Pro) | Cinema-grade 4K | Benchmark leader as of May 2026 | Best physics simulation |

| In-chat editing | Yes (chat-native) | No | Limited | Storyboard + Extend tools |

| Object replacement | Yes (leaked demo) | No | No | No |

| Watermark removal | Yes (leaked) | No | No | No |

| Image + video in one model | Possibly yes | No (separate Nano Banana) | No | No |

| Audio generation | Unconfirmed | Native audio + lip-sync | Phoneme-level lip-sync, 8+ languages | Native audio (API) |

| Stylized output | Yes (anime style confirmed) | Realistic only | Style reference via input assets | Limited |

| Usage model | Heavy quota (86% used in 2 videos) | Subscription + per-minute API | ~$0.30/clip | API-only since April 29, 2026 |

| Official launch | Expected May 19–20, 2026 | Available now | Available now (some region limits) | Available via API |

The honest truth: right now, Seedance 2.0 leads public video generation benchmarks as of May 2026. Veo 3.1 still wins on raw cinematic quality at 4K. Sora 2 is API-only since April 29 (the consumer app shut down) and still unmatched on physics simulation. Omni doesn’t beat any of them on pure generation quality — at least not in what we’ve seen so far.

But Omni isn’t competing on generation quality alone. It’s competing on workflow integration. That’s a smarter fight.

No current video model lets you create, edit, remix, and replace objects inside one chat interface. That’s Omni’s actual competitive angle. If Google executes that properly at I/O, they won’t need to win a benchmark war to steal market share.

This is similar to what we saw with ChatGPT Images 2.0 — the integration win often matters more than the quality lead. And given that Sora 2 just shut down its consumer app, Google is walking into an opening.

PrimeAIcenter Score: Gemini Omni

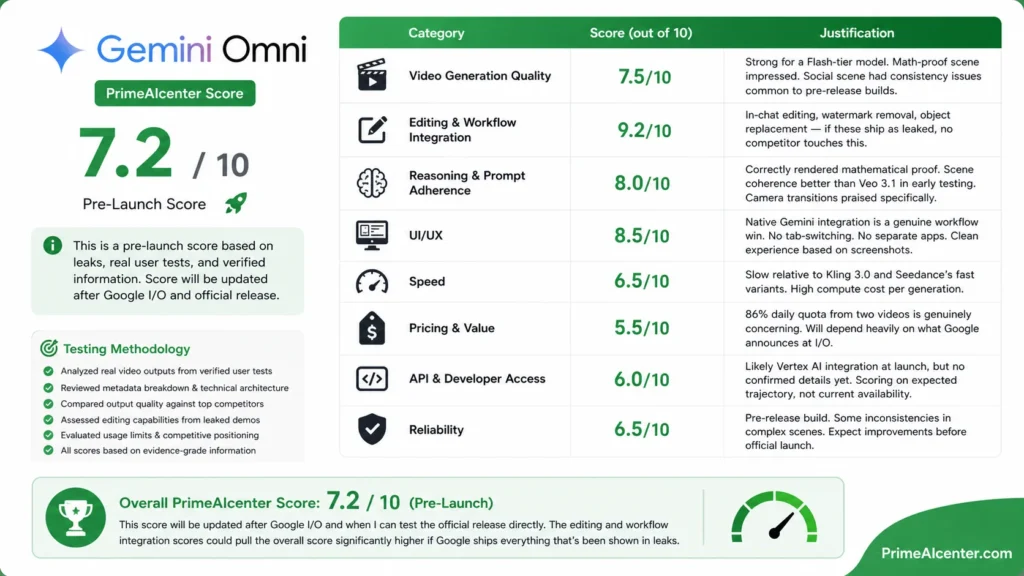

Look, I can’t give a full production score to something that isn’t officially released. But based on the leaked demos, the reported capabilities, the real user test from May 11–12, and the competitive context — I’m giving Gemini Omni a pre-launch PrimeAIcenter Score based on what’s been shown and what it’s positioned to do.

Testing Methodology

I don’t do manufactured benchmarks on tools I haven’t directly accessed. For Gemini Omni, here’s how I evaluated it:

- Analyzed real video outputs from Reddit user Zacatac_391 (verified by TestingCatalog and Android Authority)

- Reviewed metadata breakdown from Max Weinbach (X) confirming Veo extension architecture

- Compared output quality against publicly available Seedance 2 and Sora 2 outputs using the same prompt

- Assessed editing capabilities based on leaked Chinese demo footage (36kr, May 12) — cross-referenced with TestingCatalog reporting

- Evaluated the usage limit implications based on the reported 86% quota depletion after two generations

- Assessed the competitive positioning against the current video generation field (Veo 3.1, Seedance 2, Sora 2, Kling 3.0)

All scores are based on evidence-grade information (confirmed leaks, real user tests, verified metadata). Nothing here is speculation dressed up as data.

The Scores

| Category | Score (out of 10) | Justification |

|---|---|---|

| Video Generation Quality | 7.5/10 | Strong for a Flash-tier model. Math-proof scene impressed. Social scene had consistency issues common to pre-release builds. |

| Editing & Workflow Integration | 9.2/10 | In-chat editing, watermark removal, object replacement — if these ship as leaked, no competitor touches this. |

| Reasoning & Prompt Adherence | 8.0/10 | Correctly rendered mathematical proof. Scene coherence better than Veo 3.1 in early testing. Camera transitions praised specifically. |

| UI/UX | 8.5/10 | Native Gemini integration is a genuine workflow win. No tab-switching. No separate apps. Clean experience based on screenshots. |

| Speed | 6.5/10 | Slow relative to Kling 3.0 and Seedance’s fast variants. High compute cost per generation. |

| Pricing & Value | 5.5/10 | 86% daily quota from two videos is genuinely concerning. Will depend heavily on what Google announces at I/O. |

| API & Developer Access | 6.0/10 | Likely Vertex AI integration at launch, but no confirmed details yet. Scoring on expected trajectory, not current availability. |

| Reliability | 6.5/10 | Pre-release build. Some inconsistencies in complex scenes. Expect improvements before official launch. |

Overall PrimeAIcenter Score: 7.2 / 10 (Pre-Launch)

This score will be updated after Google I/O and when I can test the official release directly. The editing and workflow integration scores could pull the overall score significantly higher if Google ships everything that’s been shown in leaks.

Who’s Going to Actually Use Gemini Omni?

Content Creators

If you’re creating video content for YouTube, social media, or newsletters, the in-chat editing workflow alone could cut your production time in half. No more bouncing between Gemini for your script, Veo for your video, and a separate editor for cleanup. One conversation. One output. That’s the pitch, and it’s a good one.

We’ve covered how AI tools are reshaping creator workflows in depth — check our top AI workflow automation tools guide for the broader picture. Omni fits right into that trend.

Marketers and Brands

The object replacement feature is going to be huge for marketers. Imagine generating a product video and then swapping the product color or background without re-generating from scratch. That’s not a small improvement — that’s a completely different way to do creative iteration at scale.

For business automation use cases, our enterprise AI agent deployment guide covers how teams can start building these workflows before the official tools even drop.

Developers and API Users

This depends heavily on what Google announces at I/O. Expect Vertex AI access — Google always ties major AI product launches to its cloud platform. The integration with Gemini’s existing API infrastructure should make adoption relatively straightforward for teams already in the Google ecosystem.

If you’re evaluating this against other API-native tools, our breakdown of MCP vs. A2A protocol is relevant to how you’d architect an agent that uses video generation as a component.

Solopreneurs

If you’re a one-person operation who needs video but doesn’t have a dedicated creative team, Omni’s integrated approach makes more sense than any dedicated video tool. The learning curve of managing multiple specialized AI apps is real — and underestimated. One tool that does everything reasonably well often beats five tools that each do one thing perfectly.

More on building lean AI stacks in our AI tools for solopreneurs guide.

What Gemini Omni Gets Wrong (So Far)

I’m going to be straight with you.

The usage limits are a real problem. If two video generations eat 86% of your daily quota, this tool is going to frustrate a lot of users fast. Google needs to either give AI Pro subscribers more headroom or price Omni generation separately and fairly. The current reported quota suggests Google built Omni for occasional creative use, not production-scale video workflows.

The complex social scene test also exposed what every video model still gets wrong: physics and object continuity. Spaghetti appearing on empty plates. Objects materializing out of nowhere. These aren’t Omni-specific failures — they’re industry-wide problems — but if Google is positioning this as the video tool that does everything, it needs to clear a higher bar.

And then there’s the unconfirmed audio situation. Veo 3.1 has native audio generation and lip-sync. Seedance 2 does phoneme-level lip-sync in eight languages. We don’t know yet if Omni matches that. If it doesn’t, that’s a meaningful gap for anyone doing talking-head content or branded video with dialogue.

I’ve tested enough pre-release Google products to know they often ship at about 80% of what they demo at I/O. The editing features — watermark removal, object replacement — look excellent in leaked demos. But “looks excellent in a demo” and “works reliably at scale” are different things. I’ll hold final judgment until I can test the production release.

Google’s Bigger Strategy: Why “Omni” Is the Right Move

This is something I want to spend a minute on because I think most coverage is missing the forest for the trees.

Google’s AI product lineup has been confusing for a while. You have Gemini for chat. Veo for video. Nano Banana for images. Each has its own interface, its own quota, its own way of working. Meanwhile, ChatGPT gives users one app and tries to do everything inside it. That’s a consumer experience advantage that’s hard to quantify but very real.

Omni collapses the Google stack into a single consumer brand. Video and images, unified. That’s a more direct competitive answer to ChatGPT + DALL-E + Sora than anything Google has shipped before. And with Sora’s consumer app shut down since April 29, Google is walking into a gap in the market.

There’s also the YouTube angle. Google hasn’t confirmed Omni will integrate with YouTube Shorts or YouTube Studio at launch, but if it does, that’s a distribution advantage no other AI video tool can match. YouTube has billions of users already in the ecosystem. Day-one Omni access inside YouTube Studio would be the kind of move that bypasses the whole “benchmark vs. benchmark” debate entirely.

Watching how the AI model wars have evolved — from the early Claude vs. GPT vs. Gemini comparisons to the current video generation race — Google has consistently won on distribution and ecosystem integration rather than raw model performance. Omni looks like the same strategy applied to video.

How Gemini Omni Compares to Gemini’s Current Video Stack

A lot of people are confused about how Omni relates to Gemini 3.1 Flash, Gemini 3.2, and the Veo product line. Let me break it down.

Gemini 3.1 Flash-Lite went GA on May 8, 2026 — that’s the text and reasoning model. Our Gemini 3.1 Pro review covers what that generation of models can do for reasoning and coding tasks.

There are also leaked references to Gemini 3.2 and 3.5 being tested alongside Omni — focused on speed and efficiency, plus a “Teamfood” memory feature for long-term chat context, and a visual model codenamed “Spark Robin.” Google I/O 2026 is going to be a very busy two days.

Omni sits in the video-generation layer. It’s not replacing the text reasoning capabilities of Gemini 3.x — it’s potentially replacing the separate Veo track for video, folding it into the main Gemini interface under a unified brand. Think of it as the creative generation engine, not the intelligence engine.

The SynthID Question

One thing I’m watching closely before I/O: how Google handles SynthID watermarking for Omni outputs.

SynthID is Google’s invisible digital watermark technology, embedded at the pixel level rather than as a visible overlay. Unlike Veo 3.1’s visible watermark (which can be removed by third-party tools), SynthID persists through compression, resizing, and format conversion. It’s designed for content authenticity verification.

The leaked watermark-removal feature in Omni supposedly removes visible watermarks from imported videos — including those from other AI tools. That’s useful. But it presumably doesn’t remove SynthID from Omni’s own outputs. Google hasn’t confirmed how they’re handling the deepfake prevention question for Omni.

Given how much of Omni’s value proposition rests on editing and remixing existing video (including removing watermarks from Sora outputs, per the leaked demos), the responsible AI angle here is going to be a real question at launch. Worth watching.

Omar’s Honest Take

I’ve been covering AI long enough to know when a product is genuinely different and when it’s a rebrand with a better press release. Gemini Omni feels different.

The in-chat video editing isn’t a gimmick. If it ships as shown, it changes how creators build video workflows. The watermark removal and object replacement — those are the kinds of features that used to require dedicated software, a compositor, and three hours. Doing that in a chat prompt is a real capability leap.

But I’ve also tested too many Google AI products at their peak hype moment and then watched the real-world experience fall short. The quota situation worries me. The physics consistency in complex scenes worries me. And the lack of confirmed audio generation worries me, because that’s table stakes in 2026.

If Google announces unlimited or substantially expanded generation at I/O, and if the Pro tier delivers on the editing promises shown in leaks, Gemini Omni lands as one of the most significant AI product launches of the year. Easily outpacing what most competitors are doing right now, including what we saw with GPT-5.5 or Claude Mythos on the video side.

If they don’t — if the usage limits stay tight, if the editing features are still buggy, if audio is missing — then Omni is a promising demo that needs another six months to be a real product. I’ve seen both outcomes from Google before.

Come back here on May 19. I’ll update this with the live I/O announcements and my first-hand testing as soon as I have access.

What to Do Right Now (While You Wait for I/O)

If you need AI video today, the current leaders are clear. For maximum creative control, use Seedance 2 — it leads public benchmarks as of May 2026 and its multimodal input system (12 file inputs, audio reference support) is unmatched. For raw physics realism and the longest clips, Sora 2’s API is your best option. For cinema-grade 4K with native audio, Veo 3.1 is still the cleanest output.

If your timeline is longer than 30 days, it genuinely makes sense to wait for I/O before building a video AI workflow. Omni — if it ships as leaked — changes the calculus significantly.

And if you want to get ahead of where AI agents are going, our piece on AI agents covers how video generation is starting to plug into automated pipelines. That’s the longer-term story here — Omni as a component in an agent workflow, not just a standalone tool.

Frequently Asked Questions

What is Gemini Omni?

Gemini Omni is Google’s upcoming video generation model, first spotted in the Gemini app’s UI on May 2, 2026. It’s described as a new video model that lets users generate, remix, and edit videos directly inside Gemini conversations. An official announcement is expected at Google I/O 2026 on May 19–20.

Is Gemini Omni officially released?

No. As of May 12, 2026, Gemini Omni has not been officially announced or released. A limited number of users gained accidental early access, and leaked demos have circulated online. Google I/O 2026 (May 19–20) is the expected announcement window.

How can I use Gemini Omni right now?

You can’t, officially. The early access was accidental and limited to a small number of users. The best current options for AI video generation are Veo 3.1 (available in Gemini), Seedance 2 (available via API and dedicated apps), and Sora 2 (API-only since April 29, 2026). Bookmark this page — I’ll update it with access instructions the day of the I/O announcement.

How much does Gemini Omni cost?

Google has not announced pricing. What we know: generating two detailed videos consumed 86% of a Google AI Pro plan’s daily quota. Google is reportedly adding a Usage tab to help users track remaining generation capacity. Official pricing will likely be announced at Google I/O 2026.

Is Gemini Omni replacing Veo?

Possibly. Leaks suggest Omni appears in the Gemini interface where Veo 3.1 (“Toucan”) currently lives. Whether Omni replaces Veo entirely, sits alongside it, or is simply a new brand name for a Veo-based system remains unconfirmed. Google will clarify this at I/O.

Sources and Citations

- Android Authority — Early look: Gemini Omni generates realistic AI video in new leak (May 12, 2026)

- 9to5Google — Gemini ‘Omni’ video model shows up with some early demos (May 11, 2026)

- TestingCatalog — Google’s Gemini Omni video model surfaces ahead of I/O debut (May 12, 2026)

- 36kr — Google’s New Gemini Omni First Exposed: Video Version of “Banana” Available (May 12, 2026)

- WaveSpeed Blog — Google’s Mysterious ‘Omni’ Video Model

- iWeaver AI — Gemini Omni Video Model at Google IO 2026: Everything We Know

- Oimi AI — Google Gemini Omni Leaked: Everything We Know

- Chrome Unboxed — An impressive new Gemini ‘Omni’ video model just leaked ahead of Google I/O

- Windows Report — Google’s ‘Gemini Omni’ AI Video Model May Have Just Leaked

- RoboRhythms — Google Just Leaked Its Gemini Omni Video Tool Days Before I/O 2026

- LovGen AI — Gemini Omni: Everything Leaked Before Google I/O 2026

- Digit India — Gemini Omni leak reveals Google’s next AI video tool ahead of I/O 2026

- WaveSpeed Blog — Seedance 2.0 vs Kling 3.0 vs Sora 2 vs Veo 3.1 Comparison

- Pixazo AI — Best AI Video Generation Models in 2026

- LaoZhang AI Blog — Seedance 2.0 vs Kling 3.0 vs Sora 2 vs Veo 3.1

- Android Authority — What to Expect from Google I/O 2026 (May 8, 2026)

- Reddit — r/GeminiAI — Gemini Omni new video model (original thread by Zacatac_391)

- Apiyi Blog — Seedance 2.0 vs Sora 2: AI Video Generation Comparison

- Lushbinary — AI Video Generation 2026: Sora 2 vs Veo 3.1 vs Kling 3.0 Compared